Key Takeaways

- EMT precision is a timing problem first, so waveform checks must focus on early cycles and fast transients.

- High-detail modelling earns its cost only when it reproduces limits, logic states, and device interactions seen in recordings.

- A small set of repeatable waveform checks will keep event recreation honest and reviewable.

Accurate event recreation lets you replay a disturbance and trust the cause you identify. Published estimates place the annual U.S. cost of power outages between $28 billion and $169 billion, so wrong findings cost real time and money. You can’t fix what you can’t explain. EMT precision turns waveforms into evidence.

EMT precision matters because disturbances live in timing, not averages. A replay that matches RMS values but misses the first cycles will point you at the wrong device or setting. High-detail modelling adds effort, so it needs checks you can run and repeat. The goal stays simple: match the waveform parts your study will use.

EMT accuracy defines how closely simulations reproduce electrical events

EMT accuracy means your simulated voltage and current traces match measured waveforms on the same timeline. The match has to hold before the disturbance, during the first cycles, and through recovery. Phase, polarity, and sequence must line up, not just magnitude. If those checks fail, event recreation becomes unreliable.

A common case is replaying a feeder fault captured at a substation. You align pre-fault loading, apply the fault at the recorded time, and compare the voltage dip depth against the recorder. You also check current peaks and their decay, since DC offset and saturation shape the early cycles. The recovery shape matters too: a slow voltage return, for example, can point to stalled motors.
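To make the DC offset point concrete, here is a minimal sketch of the textbook asymmetrical fault current for a simple series R-L source. The function names, the 60 Hz frequency, the X/R ratio of 10, and the per-unit scaling are all illustrative assumptions rather than values from any recording, and the sketch ignores saturation entirely.

```python
import math


def fault_current(t_s, i_peak, freq_hz, x_over_r, inception_deg):
    """Asymmetrical fault current of a series R-L circuit (textbook form)."""
    omega = 2.0 * math.pi * freq_hz
    phi = math.atan(x_over_r)              # source impedance angle
    tau_s = x_over_r / omega               # L/R time constant in seconds
    alpha = math.radians(inception_deg)    # voltage angle at fault inception
    ac = math.sin(omega * t_s + alpha - phi)
    dc = -math.sin(alpha - phi) * math.exp(-t_s / tau_s)
    return i_peak * (ac + dc)


def first_cycle_peak(i_peak, freq_hz, x_over_r, inception_deg, dt_s=1e-5):
    """Largest absolute current in the first cycle after inception."""
    n = int(round(1.0 / (freq_hz * dt_s)))
    return max(
        abs(fault_current(k * dt_s, i_peak, freq_hz, x_over_r, inception_deg))
        for k in range(n + 1)
    )


# Illustrative values only: 60 Hz system, X/R = 10, 1.0 pu symmetrical peak.
phi_deg = math.degrees(math.atan(10.0))
symmetric = first_cycle_peak(1.0, 60.0, 10.0, phi_deg)         # no DC offset
offset = first_cycle_peak(1.0, 60.0, 10.0, phi_deg - 90.0)     # full DC offset
print(f"symmetric peak: {symmetric:.2f} pu, offset peak: {offset:.2f} pu")
```

The only difference between the two cases is the inception angle, which is why the recorded fault instant matters so much when you compare early current peaks against the recorder.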

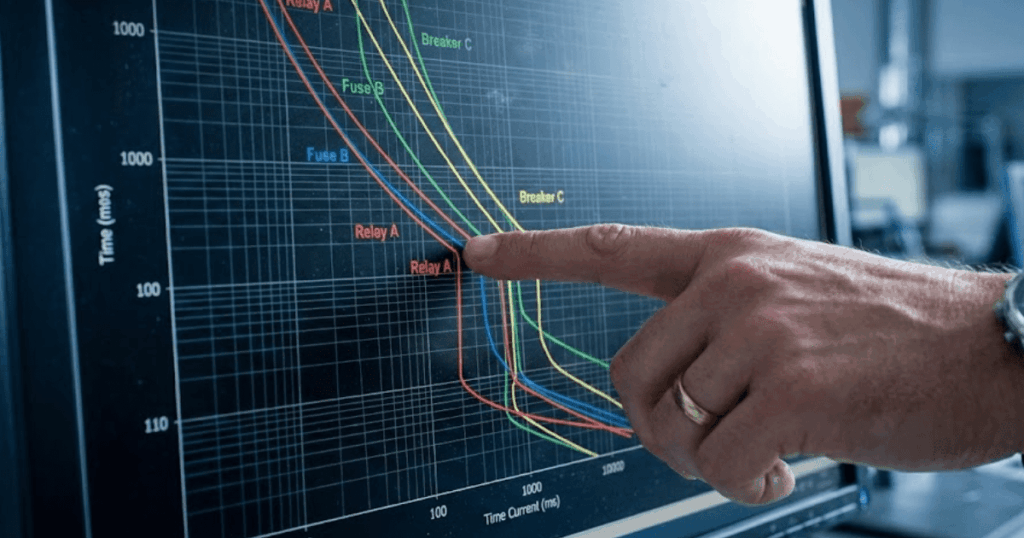

Accuracy is a set of pass/fail checks tied to what you need to decide next. Protection studies care about the first cycles because pickup and trip logic live there. Control studies care about the next few hundred milliseconds where limiters and synchronizing logic settle. Treat accuracy as a checklist, and your disturbance reproduction stays repeatable. It also keeps debates focused on measurable gaps.
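One way to turn that checklist into something runnable is sketched below. It compares a measured and a simulated trace window by window and reports pass or fail per window. The traces are synthetic stand-ins, and the window boundaries, tolerance, and function names are hypothetical choices made for illustration.

```python
import math


def window_check(t_s, measured, simulated, t_start, t_end, tol):
    """Return (passed, worst_abs_error) for samples with t_start <= t <= t_end."""
    errors = [abs(m - s) for t, m, s in zip(t_s, measured, simulated)
              if t_start <= t <= t_end]
    if not errors:
        raise ValueError("no samples fall inside the requested window")
    worst = max(errors)
    return worst <= tol, worst


def dip_trace(t_s, dip_start, dip_end, depth_pu=0.4, freq_hz=60.0):
    """Synthetic voltage: 1.0 pu sine that drops to depth_pu during the dip."""
    return [(depth_pu if dip_start <= t < dip_end else 1.0)
            * math.sin(2.0 * math.pi * freq_hz * t) for t in t_s]


# Synthetic stand-ins for a recorder trace and a replay (not real data).
t_s = [k * 1e-4 for k in range(3000)]
measured = dip_trace(t_s, 0.100, 0.200)
simulated = dip_trace(t_s, 0.102, 0.200)   # replay applies the fault 2 ms late

# Hypothetical checklist: (name, window start, window end, tolerance in pu).
checklist = [
    ("pre-disturbance", 0.00, 0.09, 0.05),
    ("first cycles", 0.10, 0.15, 0.05),
    ("recovery", 0.20, 0.29, 0.05),
]
results = {name: window_check(t_s, measured, simulated, a, b, tol)[0]
           for name, a, b, tol in checklist}
print(results)
```

In this synthetic case the replay matches before and after the dip, and only the first-cycles window exposes the 2 ms timing error. A real check would likely add phase, polarity, and sequence comparisons on top of this point-by-point error.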


Precise event recreation depends on capturing fast switching and transients

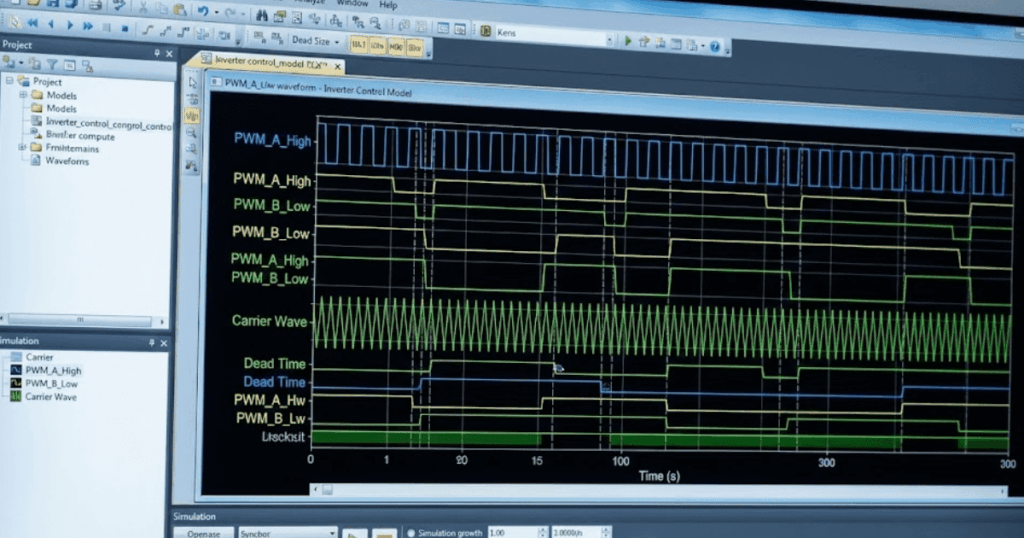

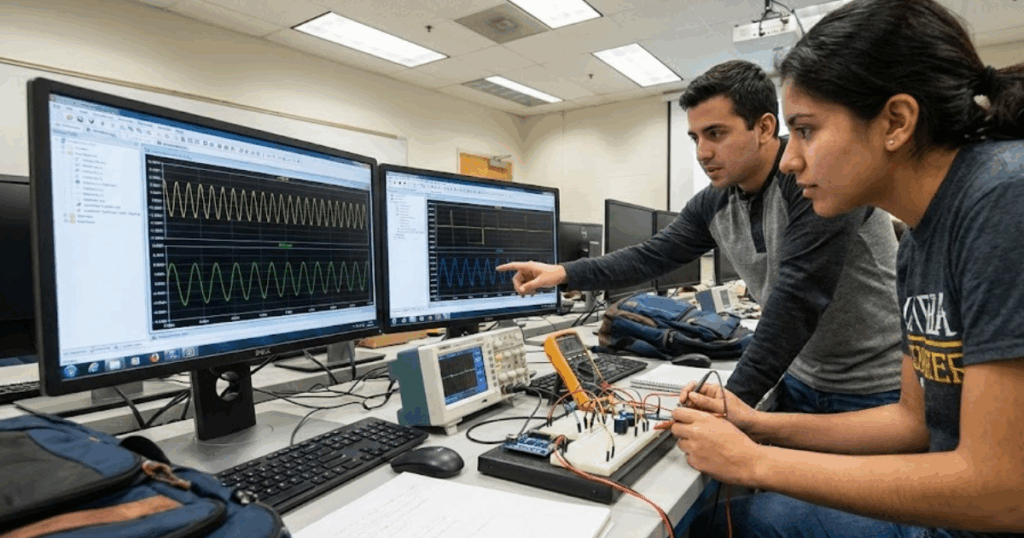

Precise event recreation depends on capturing the fast physics that shape the first milliseconds. EMT precision comes from modelling switching, conduction states, saturation, and line effects at a time step that can resolve them. Some inverter-connected generator models run with time steps as low as 1–2 µs, which shows how quickly key dynamics move. Coarser steps will blur peaks and shift event timing.

Capacitor bank switching is a clear illustration. The recorder often shows a voltage spike and bus ringing, not a clean step. Matching that ringing needs correct capacitor and reactor values, realistic upstream impedance, and a switch model that represents the closing instant. A small timing error will move the peak enough to break the match.
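As a rough sanity check before running a full model, the ringing frequency can be estimated from a lossless series L-C loop, and from that the number of time steps per oscillation. This is a simplified sketch: the 1 mH and 100 µF values are illustrative assumptions, and real ringing also depends on resistance and the rest of the network.

```python
import math


def ringing_frequency_hz(l_henry, c_farad):
    """Natural frequency of a lossless series L-C loop: 1 / (2*pi*sqrt(L*C))."""
    return 1.0 / (2.0 * math.pi * math.sqrt(l_henry * c_farad))


def samples_per_period(freq_hz, dt_s):
    """How many simulation steps land inside one oscillation period."""
    return 1.0 / (freq_hz * dt_s)


# Illustrative values only: 1 mH upstream inductance, 100 uF capacitor bank.
f0 = ringing_frequency_hz(1e-3, 100e-6)
print(f"estimated ringing frequency: {f0:.1f} Hz")
for dt_s in (500e-6, 50e-6, 5e-6):
    n = samples_per_period(f0, dt_s)
    print(f"dt = {dt_s * 1e6:5.0f} us -> {n:7.1f} steps per ring")
```

With only a handful of steps per ring, the simulated peak and its timing cannot be trusted, so an estimate like this helps pick a time step before any waveform comparison starts.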

Transformer energization, breaker pole timing, and cable energization also create short bursts that set initial conditions. A replay can look close after 200 ms, yet internal controller states will already be wrong. Treat the first milliseconds as a gate check. That habit prevents long, late-night tuning sessions.

High-detail modelling reveals disturbance behavior hidden by averaged models

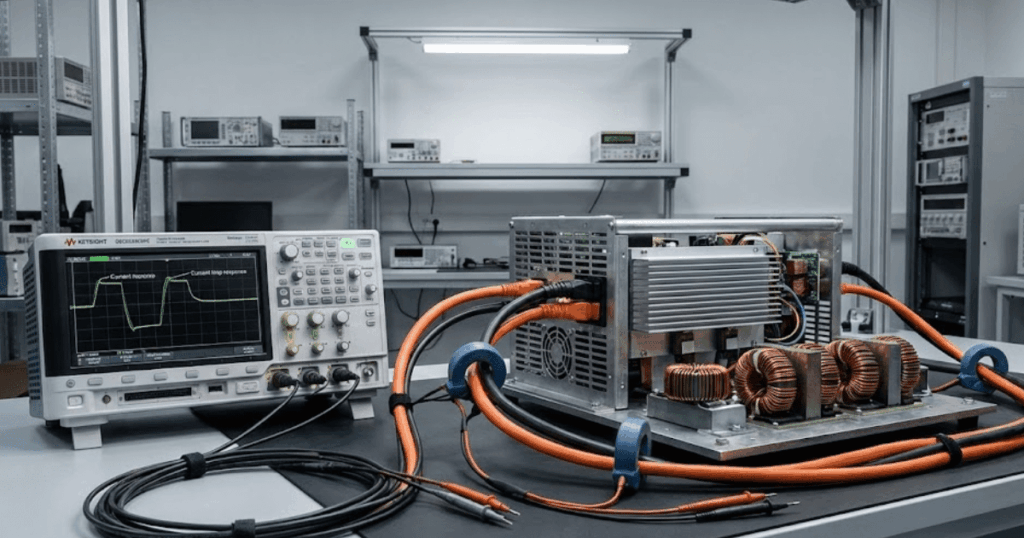

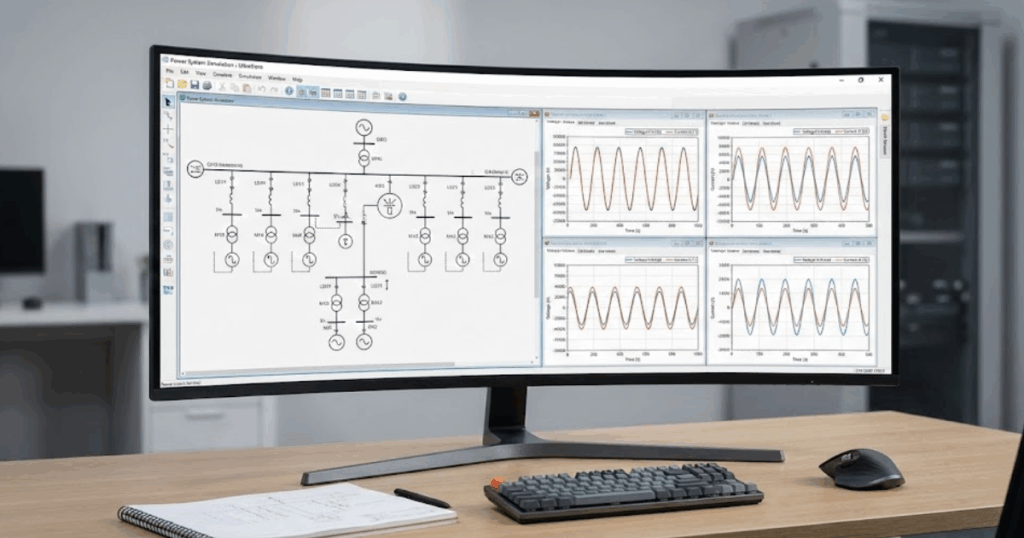

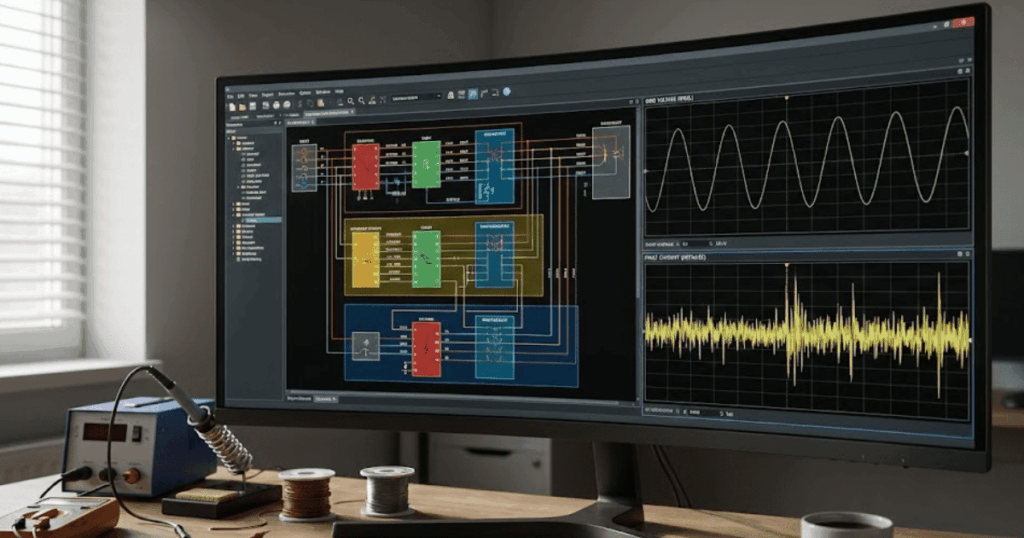

High-detail modelling reveals behavior that averaged models hide when limits and nonlinearities dominate. EMT will show current clipping, phase jumps, harmonic injection, and brief control mode switches that are smoothed out in averaged representations. Those details decide if equipment rides through, trips, or recovers cleanly. If the disturbance reproduction needs that decision, you need EMT detail.

An inverter ride-through event during a close-in fault shows the difference fast. An averaged model can hold current proportional to voltage and recover smoothly once voltage returns. A detailed EMT model will show current limiting, mode switching, and a short oscillation as synchronizing logic re-locks. That short window can explain either a second protection pickup or a negative-sequence current spike.

Detail also exposes interaction between devices. Two converters can look stable in isolation and still fight through a weak network, producing repeated limiter hits after clearing. With EMT detail, you can test fixes you can actually implement, such as adjusting a current limit ramp. Without it, you’ll tune a model to match a story, not the event.

Accurate EMT results improve fault analysis and protection coordination studies

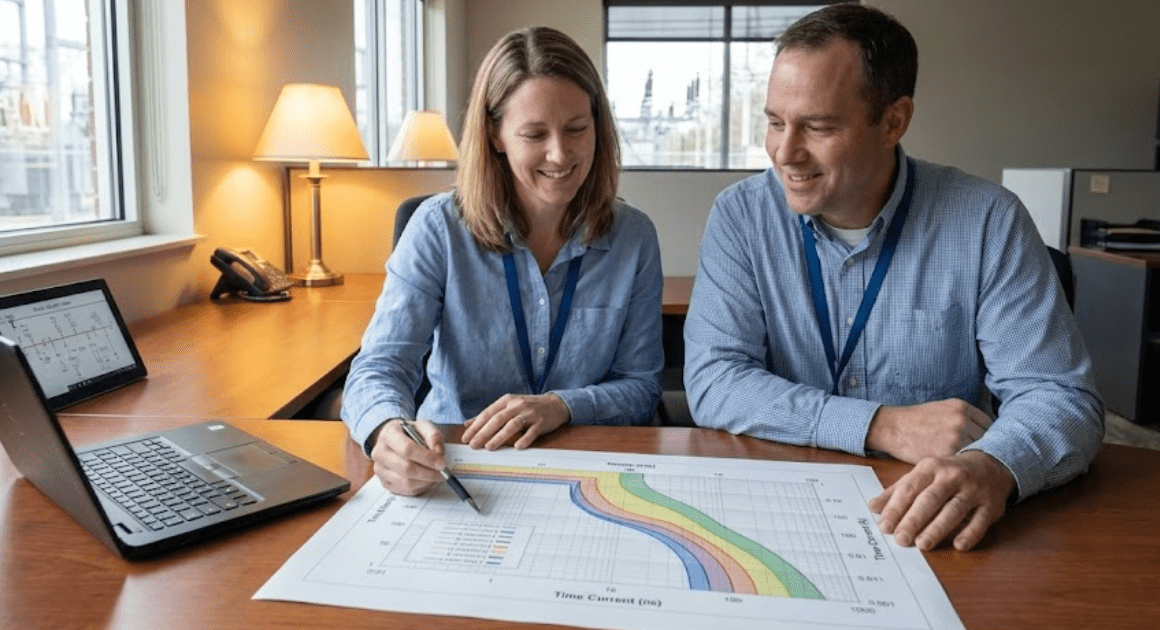

Accurate EMT results improve fault analysis because protection responds to waveform features rather than just RMS values. Relays react to peaks, DC offset, harmonic content, and phase angle shifts. If the replay captures those features, you can test settings changes with confidence. If it does not, you will tune protection to a waveform that never occurred.
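The gap between RMS and waveform features is easy to show numerically. The sketch below builds two synthetic waveforms with the same RMS value but different peaks; the 30% third-harmonic content is an arbitrary illustrative choice, not a figure from any relay or recording.

```python
import math

N = 1000  # samples across exactly one fundamental period
angles = [2.0 * math.pi * k / N for k in range(N)]


def rms(samples):
    """Root-mean-square value over the sampled period."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))


def peak(samples):
    """Largest absolute sample value."""
    return max(abs(x) for x in samples)


# Waveform A: pure fundamental with a 1.0 pu peak.
wave_a = [math.sin(a) for a in angles]

# Waveform B: fundamental plus 30% third harmonic (illustrative), rescaled
# so that its RMS equals that of waveform A.
raw_b = [math.sin(a) - 0.3 * math.sin(3.0 * a) for a in angles]
scale = rms(wave_a) / rms(raw_b)
wave_b = [scale * x for x in raw_b]

print(f"RMS  A: {rms(wave_a):.4f}  B: {rms(wave_b):.4f}")
print(f"peak A: {peak(wave_a):.4f}  B: {peak(wave_b):.4f}")
```

An element that responds to instantaneous peaks would see these two waveforms differently even though an RMS comparison reports a perfect match.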

A feeder relay that misoperated during a temporary fault and reclose is a practical example. The recorder shows fault current, then transformer inrush after reclose, plus a voltage sag that lasted long enough to trip an undervoltage element. An EMT recreation can separate those contributors at the same bus, including converter current limits that deepen the sag for a few cycles. Once timing is clear, you can adjust delays, pickups, or blocking logic in line with the record.

Coordination also depends on consistency across cases. If the model matches one fault record but fails on a second event elsewhere, the topology or the network equivalents are probably wrong. EMT makes that gap obvious because it won’t hide timing errors behind averages. That clarity speeds up root cause work. It also reduces risky “trial and error” tuning.

Event replay quality shapes confidence in post-incident engineering findings

Replay quality shapes what you will believe after an incident, because familiar-looking waveforms feel convincing. A plausible but wrong replay will steer you toward the wrong cause and corrective action. A disciplined replay forces hard questions early, such as breaker status, event time stamps, and controller revision. That discipline turns event recreation into a reliable engineering tool.

A plant trip during a voltage dip shows why. Measured voltage returns, yet the plant stays offline and the operator log shows a latch. A low-detail model can’t latch because internal state logic is missing, so the replay suggests the plant should have stayed online. A precise EMT replay that includes latch and reset conditions will reproduce the lockout and show the threshold crossing that triggered it.
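The latch behavior described above can be sketched as a small state machine. This is a hypothetical undervoltage lockout, not the logic of any specific plant controller: the 0.8 pu threshold, the 150 ms delay, and the class and function names are all assumptions chosen for illustration.

```python
class UndervoltageLatch:
    """Hypothetical lockout: trips after a sustained dip and stays tripped."""

    def __init__(self, threshold_pu, delay_s):
        self.threshold_pu = threshold_pu
        self.delay_s = delay_s
        self.timer_s = 0.0
        self.latched = False

    def step(self, v_pu, dt_s):
        """Advance one time step and return the latch state."""
        if not self.latched:
            if v_pu < self.threshold_pu:
                self.timer_s += dt_s
                self.latched = self.timer_s >= self.delay_s
            else:
                self.timer_s = 0.0   # timer resets when voltage recovers
        return self.latched

    def reset(self):
        """Explicit reset, standing in for an operator or logic reset."""
        self.timer_s = 0.0
        self.latched = False


def replay(dip_duration_s, dt_s=1e-3):
    """Run a 1.0 -> 0.5 -> 1.0 pu voltage profile through the latch."""
    latch = UndervoltageLatch(threshold_pu=0.8, delay_s=0.15)
    steps_dip = int(round(dip_duration_s / dt_s))
    profile = [1.0] * 100 + [0.5] * steps_dip + [1.0] * 200
    state = False
    for v_pu in profile:
        state = latch.step(v_pu, dt_s)
    return state


print(replay(0.20))  # dip outlasts the delay
print(replay(0.10))  # dip clears before the delay expires
```

In the first case the latch stays set even after voltage returns to 1.0 pu. A model without this internal state has no way to reproduce that outcome, however well its voltage trace matches.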

The confidence bar should match the consequence of the finding. If the outcome warrants a retrofit, a settings change, or a compliance filing, the replay must stand up to review. Clear assumptions and repeatable waveform checks make that possible. Strong replay quality shortens debate and keeps focus on fixes.


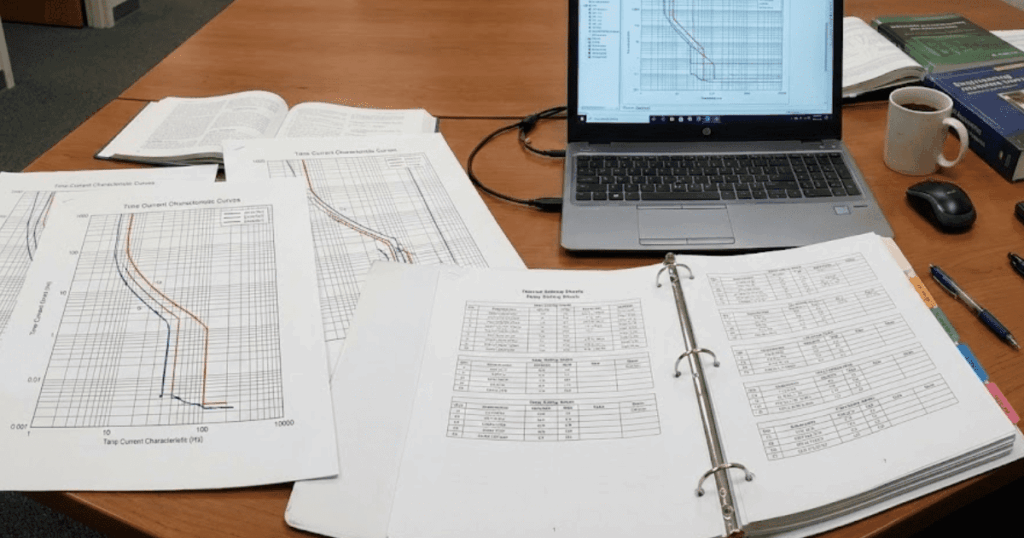

Engineers should prioritize EMT detail based on disturbance study objectives

Better results come from prioritizing EMT detail around the disturbance you need to explain. Start with the signals that must match, then keep explicit models for the devices that shape those signals. Reduce everything else only when the reduction preserves transient response at your observation points. This focus limits model size and keeps run time manageable.

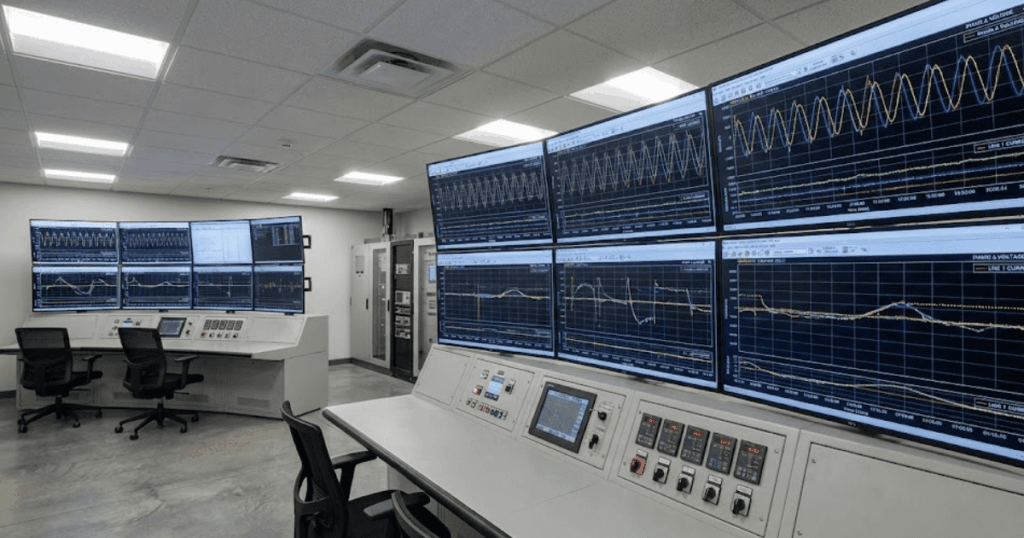

A breaker operation at one bus needs detailed switching and nearby network impedance, not full detail everywhere. A corridor interaction between two converter plants needs detailed controls at both ends and enough network detail to preserve coupling. Teams using SPS SOFTWARE often formalize this workflow: define waveform checks, add detail until checks pass, then stop. That habit keeps modelling effort traceable, and it makes peer review simpler.

| Study objective | Waveform checks to pass | Detail that usually matters |
| --- | --- | --- |
| Relay pickup timing | Early cycles current and voltage | Saturation and DC offset |
| Converter ride through | Current limit and recovery | Control mode switching |
| Switching surge | Peak voltage and ringing | Switch and line detail |
| Fault location | Dip depth and phase shift | Topology and impedance |
| Lockout replay | Threshold crossings | Logic and timers |

Common modelling shortcuts that reduce event recreation fidelity

Event recreation fails most often because small shortcuts stack up until timing no longer matches the record. The plots can still look smooth, so the error hides until pickup or latch behavior shows up in the field and not in the simulation. You avoid most failures by treating each shortcut as a hypothesis with a check. If the check fails, the shortcut goes.

Five shortcuts cause repeat problems in disturbance reproduction:

- Using a time step too large for switching or saturation

- Replacing controls with fixed current sources or gains

- Omitting transformer saturation, inrush, or frequency effects

- Ignoring event timing details such as pole scatter and delays

- Forcing initial conditions that don’t match pre-fault flows

Each shortcut breaks a different part of the replay, and the fix is clear once you see the mismatch. An oversized time step will shift peaks and pickup times. Missing logic will erase latches and resets that operators see in logs. Teams that keep non-negotiable waveform checks will stay honest over time. SPS SOFTWARE fits naturally when you need transparent, editable models you can inspect as carefully as you inspect the recordings.