Key Takeaways

- Lock device data and fault levels before coordination tuning starts.

- Write down the primary and backup intent for each zone so protection timing stays consistent.

- Rerun curves and scenarios after each network or setting change to prevent drift.

Good relay coordination clears faults quickly while healthy loads stay in service. Time-current curves are only as reliable as their inputs, and clear protection intent keeps timing consistent. Most errors come from stale device data, and copied settings add further risk. Curve checks tie results to actual trips, and written notes keep settings defensible.

What defines an effective relay coordination study

An effective relay coordination study shows that the correct device trips first in every operating state you study. Device data and fault levels are verified, time-current curves show the required separation, and notes explain why each pickup and delay was chosen.

Test the approach on a long radial feeder with a midline recloser. End-of-line faults there sit close to pickup and expose curve crossings. Coordination checked at only one fault point can still fail at another, and a setting with no documented reason will likely force a repeat study.

7 ways to improve relay coordination studies

Lock the inputs first, use curves as checks, treat each item as a separate step, and work through the items in order.

| Method | Why it matters |
|---|---|
| Start with verified system data and consistent short circuit assumptions | Relay coordination fails when device data or fault levels are wrong, so validating inputs first prevents false confidence in curve spacing. |
| Define protection objectives before touching time current curves | Clear primary and backup intent gives protection timing a purpose and prevents random or copied settings. |
| Establish clear coordination margins across all protection zones | Consistent time margins account for breaker operation, tolerances, and delays so backup devices still wait when they should. |
| Use time current curves to expose grading conflicts early | Plotting curves across the full fault range reveals miscoordination that numerical checks alone will miss. |
| Tune protection timing from the load outward, not relay by relay | Setting downstream devices first reduces rework and keeps upstream coordination stable as adjustments are made. |
| Validate coordination across normal, contingency, and fault cases | Testing multiple operating states ensures coordination holds when the system configuration changes. |
| Reconfirm coordination after setting changes or network modifications | Any system or setting change can disrupt coordination, so rechecking curves helps prevent gradual protection drift. |

1. Start with verified system data and consistent short circuit assumptions

Verified inputs are the fastest path to sound relay coordination. Confirm CT and PT ratios, breaker types, fuse links, transformer impedances, grounding, and any motor or inverter fault contribution you include. A feeder relay set from a drawing that still shows an old CT ratio will coordinate on screen and trip late on site. Check transformer tap positions and source strength so short-circuit levels match what the yard will see. Keep one fault basis for the tuning run so every time-current curve uses the same fault levels. Record a source and date for each device entry so updates don’t become guesswork. Rerun remote-end faults on long feeders after every model update, because weak faults usually expose curve crossings first.
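To show why source strength and transformer data drive the fault basis, here is a minimal sketch of a bolted three-phase fault estimate on a transformer secondary, optionally carried down a feeder. It assumes a simplified per-unit model: source, transformer, and line impedances are added as magnitudes (same X/R), pre-fault voltage is 1.0 pu, and motor or inverter contribution is ignored. The function name `three_phase_fault_amps` and all example values are hypothetical, not data from any real system.

```python
import math


def three_phase_fault_amps(source_sc_mva, xfmr_mva, xfmr_z_pct, kv_ll, line_ohms=0.0):
    """Approximate bolted three-phase fault current (A) on the transformer secondary.

    Simplifying assumptions: impedances are added as magnitudes (same X/R),
    pre-fault voltage is 1.0 pu, and no motor or inverter contribution is included.
    """
    base_mva = xfmr_mva                      # use the transformer rating as the MVA base
    z_source = base_mva / source_sc_mva      # source impedance in pu from short-circuit MVA
    z_xfmr = xfmr_z_pct / 100.0              # nameplate %Z is already on the transformer base
    z_base_ohms = kv_ll ** 2 / base_mva      # ohms per pu at the secondary voltage
    z_line = line_ohms / z_base_ohms         # feeder impedance from the secondary bus to the fault
    i_base = base_mva * 1e6 / (math.sqrt(3) * kv_ll * 1e3)
    return i_base / (z_source + z_xfmr + z_line)


if __name__ == "__main__":
    # Hypothetical 10 MVA, 8 %Z transformer at 12.47 kV fed from a 500 MVA source.
    close_in = three_phase_fault_amps(500, 10, 8, 12.47)
    remote_end = three_phase_fault_amps(500, 10, 8, 12.47, line_ohms=3.0)
    print(f"close-in: {close_in:.0f} A, remote end: {remote_end:.0f} A")
```

Rerunning the same function with a weaker source or a longer line shows how quickly the minimum fault current falls toward pickup, which is why the remote-end case deserves a rerun after each model update.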

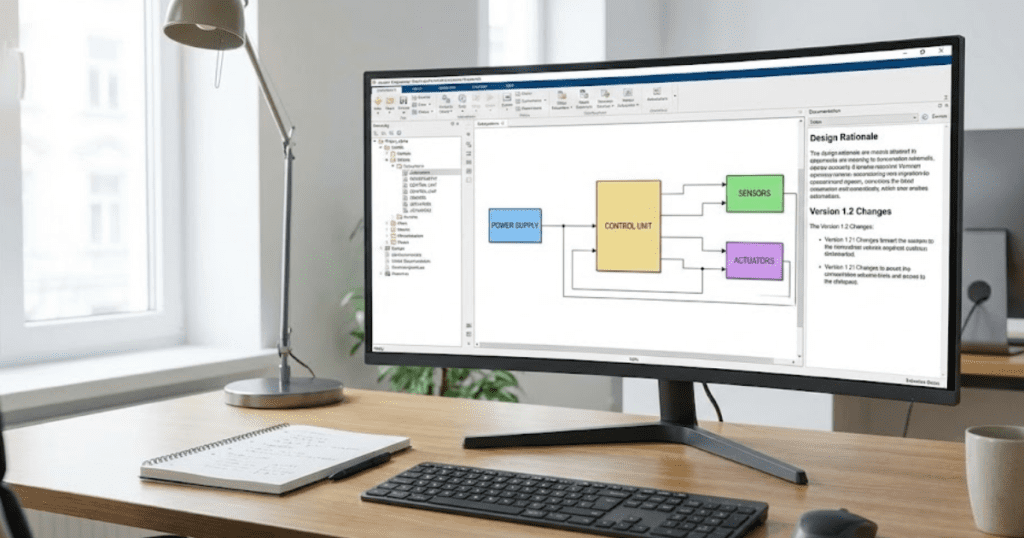

2. Define protection objectives before touching time current curves

Protection timing only makes sense after you state the protection objective. Write down which device must act first for each zone and fault type, and what backup action you accept if the primary fails. A fuse-saving feeder uses a fast reclose shot, while a cable feeder typically avoids reclosing and accepts slower backup. If arc-flash limits matter, note the maximum acceptable clearing time at each bus before tuning. Those choices set pickup, delay, and instantaneous reach. For example, an upstream relay should wait for downstream devices to clear line faults but act quickly for bus faults. Without a written objective, settings get copied and schemes quietly drift. Keep the objective note beside the time-current curves so “faster” requests don’t compromise selectivity.
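One way to keep objectives next to the study is to store them as structured records and check studied clearing times against them. The sketch below is hypothetical: the `ZoneObjective` fields, the `clearing_limit_violations` helper, and the device names are illustrations, not part of any particular tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ZoneObjective:
    zone: str
    fault_type: str                         # e.g. "phase" or "ground"
    primary: str                            # device that must clear first
    backup: str                             # device accepted if the primary fails
    max_clearing_s: Optional[float] = None  # e.g. an arc-flash clearing limit, if one applies
    reclose: bool = False                   # whether a fuse-saving reclose shot is intended


def clearing_limit_violations(objectives, primary_clearing_s):
    """Return (zone, fault_type, time, limit) where the studied primary clearing
    time exceeds the limit written in the objective.

    primary_clearing_s maps (zone, fault_type) -> clearing time in seconds.
    Zones without a limit or without a studied time are skipped.
    """
    violations = []
    for obj in objectives:
        t = primary_clearing_s.get((obj.zone, obj.fault_type))
        if obj.max_clearing_s is not None and t is not None and t > obj.max_clearing_s:
            violations.append((obj.zone, obj.fault_type, t, obj.max_clearing_s))
    return violations


if __name__ == "__main__":
    objectives = [
        ZoneObjective("Bus 1", "phase", "bus_relay", "xfmr_backup_relay", max_clearing_s=0.5),
        ZoneObjective("Lateral 7", "ground", "fuse_7", "recloser_R1", reclose=True),
    ]
    studied = {("Bus 1", "phase"): 0.62, ("Lateral 7", "ground"): 0.30}
    print(clearing_limit_violations(objectives, studied))
```

Because the limit lives in the objective rather than in someone's memory, a request for a "faster" setting can be checked against the same record.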


3. Establish clear coordination margins across all protection zones

Coordination margins turn “curves don’t touch” into “backup still waits in service.” Build in room for breaker opening time, fuse-clearing spread, relay tolerances, CT saturation, and any logic delay you add. Don’t forget breaker failure timers, since they add delay to backup clearing even when the curves look clean. A lateral fuse with a wide band between its minimum-melt and total-clearing curves needs more spacing than a digital relay with tight timing. A recloser fast shot can erase margin where it operates in the same current range as the fuse. Pick one margin rule and apply it across all zones so you don’t end up with one-off exceptions. More margin reduces nuisance trips, but it slows backup clearing and raises fault energy when the primary fails.
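A single margin rule can be written once and reused for every primary/backup pair. This is a minimal sketch assuming the margin is the sum of a few components; the `MarginRule` and `margin_ok` names are hypothetical, and the numbers in the example are placeholders, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MarginRule:
    """One margin rule applied to every primary/backup pair in the study."""
    breaker_open_s: float     # interrupting time of the primary's breaker
    relay_tolerance_s: float  # relay timing error and overtravel allowance
    safety_s: float           # extra allowance, e.g. CT error or added logic delay

    @property
    def required_s(self) -> float:
        return self.breaker_open_s + self.relay_tolerance_s + self.safety_s


def margin_ok(primary_time_s: float, backup_time_s: float, rule: MarginRule) -> bool:
    """True when the backup still waits at least the required margin after the primary operates."""
    return backup_time_s - primary_time_s >= rule.required_s


if __name__ == "__main__":
    # Placeholder values only; use your own breaker, relay, and utility criteria.
    rule = MarginRule(breaker_open_s=0.083, relay_tolerance_s=0.05, safety_s=0.10)
    print(rule.required_s, margin_ok(0.30, 0.60, rule), margin_ok(0.30, 0.50, rule))
```

Freezing the rule and passing the same instance everywhere makes a one-off exception visible: it has to be a different rule object, which is easy to spot in review.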

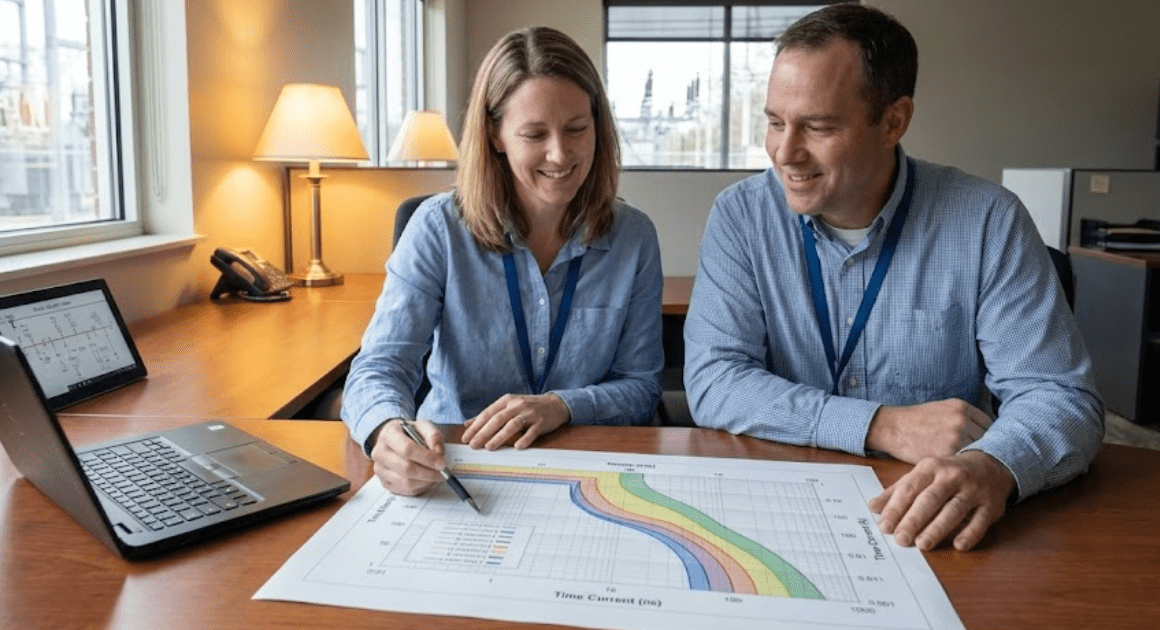

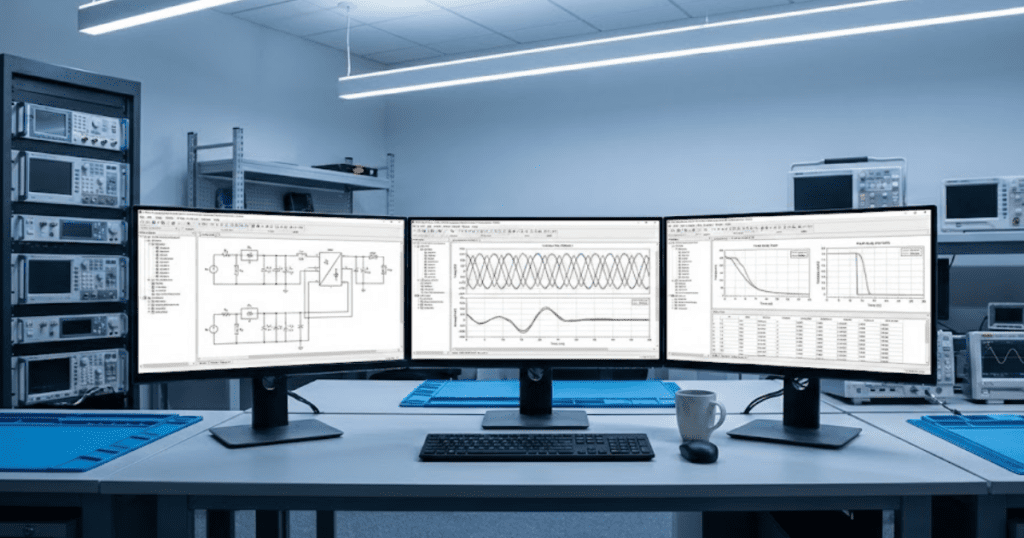

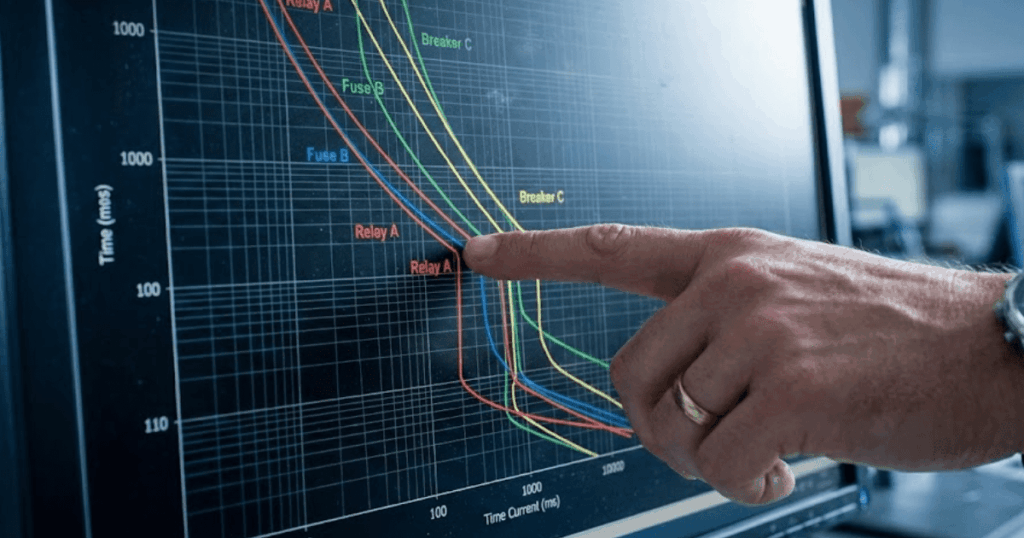

4. Use time current curves to expose grading conflicts early

Time-current curves are most valuable when used to expose grading conflicts early. Overlay each primary device with its backup and scan the full current range, including the minimum fault current near the end of the feeder. A transformer fault can land between pickup and instantaneous and hide a crossing unless you plot that case. Curve crossings near pickup are common on long feeders and with high-impedance faults, so don’t stop at high-current points. An upstream instantaneous element set too low can overreach and trip ahead of downstream devices for close-in faults in their zones. Mark the currents where coordination must hold so your review stays consistent. When a conflict appears, fix its cause first, such as pickup, delay, or instantaneous reach, before you spread changes everywhere.
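The full-range scan can be sketched for two relays, assuming both use the IEC standard inverse characteristic, t = TMS × 0.14 / ((I/Is)^0.02 − 1), with an optional definite-time instantaneous element. The helper names, pickups, TMS values, and the 0.2 s margin are hypothetical; fuse curves or other relay characteristics would need their own time functions.

```python
def iec_standard_inverse(current_a, pickup_a, tms):
    """IEC standard inverse operate time (s): t = TMS * 0.14 / ((I/Is)^0.02 - 1)."""
    m = current_a / pickup_a
    if m <= 1.0:
        return None  # no operation at or below pickup
    return tms * 0.14 / (m ** 0.02 - 1.0)


def relay(pickup_a, tms, inst_pickup_a=None, inst_time_s=0.05):
    """Build an operate-time function with an optional instantaneous element."""
    def operate_time(current_a):
        times = []
        t = iec_standard_inverse(current_a, pickup_a, tms)
        if t is not None:
            times.append(t)
        if inst_pickup_a is not None and current_a >= inst_pickup_a:
            times.append(inst_time_s)
        return min(times) if times else None  # fastest element wins
    return operate_time


def grading_conflicts(primary, backup, i_min_a, i_max_a, margin_s, points=200):
    """Scan log-spaced currents; return (current, t_primary, t_backup) where the margin fails."""
    conflicts = []
    for k in range(points):
        i = i_min_a * (i_max_a / i_min_a) ** (k / (points - 1))
        t_p, t_b = primary(i), backup(i)
        # Skip currents where either device does not operate. A primary that cannot
        # see the minimum fault is a sensitivity problem to review separately.
        if t_p is None or t_b is None:
            continue
        if t_b - t_p < margin_s:
            conflicts.append((i, t_p, t_b))
    return conflicts


if __name__ == "__main__":
    feeder = relay(pickup_a=200, tms=0.1)
    upstream = relay(pickup_a=400, tms=0.2, inst_pickup_a=3000)
    for i, t_p, t_b in grading_conflicts(feeder, upstream, 500, 10000, margin_s=0.2)[:3]:
        print(f"{i:7.0f} A: primary {t_p:.3f} s, backup {t_b:.3f} s")
```

In this example the inverse-time elements keep their spacing across the range, and the conflicts start where the upstream instantaneous element picks up, which points the fix at instantaneous reach rather than at every setting.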

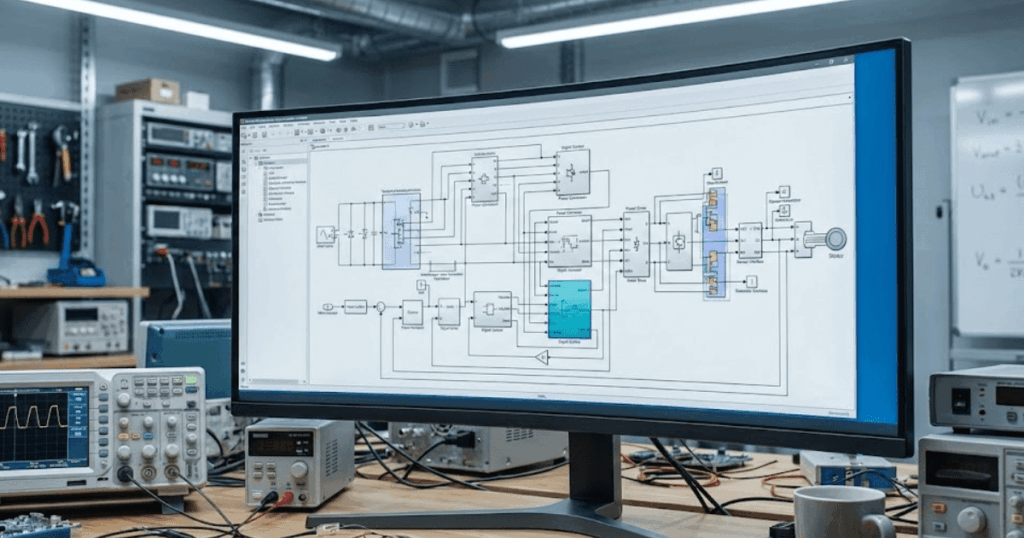

5. Tune protection timing from the load outward, not relay by relay

The cleanest tuning flow runs from the load outward. Set laterals and branch devices first, then the midline recloser or sectionalizer, then the feeder relay, and finish with upstream backup. A radial feeder often needs lateral fuses to clear single-phase faults while the main recloser clears temporary faults on the trunk. Starting upstream forces you to revisit every downstream curve after each tweak. Downstream pickup must ride through load pickup and transformer energization inrush, or nuisance trips will dominate your tuning time. Cold load pickup after an outage can also look like a fault, so check it before you tighten any pickup. Once downstream settings stabilize, upstream edits become small and the coordination picture stays readable.
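For a radial feeder, the load-outward order can be derived from the topology instead of kept by hand. This sketch assumes a hypothetical map from each device to the device directly upstream of it and sorts by depth from the source, deepest first; a post-order tree traversal would work equally well.

```python
def setting_order(upstream_of):
    """Order devices from the load outward: deepest (most downstream) first.

    upstream_of maps each device to the device directly upstream of it,
    or None for the device nearest the source. Assumes a radial, acyclic topology.
    """
    def depth(device):
        d = 0
        while upstream_of[device] is not None:
            device = upstream_of[device]
            d += 1
        return d

    # sorted() is stable, so devices at the same depth keep their input order.
    return sorted(upstream_of, key=depth, reverse=True)


if __name__ == "__main__":
    upstream_of = {
        "fuse_L1": "recloser_R1",
        "fuse_L2": "recloser_R1",
        "recloser_R1": "feeder_relay",
        "fuse_L3": "feeder_relay",
        "feeder_relay": "xfmr_backup_relay",
        "xfmr_backup_relay": None,
    }
    print(setting_order(upstream_of))
```

Every device lands before the device that backs it up, so each upstream setting is chosen against downstream curves that are already fixed.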

6. Validate coordination across normal, contingency, and fault cases

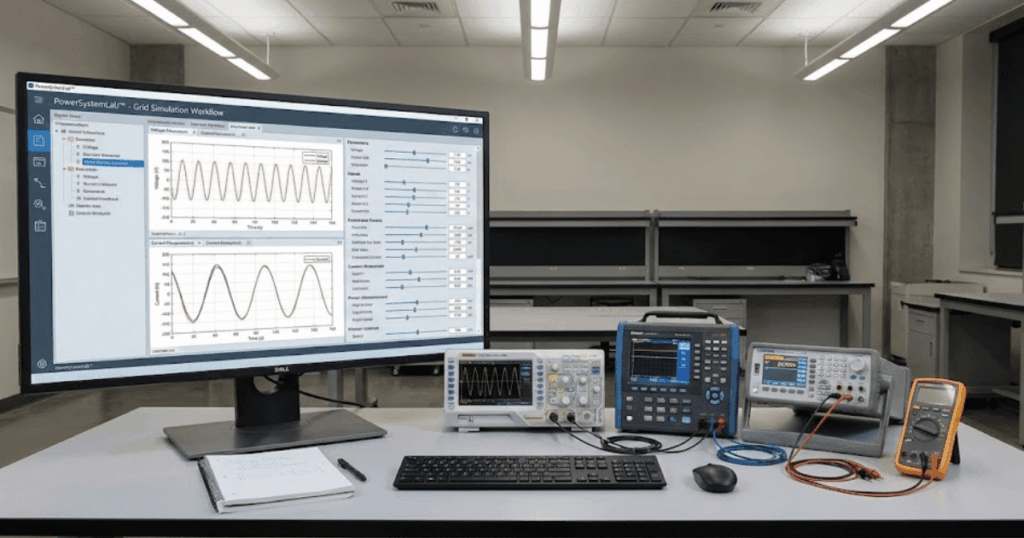

A study that only checks the normal one-line can miss the states that break coordination. Test feeder ties open and closed, a transformer out of service, minimum and maximum source strength, and generation connected and disconnected. A tie closure can reduce the fault current seen by a downstream device and push it into a slower part of its curve. A generator can reverse current flow and trip a non-directional element for an upstream fault. Run one weak-fault case and one close-in case so you see both pickup timing and instantaneous reach. Keep the scenario set short but strict, and rerun it after every tuning change. SPS SOFTWARE helps when you need physics-based network behavior and editable protection logic in the same workspace.
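The states listed above form a small scenario matrix that can be generated and rerun mechanically. In this sketch the axis names are hypothetical, and `check_case` stands in for whatever study run you use to decide whether coordination holds in one scenario; the example check is a fake rule, not a real result.

```python
from itertools import product

# Hypothetical scenario axes taken from the operating states listed above.
SCENARIO_AXES = {
    "tie": ("open", "closed"),
    "transformer": ("in_service", "out_of_service"),
    "source": ("minimum", "maximum"),
    "generation": ("connected", "disconnected"),
    "fault": ("remote_weak", "close_in"),
}


def build_scenarios(axes):
    """Return every combination of the axis values as a list of dicts."""
    names = list(axes)
    return [dict(zip(names, combo)) for combo in product(*(axes[n] for n in names))]


def failing_scenarios(scenarios, check_case):
    """Run check_case(scenario) -> bool on each scenario and return the failures."""
    return [s for s in scenarios if not check_case(s)]


if __name__ == "__main__":
    scenarios = build_scenarios(SCENARIO_AXES)

    # Stand-in for a real study run: pretend coordination fails whenever the
    # tie is closed and generation is connected.
    def check_case(s):
        return not (s["tie"] == "closed" and s["generation"] == "connected")

    failures = failing_scenarios(scenarios, check_case)
    print(len(scenarios), len(failures))
```

Five two-state axes give 32 cases, which stays short enough to rerun after every tuning change while still covering each combination.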

7. Reconfirm coordination after setting changes or network modifications

Coordination can drift after any change, even when relay settings stay the same. A new cable, a feeder extension, grounding changes, added capacitance, or a different breaker model can shift fault levels and clearing times. A feeder extension often lowers the minimum fault current, so end-of-line faults sit closer to pickup and expose curve crossings. A quick setting tweak to stop a nuisance trip can remove spacing you relied on for backup. Keep the previous setting file and curve set so you can roll back if a field test reveals a new problem. Treat updates as controlled changes and record the reason, the affected devices, and the fault cases rerun. Replot the time-current curves after each modification so you can see what moved.
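Comparing the previous and current records is one way to list which devices need their curves replotted. This is a minimal sketch under stated assumptions: settings and minimum fault currents are stored per device in plain dictionaries, and the function name and the 5 % tolerance are hypothetical choices, not a standard criterion.

```python
def devices_to_recheck(old_settings, new_settings, old_fault_a, new_fault_a, tolerance=0.05):
    """List devices whose settings changed or whose minimum fault current moved
    by more than `tolerance` (as a fraction of the old value).

    Settings map device -> dict of setting values; fault maps device -> amps.
    """
    flagged = set()
    for device in set(old_settings) | set(new_settings):
        if old_settings.get(device) != new_settings.get(device):
            flagged.add(device)
    for device in set(old_fault_a) | set(new_fault_a):
        old, new = old_fault_a.get(device), new_fault_a.get(device)
        if old is None or new is None:
            flagged.add(device)  # device added to or removed from the model
        elif abs(new - old) > tolerance * old:
            flagged.add(device)
    return sorted(flagged)


if __name__ == "__main__":
    old_settings = {"R1": {"pickup_a": 200}, "F1": {"pickup_a": 400}}
    new_settings = {"R1": {"pickup_a": 240}, "F1": {"pickup_a": 400}}
    old_fault = {"R1": 1500, "F1": 3000}
    new_fault = {"R1": 1500, "F1": 2700, "fuse_9": 900}  # e.g. after a feeder extension
    print(devices_to_recheck(old_settings, new_settings, old_fault, new_fault))
```

The flagged list doubles as the "affected devices" entry in the change record, and the old dictionaries are the rollback point.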

Applying these methods to new studies and existing protection schemes

Applying these methods works best when you treat relay coordination as a controlled engineering process rather than a one-time calculation. New studies benefit from a clean sequence where data validation, protection intent, margins, and tuning order are fixed before any curves are adjusted. That structure prevents early choices from forcing compromises later and keeps coordination defensible during reviews.

Existing schemes require more discipline because history works against you. Legacy settings often reflect past outages, rushed fixes, or copied logic from similar feeders. Start by rebuilding the coordination logic using current system data rather than trusting inherited curves. Plot fresh time current curves and compare them against actual operating scenarios, not just the conditions assumed when the settings were first applied.


Documentation matters as much as settings. Each pickup, delay, and instantaneous choice should tie back to a protection objective and a verified fault case. When system changes occur, that record makes it clear what must be rechecked and what can remain untouched. Teams using SPS SOFTWARE often keep models, assumptions, and curves linked, which shortens reassessment cycles and reduces debate during approvals.
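The rule that each setting ties back to an objective and a verified fault case can be checked automatically. The sketch below assumes a hypothetical `SettingRecord` with objective and fault-case identifiers; the IDs and device names are illustrations only.

```python
from dataclasses import dataclass


@dataclass
class SettingRecord:
    device: str
    parameter: str           # e.g. "pickup_a", "time_dial", "instantaneous_a"
    value: float
    objective_id: str = ""   # link to the protection objective this setting serves
    fault_case_id: str = ""  # link to the verified fault case that justifies it


def undocumented(records, known_objectives, known_fault_cases):
    """Return settings that do not tie back to a known objective and fault case."""
    return [
        r for r in records
        if r.objective_id not in known_objectives or r.fault_case_id not in known_fault_cases
    ]


if __name__ == "__main__":
    records = [
        SettingRecord("R1", "pickup_a", 240, "OBJ-3", "FC-12"),
        SettingRecord("R1", "time_dial", 0.3, "OBJ-3"),          # no fault case recorded
        SettingRecord("F1", "pickup_a", 400, "OBJ-9", "FC-12"),  # objective not on file
    ]
    for r in undocumented(records, {"OBJ-3"}, {"FC-12"}):
        print(r.device, r.parameter)
```

When a system change touches a fault case, filtering the records by `fault_case_id` shows which settings must be rechecked and which can stay.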

Over time, disciplined execution shapes outcomes. Coordination schemes that remain stable do so because engineers repeatedly apply the same checks, not because the system stays simple.