Key Takeaways

- Use simulation as a lab method where students predict, validate, and explain system behaviour, not as a plot generator.

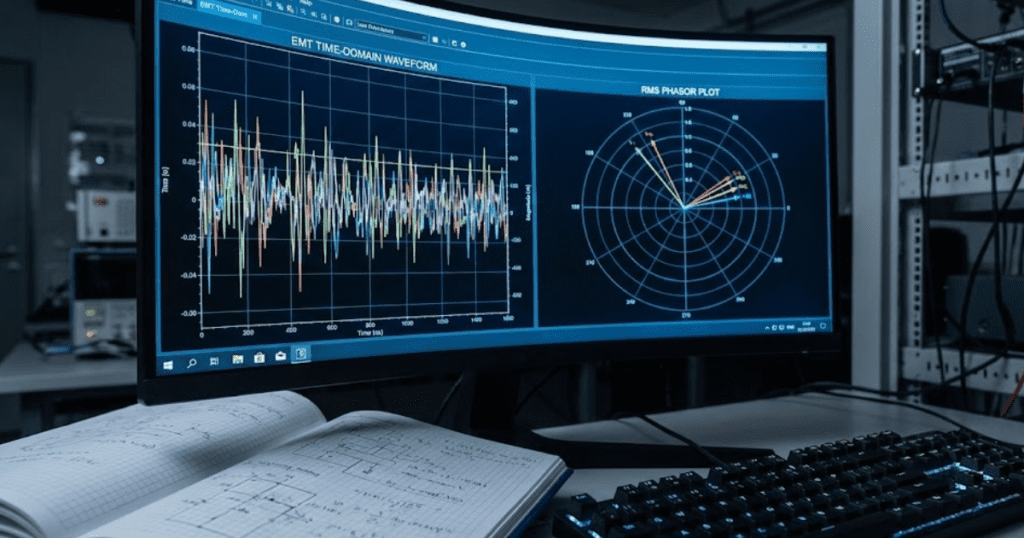

- Select EMT or RMS simulation based on the question and time scale, then require students to state what that model detail cannot represent.

- Keep models physics-based and transparent, and grade validation checks plus reporting quality so results stay defensible and transferable.

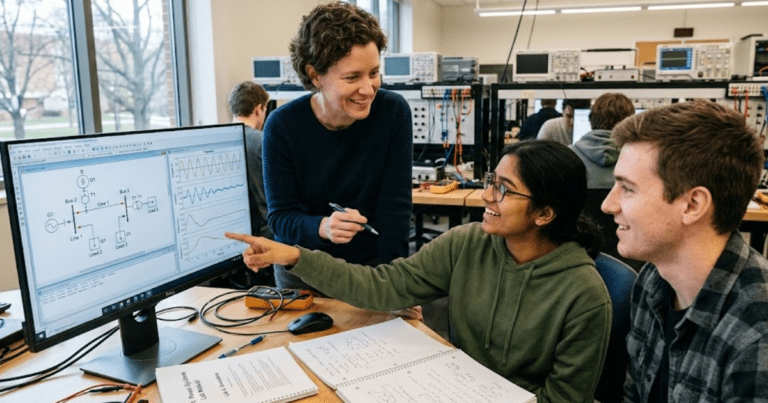

Students learn faster when they must predict, test, and explain results, not just watch a lecture or copy a schematic. A large meta-analysis of 225 STEM studies found that active learning raised exam scores by about 6% and that students in traditional lecture courses were about 1.5 times more likely to fail. Simulation fits that pattern when you use it as a structured lab, with checks, limits, and clear reporting. Used as a black box, it does the opposite and trains students to trust plots they cannot defend.

The most effective simulation teaching uses disciplined, physics-based models plus validation habits that students repeat until they become automatic. You’re not trying to replace hardware labs or textbook math. You’re building the missing bridge between them, so learners can reason from assumptions to waveforms, and from waveforms back to engineering choices with confidence.

“Simulation models help students link equations to power system behaviour they can test safely.”

Define what simulation models teach in power system courses

Simulation models teach cause and effect across an electrical network, not just component equations in isolation. Students learn how voltage, current, and power move through a system after a change such as a fault, a switching event, or a control action. The lesson is always conditional on assumptions, so modelling becomes a way to think clearly about limits.

Start by naming the learning target in plain language, then map it to what students must observe. If the target is “fault current depends on network impedance,” the observation is a current waveform and an impedance path, not a completed diagram. If the target is “protection needs selectivity,” the observation is timing and coordination, not a pass or fail result. That framing keeps simulation from becoming a button-click exercise.

Simulation also teaches students what not to assume. Ideal sources, perfect measurements, and lossless components produce clean plots that look correct but teach the wrong instincts. Good course design forces students to track parameter choices, initial conditions, and solver settings, then explain how those choices shape behaviour. That habit pays off later when they face messy field data and conflicting requirements.

Choose EMT and RMS simulation based on learning goals

The main difference between EMT and RMS simulation is the time detail each one keeps, and that detail decides what you can teach. EMT (electromagnetic transient) simulation resolves instantaneous waveforms, including fast transients and switching effects, so it suits converters, harmonics, and protection waveforms. RMS (phasor-domain) simulation represents voltages and currents as fundamental-frequency phasors and averages out the fast dynamics, so it suits load flow, voltage control, and stability studies across longer time windows.
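The contrast can be made concrete with a series R-L branch energised at a voltage zero crossing. The sketch below is illustrative only: the circuit values and function names are assumptions, not taken from any course model. It compares the steady-state amplitude a phasor calculation reports with the peak of the instantaneous current, which carries a decaying DC offset that the phasor view does not show.

```python
import math

def phasor_peak(v_peak, r, l, freq):
    """Steady-state current amplitude from the phasor (RMS-style) view."""
    z = math.hypot(r, 2 * math.pi * freq * l)
    return v_peak / z

def emt_current(t, v_peak, r, l, freq, theta):
    """Instantaneous current after closing onto v(t) = v_peak*sin(w*t + theta) at t = 0."""
    w = 2 * math.pi * freq
    z = math.hypot(r, w * l)
    phi = math.atan2(w * l, r)          # impedance angle
    tau = l / r                         # decay time constant of the DC offset
    steady = math.sin(w * t + theta - phi)
    dc_offset = -math.sin(theta - phi) * math.exp(-t / tau)
    return (v_peak / z) * (steady + dc_offset)

# Hypothetical values chosen for illustration (high X/R ratio)
V_PEAK, R, L, FREQ = 1.0, 0.1, 0.01, 50.0
THETA = 0.0  # switch closes at a voltage zero crossing

i_phasor = phasor_peak(V_PEAK, R, L, FREQ)
samples = [emt_current(k * 1e-4, V_PEAK, R, L, FREQ, THETA) for k in range(2000)]
i_emt_peak = max(abs(i) for i in samples)

print(f"phasor amplitude: {i_phasor:.3f}")
print(f"EMT first-cycle peak: {i_emt_peak:.3f}")
print(f"ratio: {i_emt_peak / i_phasor:.2f}")
```

Asking students to predict the ratio before running it, then to explain why it changes with the closing angle `THETA`, turns the difference between the two model types into something they can test.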

Use RMS when the lesson is about system-level relationships and you need fast runs for many cases, such as parameter sweeps or contingency studies. Use EMT when the lesson depends on waveform shape, switching instants, or control interactions that vanish in a phasor model. Power system curricula must now treat power electronics as normal grid equipment, not a special topic, since wind and solar produced 13% of global electricity in 2023. That share shows up in control behaviour and fault response, which pushes many teaching labs toward EMT at least some of the time.

Match fidelity to the question you’re asking, then make that match visible to students. When learners can say “RMS hides switching ripple, so I should not interpret this as a harmonic result,” they’ve learned something that transfers. When they cannot, they will misread a plot with total confidence, which is the failure mode to design against.

| What you want students to understand | Model detail that usually fits the task |
| --- | --- |
| How voltage setpoints and reactive power targets affect a feeder | RMS studies with steady-state or slow control dynamics keep runs fast |
| Why a converter trips during a disturbance despite “normal” power flow | EMT waveform detail captures current limits, control saturation, and switching effects |
| How protection coordination depends on timing and measurement filtering | EMT supports relay inputs and transient behaviour that phasors can hide |
| How operating points shift across many contingencies | RMS lets you run many cases and compare patterns without long runtimes |
| What modelling assumptions change the answer the most | Either approach works if students must justify assumptions and validate outputs |

Plan simulation-based labs that build skills in stages

Simulation labs work best when each lab adds one new modelling skill while keeping the rest familiar. Students need repetition in setup, checking, and reporting, then a controlled increase in complexity. That pacing reduces copy-and-paste work and makes it clear what concept is being tested. The goal is steady competence, not a single impressive capstone run.

Structure each lab around the same workflow so students build habits, then swap the technical content. A simple template keeps attention on the engineering rather than on interface details. A staged plan also makes grading more consistent because artefacts look similar across groups. Use a single lab handout format that always asks for the same five deliverables.

- A one-sentence statement of the system question being tested

- A diagram showing what is modelled and what is omitted

- A short table of key parameters students are allowed to change

- Two validation checks tied to hand calculations or known limits

- A final explanation that connects waveforms to the original question

Staging also protects learning time. Early labs should run quickly and fail predictably when something is wrong, so students can debug with logic rather than guesswork. Later labs can add larger networks, more controls, and more edge cases once students can explain why the earlier models behaved the way they did.


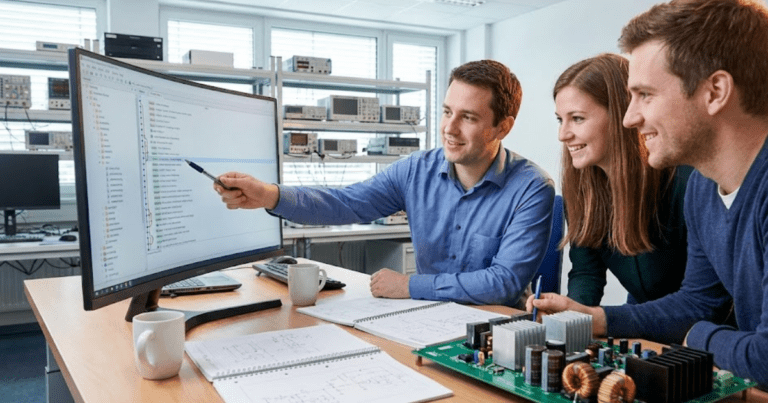

Build physics-based component models students can inspect and change

Students learn modelling when they can see what a component assumes and can change its parameters without breaking the system. Physics-based components, with transparent equations and clear parameter meaning, turn a simulation into a teachable object. The model becomes a set of claims that students can test, not a sealed artefact that produces plots.

Start with parameter sets that map directly to course concepts, such as R, L, and C values, transformer percent impedance, or controller gains with units. Keep names consistent across labs, and require students to state where each value came from, even if it is provided. Ask learners to identify one parameter that affects magnitude, one that affects timing, and one that affects stability, then confirm each with a sensitivity run. That keeps attention on physical meaning instead of on interface clicks.
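One way to picture such a sensitivity run is a series RLC branch, where closed-form metrics stand in for the three kinds of effect: surge impedance for magnitude, natural frequency for timing, and damping ratio for stability. The following is a minimal sketch under those assumptions. The parameter values and function names are hypothetical, and a real lab would read the metrics from simulated waveforms instead of formulas.

```python
import math

def rlc_metrics(r, l, c):
    """Closed-form metrics for a series RLC branch."""
    return {
        "surge_impedance_ohm": math.sqrt(l / c),                    # sets transient current magnitude
        "natural_freq_hz": 1 / (2 * math.pi * math.sqrt(l * c)),    # sets oscillation timing
        "damping_ratio": (r / 2) * math.sqrt(c / l),                # sets how quickly ringing decays
    }

def sensitivity(base, step=0.10):
    """Percent change in each metric when one parameter at a time is raised by `step`."""
    ref = rlc_metrics(**base)
    table = {}
    for name in base:
        bumped = dict(base, **{name: base[name] * (1 + step)})
        new = rlc_metrics(**bumped)
        table[name] = {k: 100 * (new[k] - ref[k]) / ref[k] for k in ref}
    return table

# Hypothetical parameter set for illustration (ohms, henries, farads)
BASE = {"r": 2.0, "l": 10e-3, "c": 50e-6}
result = sensitivity(BASE)

for param, changes in result.items():
    row = ", ".join(f"{metric}: {pct:+.1f}%" for metric, pct in changes.items())
    print(f"+10% {param} -> {row}")
```

In this simplified case, resistance moves only the damping ratio, while inductance and capacitance move all three metrics. That gives students a specific claim to confirm or refute against their own simulation runs.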

SPS SOFTWARE supports this style of teaching through open, editable component models and workflows that can align with MATLAB/Simulink model-based design. That matters most when you want students to inspect internals, change assumptions, and defend results line by line. Tool choice still matters less than transparency and discipline, so insist on models your students can read and reason about.

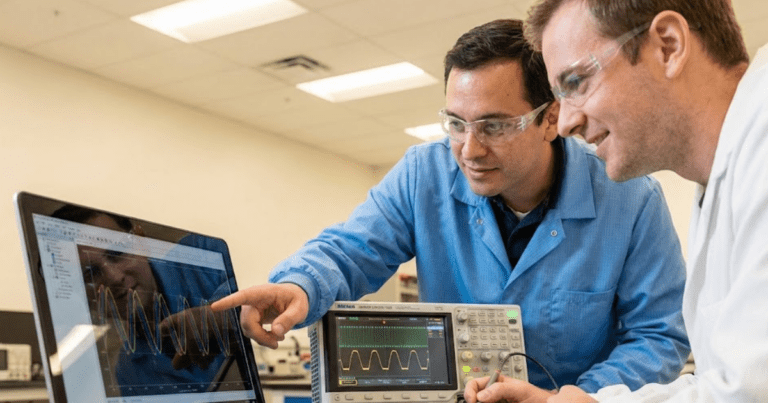

Teach power system behaviour using fault and switching studies

Fault and switching studies teach system behaviour because they expose network limits quickly and visibly. Students see how impedance paths set current, how voltage sags propagate, and how protection and controls interact. These studies also force attention to initial conditions and timing, which are the first places where modelling errors show up. Done well, they convert “rules of thumb” into observable cause and effect.

A concrete lab can use a simple medium-voltage feeder with a source, a transformer, a line, a load, and one breaker. Set an initial steady operating point, apply a single line-to-ground fault at the far end, then clear it with a breaker trip after a set delay. Students compare bus voltages, fault current peak, and energy in inductive elements before and after clearing, then repeat with a different fault resistance and a different trip delay. That single scenario teaches network impedance, protection timing, and transient recovery in one controlled setup.
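Before students run this scenario, a hand estimate gives them a number to compare against. The sketch below uses the standard sequence-network result for a single line-to-ground fault, I_f = 3E / (Z1 + Z2 + Z0 + 3Z_f), in per unit. The sequence impedances and fault resistances are hypothetical values chosen for illustration, not data from a specific feeder.

```python
import cmath
import math

def slg_fault_current_pu(z1, z2, z0, z_fault=0j, e_prefault=1.0 + 0j):
    """Faulted-phase current for a single line-to-ground fault, in per unit.

    z1, z2, z0 are the positive-, negative-, and zero-sequence impedances
    seen from the fault point; z_fault is the fault impedance.
    """
    return 3 * e_prefault / (z1 + z2 + z0 + 3 * z_fault)

# Hypothetical sequence impedances at the far end of the feeder (per unit)
Z1 = 0.02 + 0.20j
Z2 = 0.02 + 0.20j
Z0 = 0.05 + 0.60j

for r_fault in (0.0, 0.1, 0.5):
    i_f = slg_fault_current_pu(Z1, Z2, Z0, z_fault=complex(r_fault, 0.0))
    angle_deg = math.degrees(cmath.phase(i_f))
    print(f"Rf = {r_fault:.1f} pu -> |If| = {abs(i_f):.2f} pu at {angle_deg:.1f} deg")
```

The estimate covers only the steady-state fundamental component. Any gap between it and the simulated first-cycle peak is itself a discussion point about DC offset and model detail.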

Keep the teaching focus on interpretation, not on the drama of the waveform. Require students to identify which elements carried the fault current and which ones limited it, using the network diagram and parameter values. Require a short explanation of what would change if the network were weaker or if the load were more inductive, without adding new cases. That approach teaches reasoning, and it keeps the lab within a manageable scope.

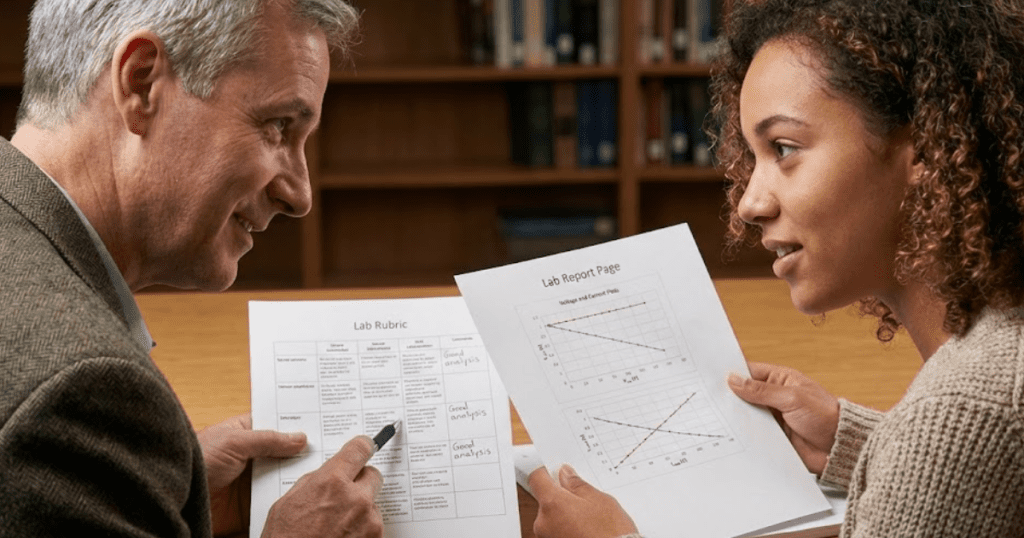

Assess student learning with model validation and reporting rubrics

Assessment should reward correct reasoning and validation, not just a working simulation file. A strong rubric checks whether students can confirm units, sanity-check magnitudes, and explain discrepancies between expected and simulated results. That pushes learners to treat simulation outputs as hypotheses that need testing. It also reduces grading noise, since you can score the logic even when minor setup differences exist.

Validation is easiest to teach as a small set of repeatable checks. Require one check before running dynamics, such as confirming power balance at the operating point or matching a hand-calculated short-circuit estimate within a defined tolerance. Require one check after the run, such as verifying that the breaker operation produces the expected current interruption pattern and that the model returns to a plausible steady state. Make students write each check as a statement they could apply again, not as a one-off calculation.
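Written as reusable statements, those checks might look like the sketch below. The function names, tolerances, and sample numbers are all assumptions chosen for illustration. The aim is to show each check as something students can call again on the next lab, not to prescribe thresholds.

```python
import math

def check_power_balance(p_gen_mw, p_load_mw, p_loss_mw, tol_mw=0.01):
    """Pre-run check: generation should equal load plus losses at the operating point."""
    mismatch = p_gen_mw - (p_load_mw + p_loss_mw)
    return abs(mismatch) <= tol_mw, mismatch

def check_against_hand_calc(simulated, hand_calc, rel_tol=0.05):
    """Pre-run check: a simulated quantity should match its hand estimate within rel_tol."""
    error = abs(simulated - hand_calc) / abs(hand_calc)
    return error <= rel_tol, error

def check_returns_to_steady_state(samples, expected, rel_tol=0.02, tail=50):
    """Post-run check: the last `tail` samples should sit close to the expected value."""
    window = samples[-tail:]
    return all(abs(x - expected) <= rel_tol * abs(expected) for x in window)

# Hypothetical numbers standing in for values read from a model
ok_balance, mismatch = check_power_balance(10.32, 10.0, 0.32)
ok_fault, fault_error = check_against_hand_calc(simulated=2.91, hand_calc=2.99)

# Synthetic post-clearing voltage: a decaying oscillation around 1.0 pu
voltage = [1.0 + 0.3 * math.exp(-k / 100) * math.cos(k / 5) for k in range(1000)]
ok_recovery = check_returns_to_steady_state(voltage, expected=1.0)

print(f"power balance ok: {ok_balance} (mismatch {mismatch:+.3f} MW)")
print(f"fault current ok: {ok_fault} (error {fault_error:.1%})")
print(f"recovery ok: {ok_recovery}")
```

Returning the measured mismatch alongside the pass or fail flag lets students report how close a check came, which is more useful in a write-up than a bare pass.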

Reporting rubrics should also enforce traceability. Students should record solver settings, timestep choices, and key model assumptions in plain language. Marks should go to clear plots with labelled axes, a short explanation of why the plot answers the original system question, and a note about one limitation of the model. That combination builds engineers who can defend results under review, not students who can only reproduce a screenshot.
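A lightweight way to make that traceability routine is a small run record saved next to each set of plots. The sketch below is one possible layout. The field names and example values are hypothetical, and nothing here depends on a particular simulation tool.

```python
import json
from dataclasses import dataclass, field, asdict

@dataclass
class RunRecord:
    """Plain-language record of how a simulation result was produced."""
    question: str                 # the system question the run answers
    model_type: str               # "EMT" or "RMS"
    solver: str                   # solver or integration method as named in the tool
    timestep_s: float             # fixed timestep, in seconds
    assumptions: list = field(default_factory=list)
    limitation: str = ""          # one thing the model cannot represent

    def missing_fields(self):
        """Names of fields left empty, so a rubric can flag incomplete records."""
        return [name for name, value in asdict(self).items() if value in ("", [], None)]

# Hypothetical example entry
record = RunRecord(
    question="How does fault resistance change the far-end fault current?",
    model_type="EMT",
    solver="trapezoidal, fixed step",
    timestep_s=50e-6,
    assumptions=["source modelled as ideal voltage behind impedance", "constant-impedance load"],
    limitation="no transformer saturation",
)

print(json.dumps(asdict(record), indent=2))
print("missing:", record.missing_fields())
```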

Avoid common mistakes that make simulation results misleading

Misleading simulation results usually come from hidden assumptions, weak validation, and overconfident interpretation. Students will trust a clean waveform even when the model is wrong, so teaching must put friction on that impulse. The fix is procedural: force explicit assumptions, demand basic checks, and grade explanations as hard as plots. Over time, that discipline becomes part of how students think.

Watch for a few predictable failure modes. Ideal sources and missing losses can produce unrealistically stiff behaviour, so require students to justify source impedance and load models. Poor initial conditions can fake a transient that looks like a fault response, so require an operating point check before any event. Solver settings can hide oscillations or create false ones, so require students to state timestep and tolerance choices and to rerun one case with tighter settings as a confidence check.
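The rerun-with-tighter-settings check can be sketched on a toy case. The example below integrates a series R-L energisation with the trapezoidal rule, an integration method widely used in EMT-type programs, then halves the timestep and compares the peak current. The circuit values, tolerance, and function names are assumptions for illustration only.

```python
import math

def simulate_rl_peak(dt, t_end=0.1, v_peak=1.0, r=0.1, l=0.01, freq=50.0):
    """Peak current of a series R-L branch energised at t = 0, using the trapezoidal rule."""
    w = 2 * math.pi * freq
    a = r * dt / (2 * l)
    steps = round(t_end / dt)
    i, peak = 0.0, 0.0
    v_prev = 0.0  # v(0) = v_peak * sin(0)
    for n in range(1, steps + 1):
        v_now = v_peak * math.sin(w * n * dt)
        # Trapezoidal update of L*di/dt = v - R*i
        i = (i * (1 - a) + dt / (2 * l) * (v_prev + v_now)) / (1 + a)
        peak = max(peak, abs(i))
        v_prev = v_now
    return peak

def timestep_check(dt, rel_tol=0.01):
    """Rerun with half the timestep and report the relative change in peak current."""
    coarse = simulate_rl_peak(dt)
    fine = simulate_rl_peak(dt / 2)
    change = abs(coarse - fine) / abs(fine)
    return change <= rel_tol, change

for dt in (5e-3, 50e-6):
    ok, change = timestep_check(dt)
    print(f"dt = {dt:g} s -> peak changes by {change:.2%} when halved, ok = {ok}")
```

A result that shifts noticeably when the timestep is halved has not converged, so students should not interpret it. A result that barely moves has passed one necessary check, though that alone does not prove the model is right.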

The most important judgement is simple: simulation is a teaching lab only when students can explain why the model behaves as it does, and when they can show basic evidence that it is not lying. SPS SOFTWARE fits that mindset when you use its transparent models to keep assumptions visible and debuggable, but the habit matters more than the platform. Keep simulation disciplined, and you’ll graduate engineers who trust results for the right reasons.