Key Takeaways

- Define the study question first, then match tool fidelity and outputs to that goal so results stay explainable and defensible.

- Choose EMT or RMS based on the time scales and physics you must capture, since the wrong modelling approach will produce confident-looking but wrong answers.

- Prioritize transparent models, solver stability, and repeatable workflows over feature count so teams and students can rerun, review, and trust the same cases.

Pick your simulation tool by matching study goals to model fidelity, solver behaviour, and workflow fit.

“Tool selection goes wrong when you start with a feature checklist instead of the question you need answered, the time scales you must resolve, and the outputs you must trust.”

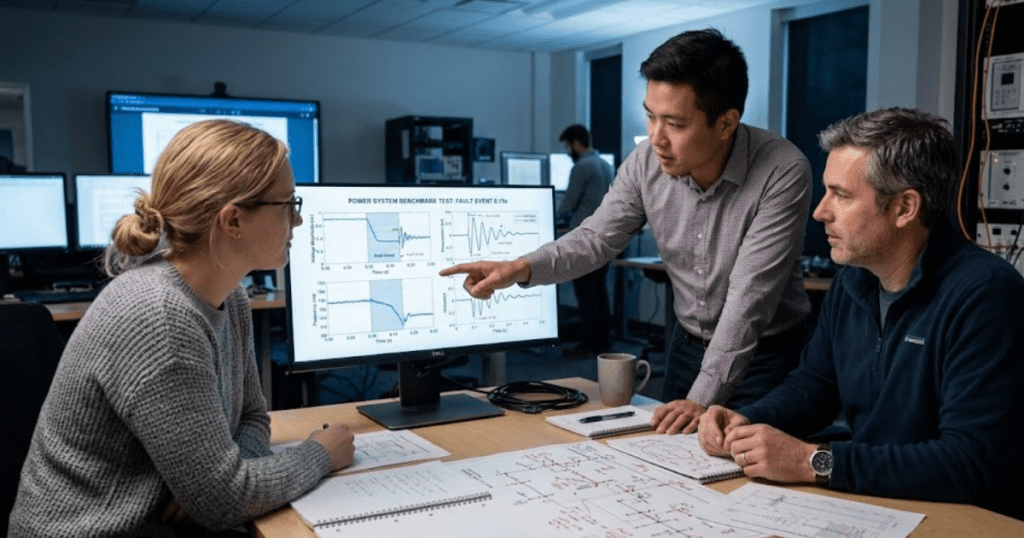

Teaching needs transparency so students can see why waveforms change, not just that they change. Engineering needs repeatable results that stay stable across parameter sweeps, model updates, and handoffs. A Nature survey reported that more than 70% of researchers had tried and failed to reproduce another scientist’s experiments, which is a reminder that repeatability is a technical requirement, not a nice-to-have.

A useful electrical simulation tools comparison treats accuracy, usability, and governance as a single package. You’re choosing assumptions, numerical methods, and model transparency, not just a user interface. You also need a plan for adoption in a teaching lab or an engineering team, since licensing, version control, and model review habits will shape results over time. The best power system simulation software is the one that makes your modelling assumptions visible and controllable, so you can explain results and defend them.

Start with study goals and required simulation fidelity

Your first evaluation step is writing down the study question, the events you must represent, and the outputs you will judge as correct. Fidelity is not “high” or “low”; it is a match between time scale and physics. If you cannot state what must be captured, you will overbuild models or miss key behaviours.

Start with three decisions you can document in a few lines: what phenomena matter, what you will ignore, and what error you can accept. Teaching and engineering differ most in what “good” means. A teaching lab often prioritizes clarity, inspectable component equations, and fast setup so students spend time learning, not wrestling with tool friction. Engineering work prioritizes traceability, model review, and stable runs across many cases, because a single unstable run can invalidate a whole set of conclusions.

A concrete way to lock this down is to define a “reference run” and a “stress run” before you install anything. A protection course might set a reference run as a 12.47 kV feeder fault with a grid-following inverter and a simple relay logic check, then use a stress run that tweaks fault resistance and inverter current limits to see if the results stay consistent. Once those two runs are written, every tool trial becomes measurable rather than impression-based.
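One way to write the two runs down before any tool trial is as a small, tool-independent case definition. The sketch below is only an illustration: the field names (`fault_resistance_ohm`, `inverter_current_limit_pu`, `outputs_to_judge`) and all numeric values other than the 12.47 kV feeder voltage are assumptions, not values from a real course or tool.

```python
from dataclasses import dataclass, replace
from typing import List, Sequence, Tuple


@dataclass(frozen=True)
class StudyCase:
    """One documented run: what is simulated and which outputs are judged."""
    name: str
    feeder_kv: float                   # nominal feeder voltage, line-to-line
    fault_resistance_ohm: float        # hypothetical parameter name
    inverter_current_limit_pu: float   # hypothetical parameter name
    outputs_to_judge: Tuple[str, ...]  # hypothetical output names


# Reference run: the baseline every candidate tool must reproduce.
# The 0.0 ohm and 1.2 pu values are placeholders, not recommendations.
reference_run = StudyCase(
    name="reference",
    feeder_kv=12.47,
    fault_resistance_ohm=0.0,
    inverter_current_limit_pu=1.2,
    outputs_to_judge=("relay_trip_time_s", "fault_current_peak_a"),
)


def stress_variants(
    base: StudyCase,
    fault_resistances: Sequence[float],
    current_limits: Sequence[float],
) -> List[StudyCase]:
    """Build stress runs by varying only the two parameters under test."""
    return [
        replace(
            base,
            name=f"stress_rf{rf}_ilim{il}",
            fault_resistance_ohm=rf,
            inverter_current_limit_pu=il,
        )
        for rf in fault_resistances
        for il in current_limits
    ]


if __name__ == "__main__":
    for case in stress_variants(reference_run, [0.5, 5.0], [1.1, 1.5]):
        print(case.name, case.fault_resistance_ohm, case.inverter_current_limit_pu)
```

Keeping the case definition frozen and separate from any tool means the same list of runs can be handed to each candidate platform unchanged.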

Compare EMT and RMS approaches for power system modelling

The main difference between EMT and RMS simulation is what the solver represents directly and what it averages away. EMT modelling solves for instantaneous voltages and currents, so it resolves fast electromagnetic transients and switching effects, but it needs small time steps, typically in the microsecond range. RMS modelling represents the network with fundamental-frequency phasors and focuses on slower electromechanical dynamics, so it covers longer time horizons with less computational load.

EMT is the right lens when your question depends on waveform shape, fast controls, converter switching behaviour, protection interactions tied to instantaneous values, or harmonics. RMS is the right lens when your question depends on longer-duration voltage and frequency behaviour, stability margins, or operating-point changes where waveform detail does not change the answer. Neither approach is “better” in general, and both can produce misleading confidence if used outside their valid assumptions.
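A toy case can make the distinction concrete. The sketch below energizes a series R-L branch from a sinusoidal source and integrates the instantaneous current with the trapezoidal rule, then compares the peak against the steady-state phasor amplitude. The circuit values, step size, and function names are illustrative assumptions; this is not how any particular tool implements either study type.

```python
import math
from typing import List, Tuple


def simulate_rl_energization(
    r_ohm: float, l_h: float, v_peak: float,
    freq_hz: float, dt_s: float, t_end_s: float,
) -> Tuple[List[float], List[float]]:
    """Instantaneous-value solution of L di/dt = v(t) - R i, with i(0) = 0.

    The source is v(t) = v_peak * sin(omega * t), switched in at a voltage
    zero, and the integration uses the trapezoidal rule with a fixed step.
    """
    omega = 2.0 * math.pi * freq_hz
    a = r_ohm * dt_s / (2.0 * l_h)
    b = dt_s / (2.0 * l_h)
    n_steps = int(round(t_end_s / dt_s))
    current = 0.0
    v_prev = 0.0  # v(0) = v_peak * sin(0)
    times, currents = [0.0], [0.0]
    for n in range(1, n_steps + 1):
        t = n * dt_s
        v_now = v_peak * math.sin(omega * t)
        # Trapezoidal update: average both the source and the R*i term.
        current = ((1.0 - a) * current + b * (v_prev + v_now)) / (1.0 + a)
        v_prev = v_now
        times.append(t)
        currents.append(current)
    return times, currents


def phasor_amplitude(r_ohm: float, l_h: float, v_peak: float, freq_hz: float) -> float:
    """Steady-state current amplitude from the phasor relation |I| = |V| / |Z|."""
    return v_peak / math.hypot(r_ohm, 2.0 * math.pi * freq_hz * l_h)


if __name__ == "__main__":
    # Illustrative values only: a high X/R branch on a 60 Hz source.
    _, i_inst = simulate_rl_energization(0.1, 0.01, 1.0, 60.0, 50e-6, 0.05)
    i_phasor = phasor_amplitude(0.1, 0.01, 1.0, 60.0)
    print("peak instantaneous / phasor amplitude:",
          max(abs(i) for i in i_inst) / i_phasor)
```

With these values the instantaneous peak comes out well above the phasor amplitude, because the decaying DC offset from switching at a voltage zero is part of the waveform but absent from the steady-state phasor answer. If the study question depends on that first peak, a phasor-only result will look clean and still be the wrong quantity.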

During tool evaluation, look past marketing terms and ask what the platform actually solves, how it initializes states, and what it assumes about network frequency and balance. A tool can offer both approaches, but you still need to check how models transition between time scales and what signals are available for verification. A practical selection habit is to decide EMT or RMS first, then shortlist tools that do that job cleanly, because forcing a tool into the wrong study type is a common source of wasted modelling time.

Check libraries for converters, protection, feeders, and control logic

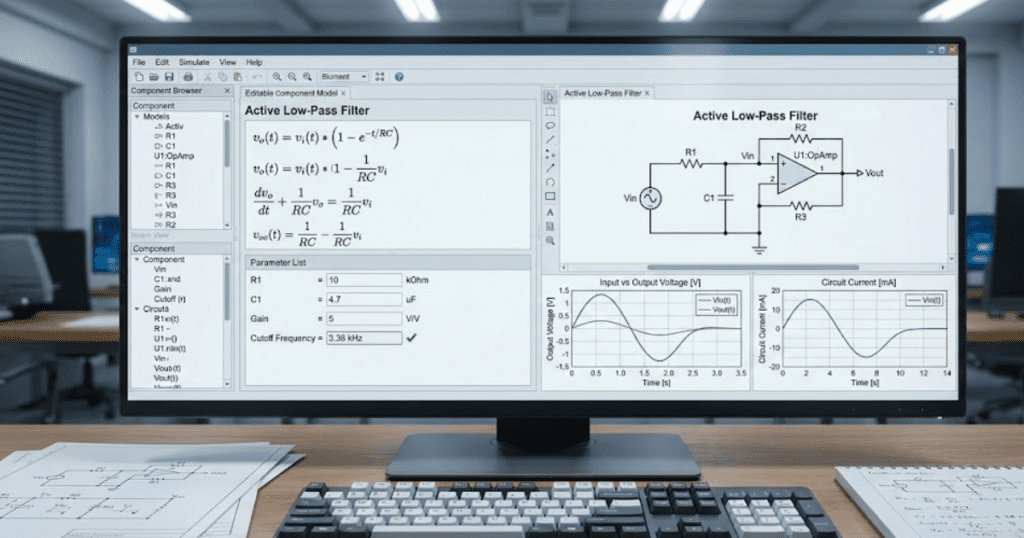

Library coverage matters when it reduces custom modelling effort without hiding physics behind locked blocks. You want component models that match your study goals, expose parameters that affect behaviour, and provide enough documentation to review equations and assumptions. Library breadth matters only if the models are consistent and easy to audit.

Converter-heavy grids raise the stakes for this check. A global electricity review reported renewables produced 30% of global electricity in 2023, which means many studies now depend on inverter controls, limits, and protection coordination rather than only synchronous machine dynamics. If the library models hide current limiting, phase-locked loop behaviour, or control saturation, you will get clean-looking plots that do not match field behaviour.

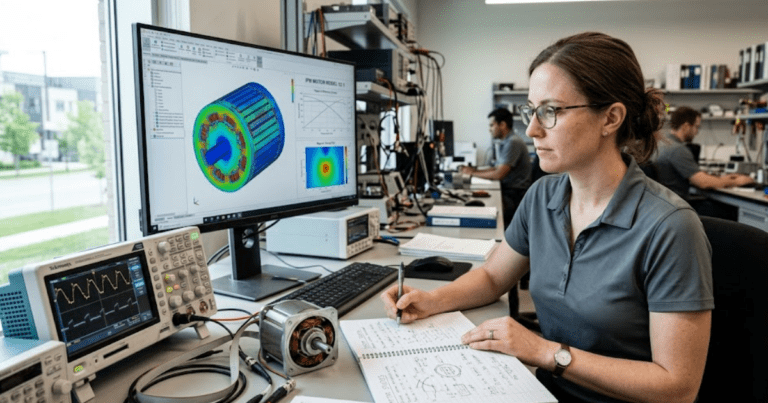

For teaching, model transparency is part of the curriculum. Students learn faster when they can inspect a control loop, change a filter value, and connect that change to waveform effects without guessing what a block does. For engineering, transparency supports peer review and reduces handoff risk between teams. You should also check how protection and control logic is represented, since the tool’s modelling style will shape how you validate timing, thresholds, and state transitions.

Assess solver settings, numerical stability, and reproducible results

“Solver quality shows up as stable runs, clear diagnostics, and repeatable results across small parameter changes.”

You should be able to control time step or tolerances, understand convergence limits, and reproduce a run from saved settings and model versions. If the platform cannot explain why a run failed, you will spend more time debugging than studying.

Numerical stability is not only a “solver problem”; it is a modelling discipline problem you need tool support for. Stiff networks, tight control loops, discontinuities, and ideal switches all push solvers into edge cases. Good platforms help you manage this with clear event handling, sensible defaults you can override, and warnings that point to the underlying cause. Reproducibility also includes governance basics: storing solver settings with the model, tracking library versions, and keeping run metadata so two engineers can confirm they ran the same case.
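The governance basics above can be approximated outside any tool with a small fingerprint of the model file, solver settings, and library versions. This is a minimal sketch using only the Python standard library; the setting names (`dt_s`, `method`) and the library name in the usage example are hypothetical placeholders.

```python
import hashlib
import json
from typing import Any, Dict


def case_fingerprint(
    model_file_bytes: bytes,
    solver_settings: Dict[str, Any],
    library_versions: Dict[str, str],
) -> str:
    """Return a SHA-256 hex digest that identifies one simulation case.

    Two engineers who get the same digest ran the same model bytes with
    the same recorded solver settings and library versions.
    """
    payload = {
        "model_sha256": hashlib.sha256(model_file_bytes).hexdigest(),
        "solver": solver_settings,
        "libraries": library_versions,
    }
    # Sorted keys and fixed separators make the JSON text independent of
    # the order in which the dictionaries were built.
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


if __name__ == "__main__":
    digest = case_fingerprint(
        b"<contents of the model file>",
        {"dt_s": 5e-5, "method": "trapezoidal"},  # hypothetical setting names
        {"component_library": "1.0"},             # hypothetical library name
    )
    print(digest)
```

Storing the digest next to each result file gives a quick check during handoffs: if the digests differ, the two runs were not the same case, whatever the plots look like.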

| What you test during a trial | What good behaviour looks like | What breaks if you skip it |
| --- | --- | --- |
| You run the same case twice with identical settings. | The results match within a stated tolerance and the tool records key settings. | You cannot tell tool variance from system behaviour changes. |
| You vary time step or tolerances across a small range. | Trends stay consistent and any differences are explainable and bounded. | Plots look plausible but depend on numerical artefacts. |
| You test initialization from a steady operating point. | Start-up transients are controlled and initial conditions are inspectable. | Early transient behaviour contaminates protection and control results. |
| You force a hard event like a fault or breaker action. | The solver reports events clearly and recovers without silent instability. | Hidden discontinuities create non-physical oscillations or solver failure. |
| You inspect diagnostics after a failed or slow run. | Error messages point to elements, time ranges, or limits you can adjust. | Debug time grows and model trust drops across the team. |
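The first two rows of the table can be turned into small automated checks on exported results. The sketch below assumes each run has been exported as a list of samples on the same time grid, and that a scalar metric (for example a peak current) has been extracted per time step setting; both helper names and the sample numbers are hypothetical.

```python
import math
from typing import Sequence


def runs_match(
    run_a: Sequence[float],
    run_b: Sequence[float],
    abs_tol: float,
    rel_tol: float = 0.0,
) -> bool:
    """True if two exported waveforms agree sample by sample within tolerance."""
    if len(run_a) != len(run_b):
        return False
    return all(
        math.isclose(x, y, rel_tol=rel_tol, abs_tol=abs_tol)
        for x, y in zip(run_a, run_b)
    )


def relative_spread(metric_values: Sequence[float]) -> float:
    """Spread of one scalar metric across solver settings, relative to its size.

    A small value suggests the metric does not depend on the time step
    over the range tested; a large value points to a numerical artefact.
    """
    lo, hi = min(metric_values), max(metric_values)
    scale = max(abs(lo), abs(hi))
    return 0.0 if scale == 0.0 else (hi - lo) / scale


if __name__ == "__main__":
    # Made-up numbers standing in for exported results.
    print(runs_match([1.0, 2.0], [1.0, 2.0000001], abs_tol=1e-6))
    print(relative_spread([1.920, 1.921, 1.919]))
```

The tolerance itself is a study decision, so it belongs in the documented acceptance criteria rather than being chosen after the results are in.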

Evaluate MATLAB Simulink links, collaboration, and lab deployment

Workflow fit is the difference between a tool that gets used and a tool that sits idle after procurement. You should check how the platform exchanges data with MATLAB and Simulink, how it supports parameter sweeps, and how it packages models for sharing. Lab deployment also needs predictable installs, licensing clarity, and version consistency across machines.

Integration checks should focus on what you will actually do day to day: import and export of parameters, scripted runs, and clean interfaces for controls work that lives outside the power network model. Collaboration checks should focus on model review and change tracking, since simulation credibility depends on being able to explain what changed and why results moved. Teaching labs add another constraint: students need to get running quickly with minimal configuration drift between workstations, or the course becomes an IT exercise.

SPS SOFTWARE is often evaluated in this step because teams want open, editable component models paired with a workflow that fits MATLAB- and Simulink-based control design. That practical combination matters when you need both transparency for learning and consistent execution for engineering studies. Tool trials should include a short “handoff test” where one person creates a case and another person reruns it from scratch using only the shared package, since that exposes hidden dependencies early.

Build a scoring rubric for electrical simulation tools comparison

A scoring rubric turns tool selection into a repeatable choice you can defend to a lab director or engineering manager. Start with a few non-negotiables tied to your study goals, then score the rest with weights that reflect how often you will use each capability. A good rubric also forces you to document tradeoffs instead of debating preferences.

Keep the rubric short enough that you will actually use it after the first meeting. These five categories cover most selection work without losing technical detail:

- Study fidelity fit based on EMT or RMS needs

- Model transparency and inspectable equations and parameters

- Library coverage aligned to your network and control scope

- Numerical robustness and reproducibility across reruns

- Workflow and deployment fit for labs and teams
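The five categories can be scored with a short script so the weights and non-negotiables are written down rather than argued from memory. This is a sketch under assumptions: the 0 to 5 scale, the category keys, the example weights, and the example scores are all illustrative, not a recommended weighting.

```python
from typing import Dict, Mapping, Optional

CATEGORIES = (
    "fidelity_fit",
    "model_transparency",
    "library_coverage",
    "numerical_robustness",
    "workflow_fit",
)


def score_tool(
    scores: Mapping[str, float],
    weights: Mapping[str, float],
    minimums: Optional[Mapping[str, float]] = None,
) -> Optional[float]:
    """Weighted average on the same scale as the inputs.

    Returns None when any non-negotiable minimum is missed, so a tool
    cannot buy its way past a hard requirement with minor features.
    """
    for category, minimum in (minimums or {}).items():
        if scores[category] < minimum:
            return None
    total_weight = sum(weights[c] for c in CATEGORIES)
    return sum(scores[c] * weights[c] for c in CATEGORIES) / total_weight


if __name__ == "__main__":
    # Illustrative weights and scores on a 0 to 5 scale.
    weights: Dict[str, float] = {
        "fidelity_fit": 3, "model_transparency": 2, "library_coverage": 2,
        "numerical_robustness": 2, "workflow_fit": 1,
    }
    tool_a = {
        "fidelity_fit": 5, "model_transparency": 4, "library_coverage": 3,
        "numerical_robustness": 4, "workflow_fit": 2,
    }
    print(score_tool(tool_a, weights, minimums={"fidelity_fit": 3}))
```

Rerunning the same scores with a second, deliberately different set of weights is a cheap way to see whether a ranking holds up or depends on one generous weight.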

Judgment comes from how the scores behave under pressure, not from a perfect spreadsheet. If a tool wins only when you give it generous weights on minor features, it will fail you later when schedules tighten and you need dependable runs. When you apply this rubric consistently, SPS SOFTWARE tends to show its value where transparent modelling and reproducible execution matter most, which is the part of tool choice that determines long-term trust in results. The goal is not a tool with the longest feature list; it is a tool you can explain, rerun, and defend.