Key Takeaways

- Short circuit analysis works best when you choose the method from the protection question instead of starting with the fullest model available.

- Three-phase faults, sequence networks, and zone-based case selection each answer different protection questions, so none of them should be treated as optional shortcuts.

- Credible settings come from disciplined validation of data, models, and fault results against plant evidence.

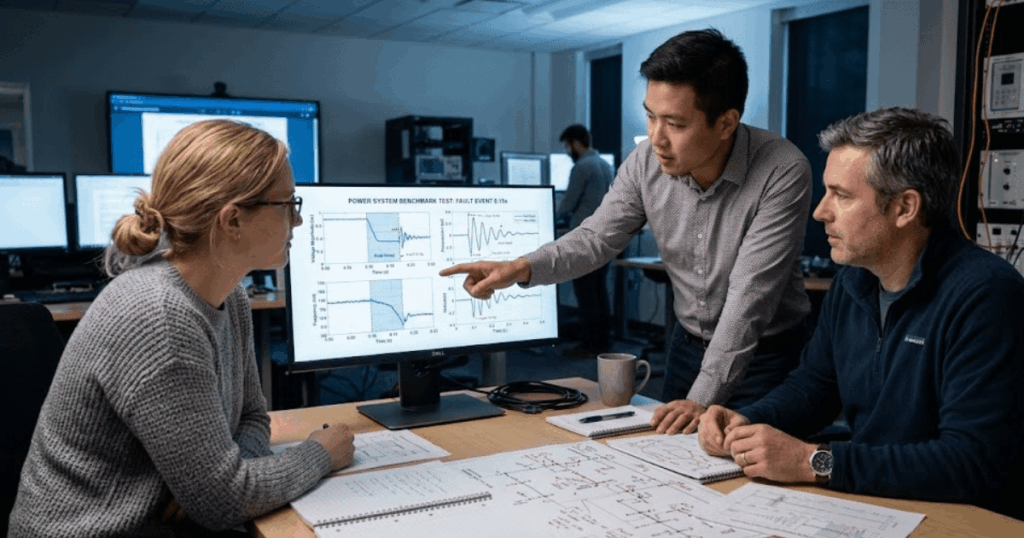

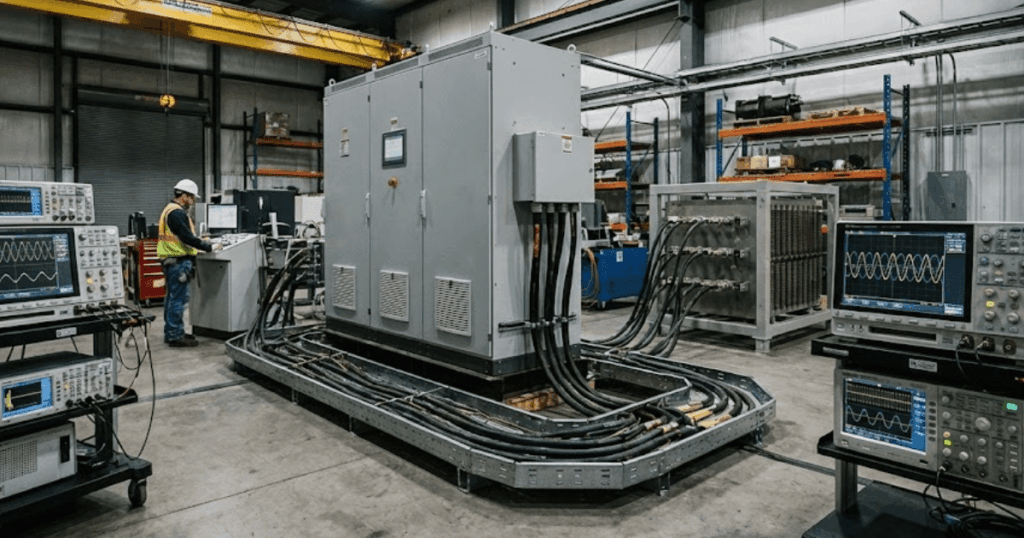

Accurate short circuit analysis keeps relay settings credible and equipment duties honest.

Protection work goes wrong when engineers treat fault analysis in power systems as a one-step calculation instead of a checked chain of assumptions. U.S. electricity customers were without power for an average of 5.5 hours in 2022, which shows how much system performance matters when a fault is cleared poorly or studied badly. You need a method that fits the duty under review, the network detail you trust, and the relay function you’re checking. Short circuit analysis in power systems works best when you start with the protection question, then pick the simplest method that still captures the fault behaviour that matters.

Study scope determines the right short-circuit method

The right short-circuit method depends on what the study must prove. A breaker duty check needs maximum available current. A relay sensitivity check needs the weakest fault that still must trip. Scope comes first because one network can require different assumptions for each task.

A plant expansion shows the difference quickly. A new 15 kV motor bus can need one study for switchgear interrupting duty, another for feeder ground relay pickup, and a third for incident energy. You can’t use the same fault set for all three jobs and expect useful answers. The method is only right when its assumptions line up with the setting or rating you have to approve, so the first step in fault analysis is always defining the protection decision that rests on the result.

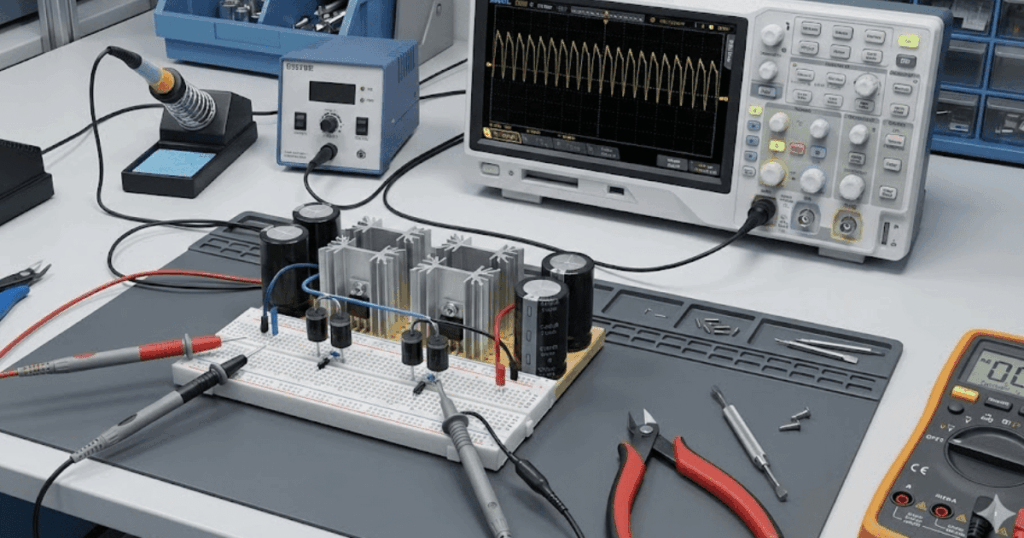

Network reduction keeps hand calculations useful for first checks

Network reduction still has value because it gives you a fast truth check. A Thevenin equivalent at the fault point shows source strength. It also shows X/R ratio and likely fault level. You don’t need the full model to test first assumptions.

A feeder relay review often starts with the utility source, one transformer, one cable run, and the equivalent motor contribution behind the bus. That stripped network will tell you if expected fault current is closer to 2 kA or 20 kA, and that gap matters before you trust any detailed case file. A reduced model also shows when a result doesn’t make physical sense. Once the order of magnitude looks right, you can move to fuller models for protection coordination and equipment checks with much more confidence.
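The first check described above can be sketched in a few lines of Python. This is a minimal per-unit sketch, not a full study: the 100 MVA base, the 13.8 kV bus voltage, and every impedance value are hypothetical placeholders, and the motor group is lumped into one equivalent branch in parallel with the utility path.

```python
import math


def base_current_ka(s_base_mva: float, v_base_kv: float) -> float:
    """Three-phase base current in kA for the chosen MVA and kV base."""
    return s_base_mva / (math.sqrt(3) * v_base_kv)


def source_impedance_pu(s_base_mva: float, s_sc_mva: float, x_over_r: float) -> complex:
    """Per-unit source impedance from short-circuit MVA and X/R ratio."""
    z_mag = s_base_mva / s_sc_mva
    r = z_mag / math.sqrt(1.0 + x_over_r ** 2)
    return complex(r, r * x_over_r)


def parallel(z_a: complex, z_b: complex) -> complex:
    """Equivalent impedance of two branches feeding the same fault point."""
    return z_a * z_b / (z_a + z_b)


def three_phase_fault_ka(z_th_pu: complex, s_base_mva: float, v_base_kv: float,
                         v_prefault_pu: float = 1.0) -> float:
    """Symmetrical three-phase fault current in kA from the Thevenin impedance."""
    return v_prefault_pu / abs(z_th_pu) * base_current_ka(s_base_mva, v_base_kv)


# All values below are illustrative assumptions, already on the 100 MVA base.
S_BASE_MVA, V_BASE_KV = 100.0, 13.8
z_source = source_impedance_pu(S_BASE_MVA, s_sc_mva=1500.0, x_over_r=10.0)
z_transformer = complex(0.03, 0.30)
z_cable = complex(0.02, 0.03)
z_motor = complex(0.05, 1.50)  # lumped equivalent of the motor group

# Utility path in series, then in parallel with the motor contribution.
z_th = parallel(z_source + z_transformer + z_cable, z_motor)
i_fault_ka = three_phase_fault_ka(z_th, S_BASE_MVA, V_BASE_KV)

print(f"Fault current ~ {i_fault_ka:.1f} kA, X/R ~ {z_th.imag / z_th.real:.1f}")
```

A result from a sketch like this is only an order-of-magnitude check. Its purpose is to tell you whether the detailed case file is plausible, not to replace it.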

Three-phase faults set the upper bound for duty

Three-phase faults matter because they usually produce the highest current, although a single line-to-ground fault close to a solidly grounded source can exceed it. That current sets the largest mechanical stress on equipment and the interrupting duty a breaker must clear. Three-phase faults are therefore the standard starting point for breaker duty and bus checks.

A 27.6 kV industrial substation makes the point clearly. A fault placed at the main bus can show the strongest symmetrical current the source and motors can supply, while a ground fault on a remote feeder will often be much lower. The larger case governs breaker interrupting rating and bus bracing. Symmetrical fault analysis is simple compared with asymmetrical studies, yet it answers the first hardware question protection engineers face: can the equipment interrupt the strongest fault the system will deliver?

| When you need this answer | Start with this method |

|---|---|

| A switchgear duty review needs the highest current a bus can see. | A balanced three phase bus fault gives the first current limit for interrupting checks. |

| A ground relay pickup review needs the weakest fault that still must trip. | A single line to ground study with sequence networks shows the zero sequence path that controls sensitivity. |

| A distance relay reach review needs apparent impedance along one protected line. | Fault cases placed at several points on that line show how source split alters the relay view. |

| A coordination review needs current over a practical range of source conditions. | RMS fault studies at minimum and maximum source strength show timing margins that survive operating changes. |

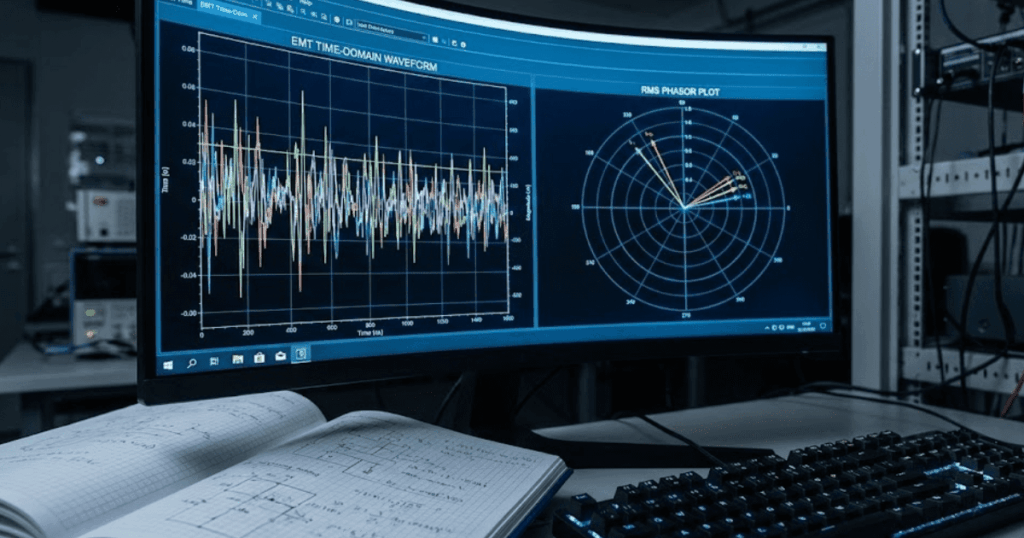

| A feeder with several converters needs current shape and control response. | An EMT model shows current limiting and first cycle effects that RMS tools smooth out. |

Sequence networks remain essential for unbalanced fault studies

Sequence networks remain the clearest way to study unbalanced faults. They separate positive, negative, and zero sequence paths. That split shows why ground fault current rises or collapses for the case under study. Asymmetrical fault analysis becomes useful only when those paths are modelled correctly.

A grounded wye to delta transformer between a utility source and a plant feeder makes this visible. A single line to ground fault on the delta side won’t pass zero sequence current back to the source the same way a grounded wye to grounded wye bank will. Negative sequence current still matters for machine heating and phase unbalance, but zero sequence current will decide how ground elements behave. Engineers who skip sequence networks often end up with ground relays that look generous on paper and blind on the actual feeder.
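The effect of the zero sequence path can be illustrated with the standard series connection of the three sequence networks for a single line to ground fault, where the fault current is 3V / (Z1 + Z2 + Z0 + 3Zf). The sketch below is a simplified illustration: the per-unit impedances are hypothetical, and a blocked zero sequence path is represented as `None` and treated as zero current, which ignores the small capacitive charging current a real ungrounded system would still carry.

```python
from typing import Optional


def three_phase_fault_pu(z1: complex, v_prefault: float = 1.0) -> float:
    """Balanced fault current magnitude: only the positive sequence network is involved."""
    return abs(v_prefault / z1)


def slg_fault_pu(z1: complex, z2: complex, z0: Optional[complex],
                 z_fault: complex = 0j, v_prefault: float = 1.0) -> float:
    """Single line to ground fault current magnitude in per unit.

    z0=None models an open zero sequence path, for example a delta winding
    between the fault and the nearest grounded source.
    """
    if z0 is None:
        return 0.0
    return abs(3.0 * v_prefault / (z1 + z2 + z0 + 3.0 * z_fault))


# Hypothetical per-unit values on a common base.
z1 = z2 = 0.40j
cases = {
    "grounded wye path to source": 0.30j,
    "neutral resistor of 2.0 pu (appears as 3*Rn)": 0.30j + 3 * 2.0,
    "delta winding blocks zero sequence": None,
}

print(f"three-phase fault: {three_phase_fault_pu(z1):.2f} pu")
for label, z0 in cases.items():
    print(f"SLG, {label}: {slg_fault_pu(z1, z2, z0):.2f} pu")
```

Running the three cases side by side shows why a ground relay pickup chosen from the first case can be blind in the other two.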

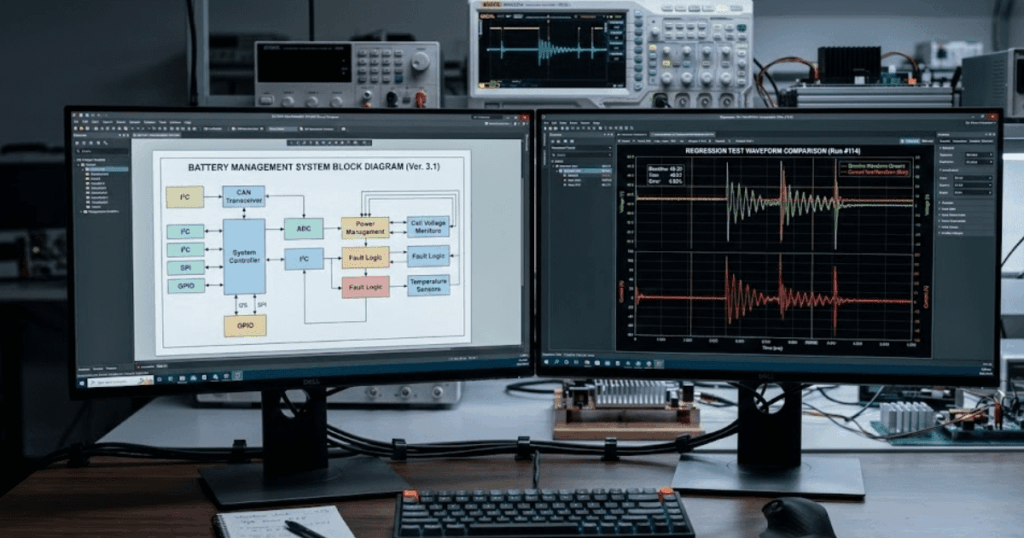

Data quality errors usually outweigh calculation method errors

Bad data will distort fault results more than the difference between sound methods. Wrong transformer impedance shifts calculated current. Missing motor contribution understates maximum fault duty. Protection settings sit on small margins, so data quality has to come first.

Protection system misoperations were reported at a 6.5% rate on the bulk power system in 2023, which is a reminder that settings and models still fail under routine operation. A common plant study error comes from using transformer nameplate impedance on the wrong MVA base, which distorts both maximum and minimum fault levels. Another comes from leaving out local motor contribution after a site expansion. Those errors deserve attention before you refine relay curves.

- Source short circuit level and X/R ratio match the latest utility data.

- Transformer impedance is converted to the study base correctly.

- Grounding method is modelled at every source and transformer.

- Motor and converter contribution is included where it matters.

- Instrument transformer ratios match the relay inputs and settings.
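The base conversion item in the checklist above is worth a worked example, because the error it prevents is large. The sketch below uses the standard per-unit change of base relation; the 2.5 MVA transformer, its 5.75% impedance, and the 100 MVA study base are hypothetical values chosen for illustration.

```python
def convert_impedance_pu(z_pu_old: float, s_old_mva: float, s_new_mva: float,
                         v_old_kv: float = 1.0, v_new_kv: float = 1.0) -> float:
    """Move a per-unit impedance from one MVA/kV base to another.

    Z_new = Z_old * (S_new / S_old) * (V_old / V_new)^2
    Leave the voltage arguments at their defaults when the kV base is unchanged.
    """
    return z_pu_old * (s_new_mva / s_old_mva) * (v_old_kv / v_new_kv) ** 2


# Hypothetical nameplate: 5.75% on the transformer's own 2.5 MVA rating.
z_nameplate_pu = 0.0575
z_on_study_base = convert_impedance_pu(z_nameplate_pu, s_old_mva=2.5, s_new_mva=100.0)

# Entering the nameplate value unconverted on the 100 MVA base overstates
# the current through the transformer by this factor.
overstatement = z_on_study_base / z_nameplate_pu

print(f"Z on study base: {z_on_study_base:.2f} pu")
print(f"Unconverted entry overstates through-fault current by {overstatement:.0f}x")
```

An error of this size usually shows up in a hand check against a reduced network, which is one more reason to run that check before trusting a detailed case.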

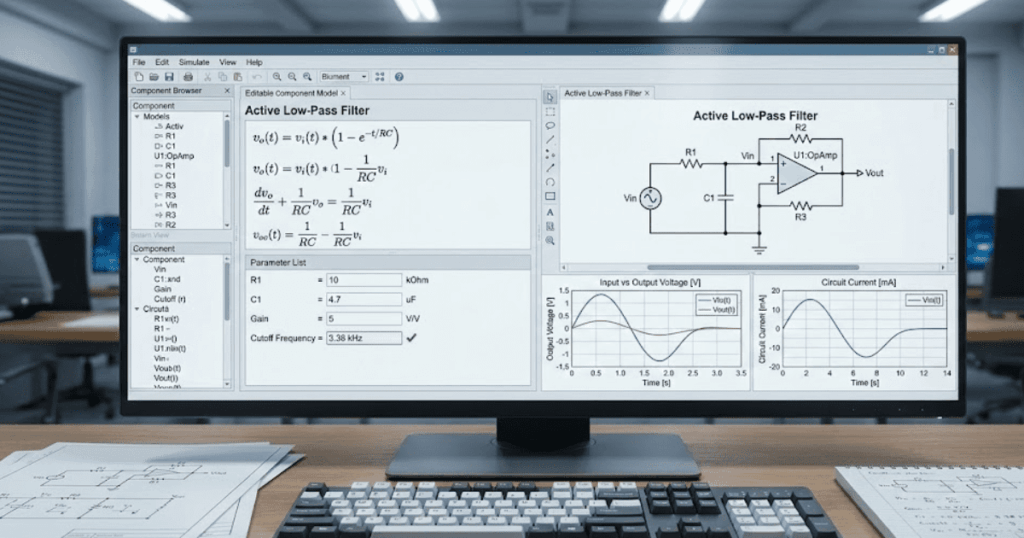

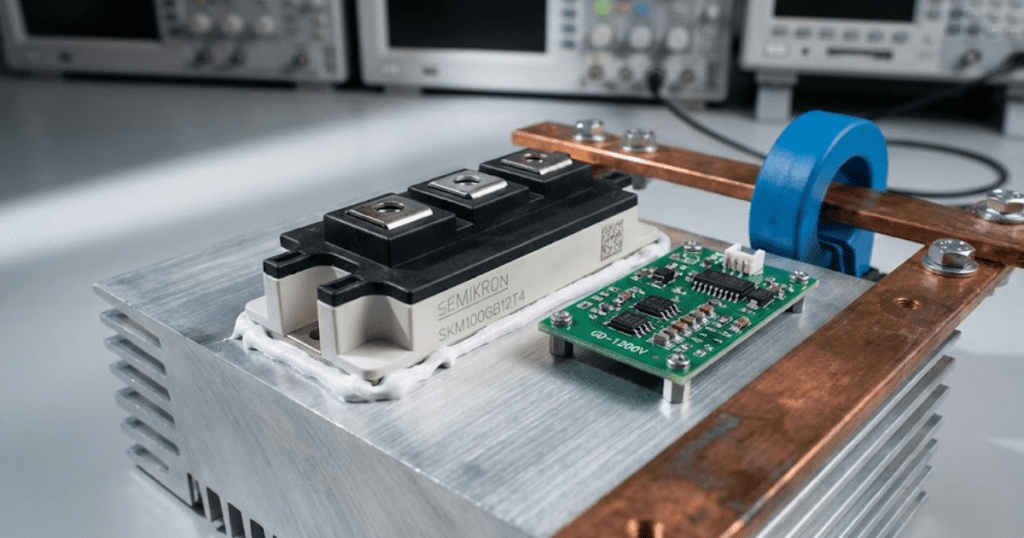

RMS tools suit steady fault levels better than EMT

RMS tools are best for steady fault levels and most coordination work. EMT tools are better when wave shape and control action matter. The time scale of the protection question should pick the method. That keeps the model focused and the result usable.

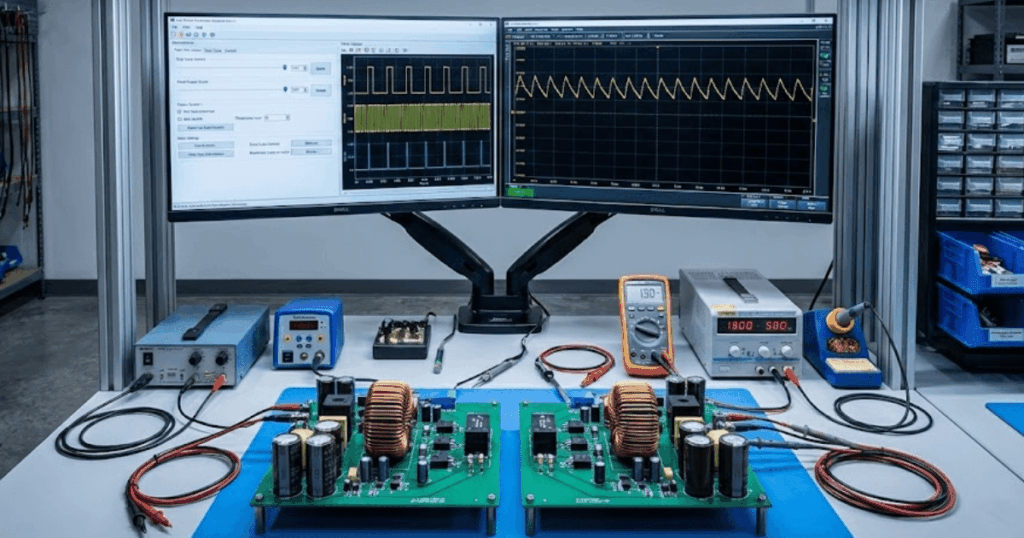

A feeder with several converters shows the split clearly. An RMS study can estimate current magnitude seen by time overcurrent elements across many contingencies, which keeps coordination work efficient. An EMT study becomes important when inverter current limiting, control delays, or current reversal can affect protection logic during the first cycle. SPS SOFTWARE is useful in that stage because transparent models let you inspect the assumptions behind source impedance, converter limits, and relay inputs instead of treating the result as a sealed output. You’ll get better answers when you reserve EMT detail for cases where transient behaviour actually changes the protection outcome.

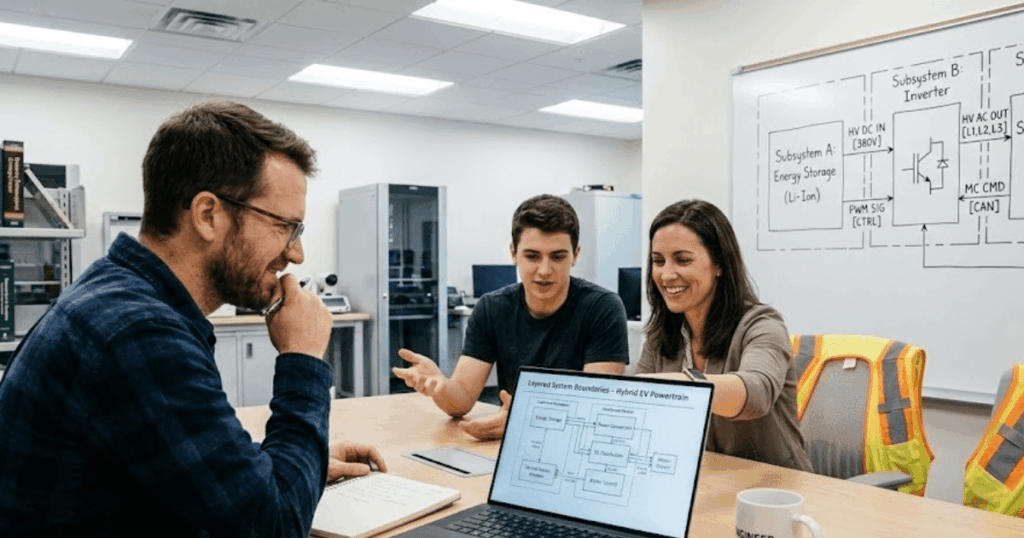

Protection checks should start from zone-based fault cases

Protection checks work best when fault cases follow protection zones. Each zone needs internal and external faults. Each zone also needs strong and weak source conditions. That structure ties short circuit analysis directly to what the relay has to judge.

A distance relay on a transmission line needs faults placed at several points on the protected line, with source strength varied at each end. A feeder overcurrent element needs near faults for speed and remote faults for sensitivity. Differential protection needs internal faults plus through faults that stress restraint and current transformer performance. When you organize cases by zone, gaps show up quickly, and you won’t mistake a complete bus fault report for a complete protection study.
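One way to make that zone structure explicit is to generate the case list from the zones instead of writing it by hand. The sketch below is an illustration only: the `FaultCase` record, the zone names, the fault locations, and the fault type labels are all hypothetical, and a real study tool would attach network data to each case.

```python
from dataclasses import dataclass
from itertools import product
from typing import Dict, List, Sequence


@dataclass(frozen=True)
class FaultCase:
    zone: str
    location: str
    internal: bool    # True if the fault lies inside the protected zone
    fault_type: str
    source: str       # source strength condition


def build_zone_cases(zones: Dict[str, Dict[str, List[str]]],
                     fault_types: Sequence[str] = ("3PH", "SLG"),
                     sources: Sequence[str] = ("strong", "weak")) -> List[FaultCase]:
    """Cross every internal and external location with fault type and source strength."""
    cases = []
    for zone, locs in zones.items():
        tagged = ([(loc, True) for loc in locs.get("internal", [])]
                  + [(loc, False) for loc in locs.get("external", [])])
        for (loc, internal), ftype, src in product(tagged, fault_types, sources):
            cases.append(FaultCase(zone, loc, internal, ftype, src))
    return cases


def zones_with_gaps(zones: Dict[str, Dict[str, List[str]]]) -> List[str]:
    """Zones that lack either internal or external fault locations."""
    return [z for z, locs in zones.items()
            if not locs.get("internal") or not locs.get("external")]


# Hypothetical zones and locations.
zones = {
    "line_distance": {"internal": ["10% of line", "50% of line", "90% of line"],
                      "external": ["remote bus"]},
    "feeder_overcurrent": {"internal": ["close-in", "line end"],
                           "external": ["adjacent feeder"]},
    "bus_differential": {"internal": ["main bus"], "external": []},
}

cases = build_zone_cases(zones)
print(len(cases), "cases; zones with gaps:", zones_with_gaps(zones))
```

The gap check is the useful part. In this made-up data the differential zone has no through-fault case, which is exactly the kind of omission a bus fault report alone would hide.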

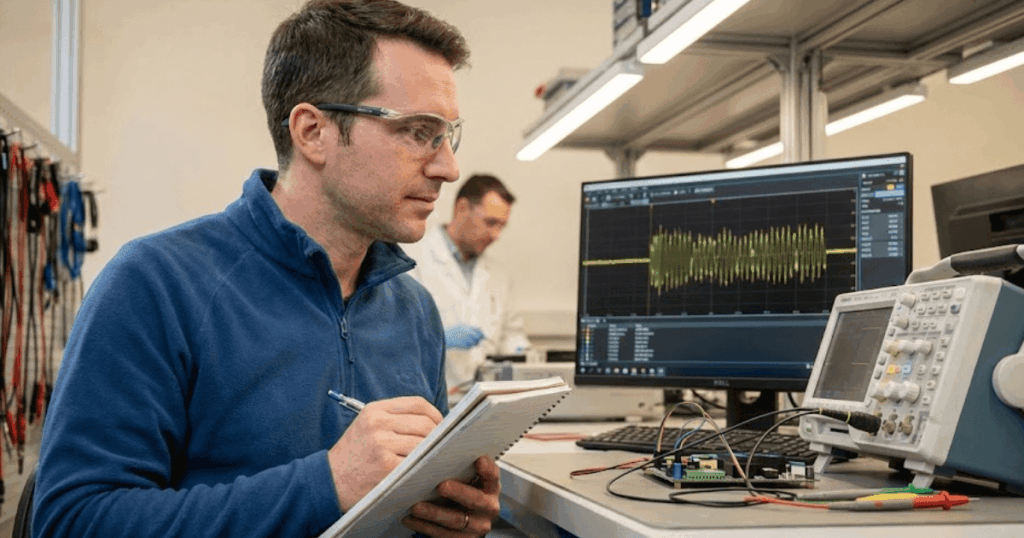

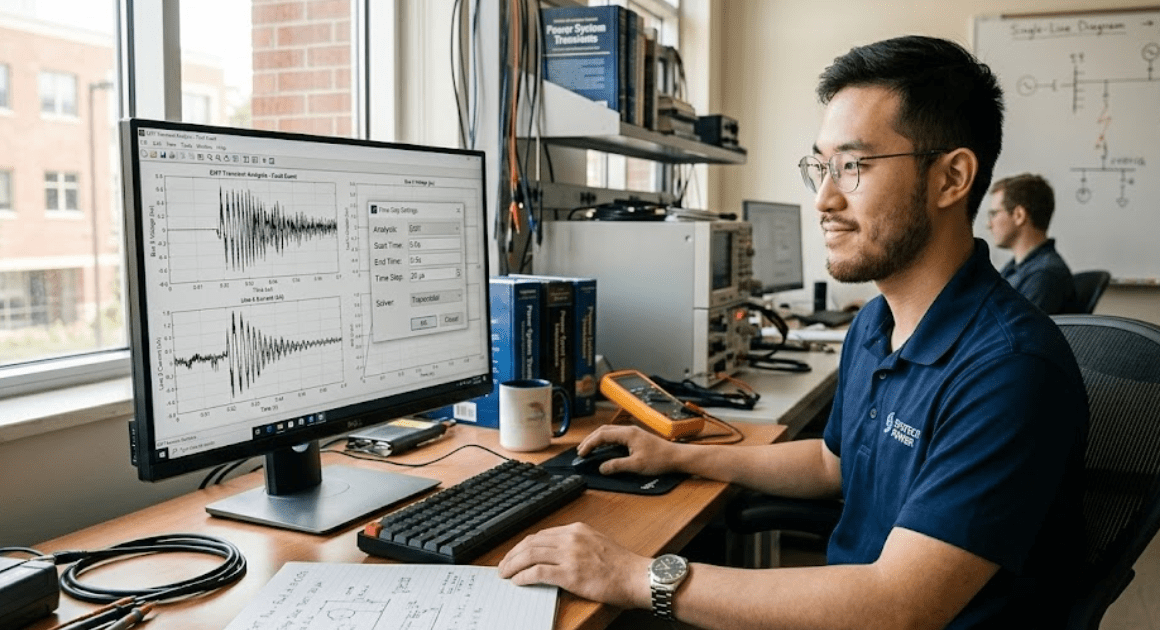

Settings are credible only after results match plant data

Settings become credible only when calculated faults agree with plant evidence over time. Relay event files should support the study. Commissioning tests should support it too. Matching study results to field evidence turns fault analysis into dependable protection practice.

A mismatch always means something needs attention. It’s often a grounding connection modelled incorrectly, a motor block omitted from the study, or a relay using different current transformer ratios than the file says. Engineers who keep closing that loop build settings that stay stable through outages, expansions, and audits. SPS SOFTWARE fits that discipline well because transparent models make it easier to trace a result back to the parameter or assumption that created it. Credible protection work comes from checked models, checked data, and checked results, repeated until the network and the relay tell the same story.
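Closing that loop can start with a simple comparison between calculated fault currents and values taken from relay event files. The sketch below assumes a hypothetical record layout and an arbitrary 10% tolerance. Neither comes from a standard, and the tolerance in particular should reflect your own measurement and modelling uncertainty.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FaultComparison:
    event_id: str
    calculated_ka: float   # from the study, for the same fault location and type
    recorded_ka: float     # from the relay event file

    @property
    def error(self) -> float:
        """Signed relative error of the study against the recorded value."""
        return (self.calculated_ka - self.recorded_ka) / self.recorded_ka


def flag_mismatches(records: List[FaultComparison],
                    tolerance: float = 0.10) -> List[FaultComparison]:
    """Return the events whose study result differs from the record by more than tolerance."""
    return [r for r in records if abs(r.error) > tolerance]


# Hypothetical events for illustration only.
records = [
    FaultComparison("F-001", calculated_ka=12.4, recorded_ka=12.0),
    FaultComparison("F-002", calculated_ka=4.1, recorded_ka=5.3),
]

for r in flag_mismatches(records):
    print(f"{r.event_id}: study differs from record by {r.error:+.0%}")
```

A flagged event is a prompt to recheck grounding, motor contribution, and current transformer ratios for that location, not proof that the study is wrong.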