Key Takeaways

- Interoperability matters because it keeps model intent stable as work moves across toolchains.

- Data alignment and disciplined system exchange keep parameters, units, and results reproducible across teams.

- Workflow clarity through ownership, versioning, and interface checks reduces rework and late-stage failures.

Physical system modelling breaks down when model intent, data, and interfaces shift as work moves across tools and groups. Interoperability matters because it keeps the meaning of your model stable as it’s edited, exchanged, and verified, so results stay traceable and engineering decisions stay defensible. The cost of getting this wrong is large: a 2004 NIST cost analysis of inadequate interoperability estimated about $15.8 billion per year in avoidable costs for the U.S. capital facilities industry.

Teams often treat interoperability as file conversion, but the bigger risk is semantic drift. Parameters get reinterpreted, units get assumed, signals get renamed, and “the same” subsystem starts behaving like a different one. Strong interoperability practices keep models understandable across toolchains and over time, with fewer surprises during commissioning, lab validation, and design reviews.

“Interoperability turns a model into an asset your whole team can trust.”

Interoperability in physical system modelling means consistent model intent

Interoperability means the model you hand off keeps the same intent when someone else runs it. Intent includes the physical scope, operating point, required fidelity, and stated assumptions. When intent is consistent, a model remains interpretable across toolchains, and results stay comparable across studies.

Start with an explicit model contract that lives with the model, not in someone’s head. That contract states what the model represents, what it omits, and what “correct” looks like in terms of outputs and limits. It also defines sign conventions, reference directions, and initial conditions so downstream users don’t silently reverse meaning. Model intent also needs a clear boundary between physics and control so interface signals stay stable.
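One way to keep that contract next to the model is a small, machine-readable record. The sketch below is a minimal illustration, assuming a hypothetical `ModelContract` type whose field names are our own choice. It records scope, omissions, sign conventions, initial conditions, and output limits, and it reports any run whose outputs fall outside the stated limits.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ModelContract:
    """Hypothetical model contract kept next to the model files."""

    name: str
    represents: str                                # physical scope and intended use
    omits: tuple[str, ...]                         # effects deliberately left out
    sign_conventions: dict[str, str]               # signal -> reference direction
    initial_conditions: dict[str, float]           # state -> initial value (SI)
    output_limits: dict[str, tuple[float, float]]  # output -> (min, max) for "correct"

    def violations(self, outputs: dict[str, float]) -> list[str]:
        """Describe every stated output limit that a run does not satisfy."""
        problems = []
        for signal, (lo, hi) in self.output_limits.items():
            if signal not in outputs:
                problems.append(f"{signal}: missing from outputs")
            elif not lo <= outputs[signal] <= hi:
                problems.append(f"{signal}={outputs[signal]} outside [{lo}, {hi}]")
        return problems


# Example: an averaged motor-drive study model (all values are illustrative).
contract = ModelContract(
    name="motor_drive_study",
    represents="Averaged DC motor and converter, dynamics below 100 Hz",
    omits=("switching ripple", "thermal effects"),
    sign_conventions={"i_arm": "positive into terminal A"},
    initial_conditions={"speed_rad_s": 0.0, "i_arm": 0.0},
    output_limits={"speed_rad_s": (0.0, 350.0), "i_arm": (-20.0, 20.0)},
)
print(contract.violations({"speed_rad_s": 310.0, "i_arm": 25.0}))
# ['i_arm=25.0 outside [-20.0, 20.0]']
```

Keeping the contract as data rather than prose means a reviewer can read it and a script can check against it, which is the point of stating what "correct" looks like.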

Intent discipline reduces debates that waste cycles in reviews, because reviewers can check purpose and assumptions before arguing about waveforms. It also stops well-meaning edits from turning one study model into a different study model under the same file name. When model intent is stable, the remaining interoperability work becomes mechanical rather than interpretive.

Toolchain compatibility reduces rework when models move between teams

Toolchain compatibility matters because most modelling work is collaborative and staged, not done in one tool by one person. When models move cleanly across toolchains, teams spend time improving physics and controls instead of rebuilding blocks, retesting, and revalidating results that already existed in another format.

Compatibility starts with choosing representations that survive exchange, like clear component boundaries, explicit interfaces, and parameter sets that don’t depend on hidden tool defaults. File formats matter, but compatibility also covers solver assumptions, initialization rules, and how events are handled. A model that relies on undocumented default tolerances will behave differently after exchange, even if the topology looks identical.
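A lightweight guard against hidden defaults is to require that every run setting is stated explicitly and to diff the sender’s settings against the receiver’s. This is a minimal sketch; the setting names in `REQUIRED_RUN_SETTINGS` are hypothetical and would follow whatever your tools actually expose.

```python
from __future__ import annotations

# Hypothetical list of run settings an exchange package must state explicitly.
REQUIRED_RUN_SETTINGS = (
    "solver", "rel_tol", "abs_tol", "max_step_s", "stop_time_s", "init_mode",
)


def missing_run_settings(run_config: dict) -> list[str]:
    """Settings left unset, i.e. silently inherited from tool defaults."""
    return [key for key in REQUIRED_RUN_SETTINGS if run_config.get(key) is None]


def config_differences(sender: dict, receiver: dict) -> dict[str, tuple]:
    """Settings whose values differ between the sending and receiving setup."""
    keys = sorted(set(sender) | set(receiver))
    return {
        key: (sender.get(key), receiver.get(key))
        for key in keys
        if sender.get(key) != receiver.get(key)
    }


sent = {"solver": "variable-step", "rel_tol": 1e-6, "abs_tol": 1e-8,
        "max_step_s": 1e-5, "stop_time_s": 0.5, "init_mode": "steady_state"}
received = dict(sent, rel_tol=1e-3)  # receiver's tool applied a looser default
print(missing_run_settings({"solver": "variable-step", "stop_time_s": 0.5}))
# ['rel_tol', 'abs_tol', 'max_step_s', 'init_mode']
print(config_differences(sent, received))
# {'rel_tol': (1e-06, 0.001)}
```

A non-empty diff does not prove the results differ, but it tells the receiving team to rule out numerics before debating physics.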

Tradeoffs are real. The most portable representation can limit access to tool-specific features, while a tool-optimized model can lock you into one workflow. Good teams separate “study models” from “implementation models,” then agree on where fidelity must match and where it can differ, so compatibility work stays focused on the parts that affect results.

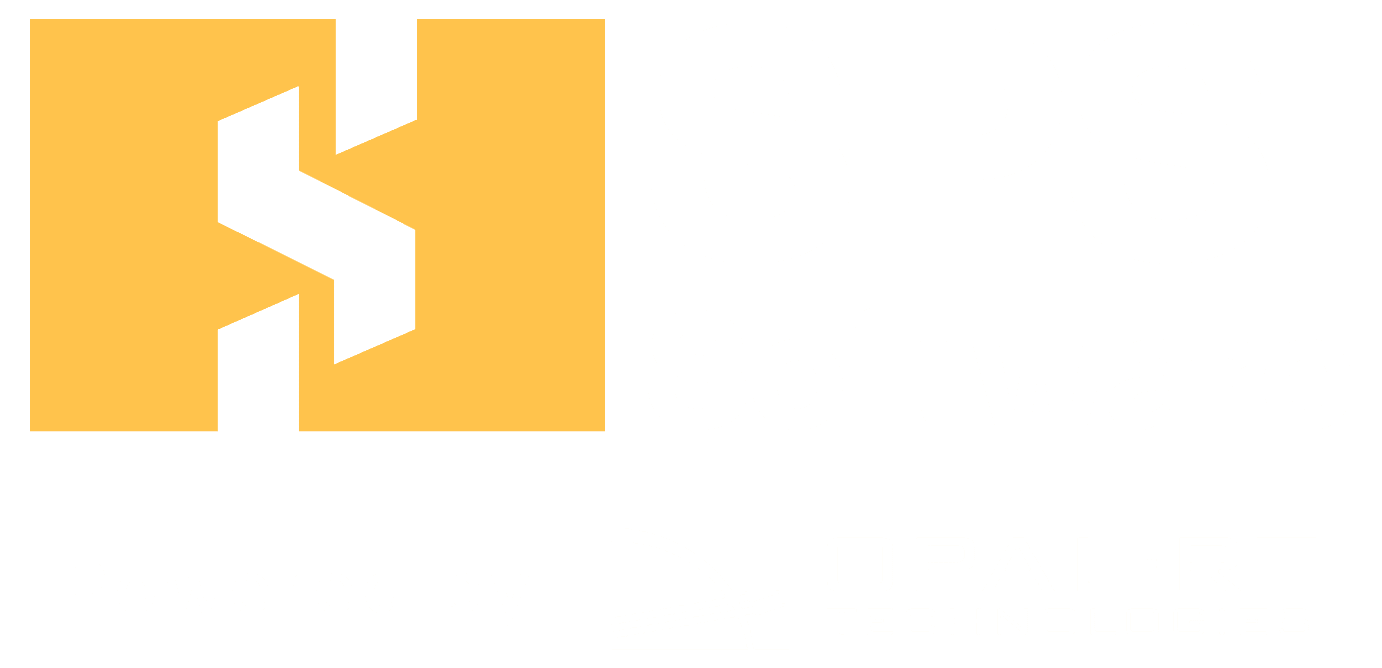

Data alignment keeps parameters, units, and signals consistent everywhere

Data alignment keeps the numbers in your model from changing meaning when they cross a boundary. Units, scaling, naming, and signal definitions need to be consistent across tools, spreadsheets, scripts, and reports. When alignment is weak, teams can get the “right” plots for the wrong reasons, then discover the mismatch late.

A clear illustration is how unit handling can decide outcomes even when the equations are correct. A unit mismatch contributed to the loss of NASA’s $125 million Mars Climate Orbiter in 1999: ground software reported thruster impulse in pound-force seconds, while the navigation software assumed newton-seconds. Modelling teams face the same class of failure when a parameter table uses one base unit set and the simulation assumes another.
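The size of such an error is easy to quantify. Taking impulse as an example, suppose a value reported in pound-force seconds (an imperial unit) is read as if it were in newton-seconds (SI). Here $J_{\mathrm{lbf\cdot s}}$ is the number as reported and $J_{\mathrm{read}}$ is that same number interpreted as newton-seconds:

```latex
1\,\mathrm{lbf} = 0.45359237\,\mathrm{kg} \times 9.80665\,\mathrm{m/s^2} \approx 4.448\,\mathrm{N}
\qquad\Rightarrow\qquad 1\,\mathrm{lbf\cdot s} \approx 4.448\,\mathrm{N\cdot s}

J_{\mathrm{SI}} = 4.448\,J_{\mathrm{lbf\cdot s}},
\qquad
\frac{J_{\mathrm{read}}}{J_{\mathrm{SI}}} = \frac{J_{\mathrm{lbf\cdot s}}}{4.448\,J_{\mathrm{lbf\cdot s}}} \approx 0.225
```

Every value would be understated to about 22 % of its true size, and nothing in the arithmetic would flag it, because the numbers alone carry no unit.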

Alignment improves workflows when you treat data as a product with validation rules. Unit metadata should be attached to parameters and signals, not implied. Names should be stable and descriptive, and scaling should be explicit at interfaces so values don’t get “fixed” with hidden gains. Once data alignment is consistent, debugging shifts from chasing conversions to checking actual system behaviour.
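Attaching units to parameters can be as simple as carrying a unit label with every value and converting only through an explicit table. The sketch below assumes a hypothetical project-level `TO_SI` table and `Param` type; a dedicated units library would do the same job more thoroughly. The key behaviour is that a mismatch raises an error instead of being absorbed by a hidden gain.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical project table: unit label -> (SI unit label, factor to SI).
TO_SI = {
    "N*s": ("N*s", 1.0),
    "lbf*s": ("N*s", 4.4482216152605),  # 1 lbf = 0.45359237 kg * 9.80665 m/s^2
    "H": ("H", 1.0),
    "mH": ("H", 1e-3),
    "ohm": ("ohm", 1.0),
}


@dataclass(frozen=True)
class Param:
    """A parameter that carries its unit instead of implying it."""

    name: str
    value: float
    unit: str

    def to_si(self) -> Param:
        if self.unit not in TO_SI:
            raise ValueError(f"{self.name}: unknown unit '{self.unit}'")
        si_unit, factor = TO_SI[self.unit]
        return Param(self.name, self.value * factor, si_unit)


def read_param(param: Param, expected_si_unit: str) -> float:
    """Value in the SI unit the model expects; fail loudly on a mismatch."""
    converted = param.to_si()
    if converted.unit != expected_si_unit:
        raise ValueError(
            f"{param.name}: supplied in {param.unit}, model expects {expected_si_unit}"
        )
    return converted.value


impulse = Param("thruster_impulse", 2.0, "lbf*s")
print(read_param(impulse, "N*s"))  # explicit conversion, not a hidden gain
```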

System exchange needs common interfaces for models, results, and metadata

System exchange works when you share more than a model file. Teams need a common package that includes the model, its parameter sets, run configuration, and the minimum metadata required to reproduce results. Without that package, exchanges turn into “it runs on my machine” arguments.

Define what gets exchanged at each handoff and keep it consistent. The exchange package should include interface definitions, parameter dictionaries, unit annotations, initialization settings, and a small set of expected outputs used as acceptance checks. Results matter too: a baseline run with logged signals helps the receiving team confirm they’re running the same system, not a lookalike.
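A handoff package can be checked for completeness before anyone runs it. This sketch assumes the package is described by a manifest (for example, loaded from a JSON file with `json.load`) using hypothetical section names that mirror the list above; it flags missing sections and any interface or parameter without a unit annotation.

```python
from __future__ import annotations

# Hypothetical sections every exchange package must contain.
REQUIRED_SECTIONS = (
    "interfaces",        # signal names, units, sign conventions
    "parameters",        # parameter dictionary with unit annotations
    "initialization",    # operating point and initial states
    "run_config",        # solver assumptions and tolerances
    "expected_outputs",  # baseline acceptance signals
)


def check_package(manifest: dict) -> list[str]:
    """List what a receiving team would have to guess about this package."""
    problems = [f"missing section: {s}" for s in REQUIRED_SECTIONS if s not in manifest]
    for section in ("interfaces", "parameters"):
        for name, spec in manifest.get(section, {}).items():
            if "unit" not in spec:
                problems.append(f"{section}.{name}: no unit annotation")
    return problems


manifest = {
    "interfaces": {
        "v_dc": {"unit": "V", "direction": "input", "sign": "positive rail to negative"},
        "i_load": {"direction": "output"},
    },
    "parameters": {"C_dc": {"value": 1e-3, "unit": "F"}},
    "initialization": {"v_dc": 400.0},
    "run_config": {"solver": "variable-step", "rel_tol": 1e-6},
}
print(check_package(manifest))
# ['missing section: expected_outputs', 'interfaces.i_load: no unit annotation']
```

Running a check like this on the sending side turns "it runs on my machine" into a concrete list of gaps to close before the handoff.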

Execution improves when the exchange format matches how people actually review work. SPS SOFTWARE users, for instance, tend to benefit from exchange packages that keep component equations inspectable and parameter values traceable, because reviewers can verify intent without guessing what’s inside a closed block. That same idea applies in any toolchain: shared artefacts should support inspection, reproduction, and controlled change.

| What you standardize for exchange | What stays consistent after a handoff |
|---|---|
| Interface signals with names, units, and sign conventions | Teams interpret inputs and outputs the same way across tools. |
| Parameter sets stored as versioned dictionaries | Runs stay reproducible even after tuning and refactoring. |
| Initialization rules and operating points | Start-up behaviour matches, so early transients remain comparable. |
| Run configuration including solver assumptions and tolerances | Numerical differences don’t get mistaken for physics differences. |
| Baseline results with agreed acceptance signals | Recipients can confirm equivalence before adding new work. |
| Metadata stating scope, omissions, and validity limits | Models don’t get reused outside the conditions they were built for. |

Workflow clarity comes from explicit ownership, versions, and handoffs

Workflow clarity prevents interoperability work from turning into personal knowledge. Clear ownership, versioning rules, and handoff points make it obvious who can change what, when changes are reviewed, and how a model gets promoted from draft to trusted. That clarity is what keeps multi-team modelling from fragmenting.

Make handoffs explicit and lightweight, then treat them as part of engineering practice. Ownership should cover both model structure and data tables, since either can break a study. Version identifiers should link model changes to study outcomes, so a surprising result can be traced back to a specific edit. Handoffs should include a short acceptance check so the receiver confirms equivalence before building on top.

- Assign one owner for interfaces and one owner for parameter data.

- Tag every shared model with a version and a short change note.

- Use a fixed handoff checklist that includes units and sign checks.

- Store baseline run outputs with the model, not in personal folders.

- Require review before interface signals or parameter names change.
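Linking a version to study outcomes can be done by storing, with every shared model and every result, a version tag, a change note, and a content hash of the parameter data. The sketch below is one hypothetical way to do it; the version scheme is illustrative, and truncating the SHA-256 digest to 12 hex characters is a readability choice that makes accidental collisions unlikely within one project but not impossible.

```python
from __future__ import annotations

import hashlib
import json


def content_id(data: dict) -> str:
    """Deterministic short hash of a parameter dictionary (key order ignored)."""
    canonical = json.dumps(data, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def version_record(model_version: str, parameters: dict, change_note: str) -> dict:
    """Record to store with every shared model and every result it produces."""
    if not change_note.strip():
        raise ValueError("a shared version needs a change note")
    return {
        "model_version": model_version,
        "parameters_id": content_id(parameters),
        "change_note": change_note.strip(),
    }


params = {"R_arm": 0.5, "L_arm": 2.5e-3}
before = version_record("1.4.0", params, "Baseline for load-step study")
after = version_record("1.4.1", dict(params, R_arm=0.55), "Updated R_arm from bench test")
print(before["parameters_id"] != after["parameters_id"])  # True: the edit is traceable
```

When a result looks surprising, comparing `parameters_id` values between two runs shows immediately whether the parameter data changed, even if the model version tag did not.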

These rules reduce rework because they shrink the space where silent changes can hide. They also make collaboration safer for students and new engineers, since expectations are written down. Clear workflows won’t remove technical disagreements, but they will keep disagreements focused on engineering rather than archaeology.

Checks that prevent failures when linking physics and control models

Linking physics and control models fails in predictable ways, and a small set of checks prevents most of them. The goal is consistency across domains, not perfect modelling. Interface checks, unit checks, and regression checks catch mismatches early, before teams spend weeks tuning a controller against a miswired plant model.

Start with interface checks that treat every boundary as a contract. Inputs and outputs should have expected ranges, units, and steady-state values under a known operating point. Add regression checks that rerun a small baseline case after any structural change and compare key signals within agreed tolerances. Include numerical sanity checks too, since step size, event handling, and initialization can change stability and damping without any physics change.
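Both kinds of check can start small. The sketch below assumes a hypothetical `SignalSpec` contract per boundary signal and logged results held as plain Python dictionaries; the regression comparison also assumes the baseline and candidate runs were logged on the same fixed time grid, so samples can be compared one to one. The tolerances are placeholders for whatever your team agrees on.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass(frozen=True)
class SignalSpec:
    """Hypothetical interface contract for one boundary signal."""

    unit: str
    lo: float
    hi: float
    steady_state: float  # expected value at the agreed operating point


def interface_problems(final_values, units, specs, rel_tol=0.02):
    """Check logged steady-state values against each boundary contract."""
    problems = []
    for name, spec in specs.items():
        if name not in final_values:
            problems.append(f"{name}: not logged")
            continue
        if units.get(name) != spec.unit:
            problems.append(f"{name}: unit {units.get(name)} != {spec.unit}")
        value = final_values[name]
        if not spec.lo <= value <= spec.hi:
            problems.append(f"{name}: {value} outside [{spec.lo}, {spec.hi}]")
        if not math.isclose(value, spec.steady_state, rel_tol=rel_tol):
            problems.append(f"{name}: steady state {value}, expected {spec.steady_state}")
    return problems


def regression_problems(baseline, candidate, rel_tol=1e-3, abs_tol=1e-6):
    """Compare key signals sample by sample against a stored baseline run.

    Assumes both runs were logged on the same fixed time grid.
    """
    problems = []
    for name, ref in baseline.items():
        new = candidate.get(name)
        if new is None or len(new) != len(ref):
            problems.append(f"{name}: missing or different length")
            continue
        pairs = list(zip(ref, new))
        if not all(math.isclose(a, b, rel_tol=rel_tol, abs_tol=abs_tol) for a, b in pairs):
            worst = max(abs(a - b) for a, b in pairs)
            problems.append(f"{name}: max deviation {worst:.3g}")
    return problems


specs = {"v_dc": SignalSpec("V", 0.0, 450.0, 400.0)}
# A plant that reports kV where the controller expects V fails two checks.
print(interface_problems({"v_dc": 0.4}, {"v_dc": "kV"}, specs))
# ['v_dc: unit kV != V', 'v_dc: steady state 0.4, expected 400.0']
baseline = {"i_arm": [0.0, 1.0, 2.0]}
print(regression_problems(baseline, {"i_arm": [0.0, 1.0, 2.1]}))
# ['i_arm: max deviation 0.1']
```

The interface check catches a miswired or mis-scaled boundary at the operating point; the regression check catches a structural edit that quietly changed behaviour. Numerical sanity checks, such as rerunning with a tighter step size, would sit alongside these.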

“Interoperability is not a separate workstream from model quality; it is model quality.”

Teams that practise disciplined checks get faster agreement, clearer reviews, and fewer late-stage surprises when work leaves the original author’s toolchain. SPS SOFTWARE fits well when you want transparent, inspectable models to support those checks, because inspection reduces guesswork and helps teams converge on shared understanding.