Key Takeaways

- Wrong study scope and wrong model detail create errors long before solver output appears.

- Base quantities, source data, load behaviour, and control limits shape result accuracy more than most teams expect.

- Model trust comes from repeatable checks against known conditions, not from tidy plots or complex schematics.

Most wrong power system simulation results come from setup errors, not math errors.

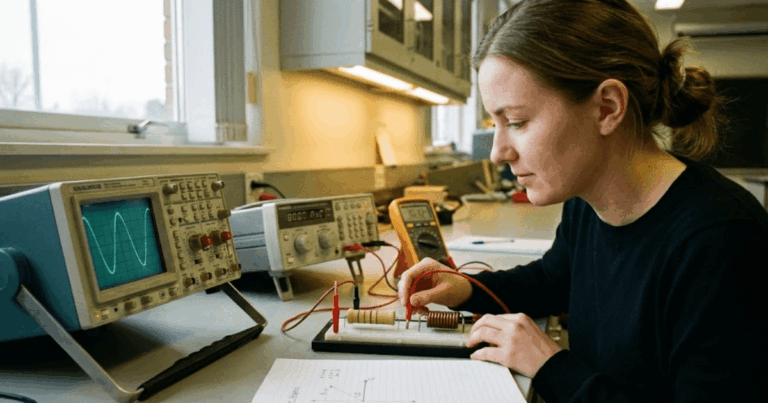

Engineers trust a power system simulator when the model reflects the study question, the data, and the operating limits that shape system behaviour. Trouble starts when a convenient template replaces a verified network model or when a stable waveform hides a bad assumption. You’re usually not dealing with a software failure. You’re dealing with a model that answered a different question than the one you meant to ask.

The 8 mistakes that distort power system simulation results

A power system model loses accuracy when its structure, data, or numerical settings do not match the study objective. Each mistake below creates a specific kind of error, and each one can be checked early before you spend hours trusting results that won’t hold up.

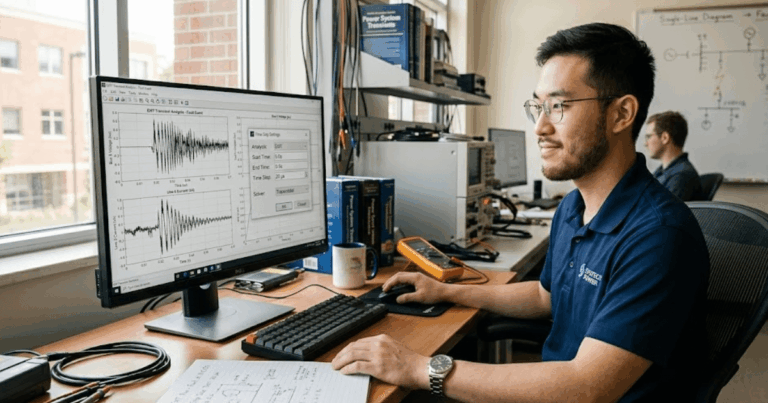

1. Using a study model that does not match the question

A model must match the time scale and physics of the question you’re asking. A steady-state load flow will show bus voltages and line loading, but it won’t tell you how a relay timer responds or how converter current peaks in the first milliseconds of a fault. A common mistake is using an averaged inverter model to judge sub-cycle current stress during a breaker operation. The result will look clean, yet it hides the switching and control detail that actually matters. If the study scope is vague, the model becomes a compromise and your answers lose value.

2. Mixing per unit bases across the network model

Per unit errors quietly distort almost every calculated quantity in a network study. Trouble often starts around transformers, where engineers carry a 100 MVA base through one section and a different base through another without converting impedances. A 13.8 kV to 69 kV transformer is a common place for this slip, because the voltage base shifts and the impedance looks reasonable even when it is not. The model still runs, which makes the mistake easy to miss. Short-circuit levels, voltage drops, and machine currents then look believable while every downstream result is biased.
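The base conversion itself is short enough to check by hand or in a few lines of code. The sketch below uses the standard change-of-base relation, Z_new = Z_old × (S_new / S_old) × (V_old / V_new)². The function name and the 8 % transformer on a 25 MVA rating are illustrative assumptions, not values from any particular study.

```python
def convert_pu_impedance(z_pu_old, s_old_mva, s_new_mva, v_old_kv, v_new_kv):
    """Re-express a per-unit impedance on a new MVA base and kV base."""
    return z_pu_old * (s_new_mva / s_old_mva) * (v_old_kv / v_new_kv) ** 2


# Hypothetical transformer: 8 % impedance on its own 25 MVA rating,
# moved to a 100 MVA study base with the same 13.8 kV voltage base.
z_system = convert_pu_impedance(0.08, 25.0, 100.0, 13.8, 13.8)
print(f"{z_system:.3f} pu on the 100 MVA base")  # 0.320 pu on the 100 MVA base
```

Entering 0.08 pu directly on the 100 MVA base would understate this impedance by a factor of four, and the model would still run without complaint.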

3. Reusing default load models without checking behaviour

Default load blocks are useful for setup speed, but they often hide the wrong electrical behaviour. A constant power load can be acceptable for a planning snapshot, yet it will misrepresent voltage recovery if the actual site has induction motors, heating loads, or mixed feeder demand. A motor-heavy industrial bus will pull current very differently after a sag than a static constant power block suggests. That difference affects fault recovery, motor stalling, and protection pickup. If you don’t check how the load model reacts to voltage and frequency changes, the study will tell a neat story about a system that doesn’t exist.
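A quick way to see how much the load assumption matters is to compare the current drawn by different static load types at a sagged voltage. The sketch below uses the common ZIP (constant impedance, constant current, constant power) representation at unity power factor. The function name and the 0.8 pu sag are illustrative assumptions, and a static model like this does not capture motor dynamics such as stalling.

```python
def zip_load_current_pu(v_pu, p0_pu, z_frac, i_frac, p_frac):
    """Current magnitude (pu) drawn by a unity-power-factor ZIP load at v_pu."""
    if abs(z_frac + i_frac + p_frac - 1.0) > 1e-9:
        raise ValueError("ZIP fractions must sum to 1")
    # Active power scales with voltage according to the Z, I, and P shares.
    p_pu = p0_pu * (z_frac * v_pu**2 + i_frac * v_pu + p_frac)
    return p_pu / v_pu


sag_pu = 0.8  # assumed retained voltage during the sag
const_power = zip_load_current_pu(sag_pu, 1.0, 0.0, 0.0, 1.0)
const_impedance = zip_load_current_pu(sag_pu, 1.0, 1.0, 0.0, 0.0)
print(f"constant power: {const_power:.2f} pu, constant impedance: {const_impedance:.2f} pu")
# constant power: 1.25 pu, constant impedance: 0.80 pu
```

The same 1.0 pu load draws more current during the sag as a constant power block and less as a constant impedance block, which is enough to change a protection pickup decision.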

4. Estimating source strength without verified grid data

Source strength shapes fault current, voltage stiffness, and control interaction, so guessed values will corrupt the whole model. Engineers often plug in a short-circuit level from memory or reuse data from a nearby substation and assume the upstream grid is close enough. A weak connection point for a wind plant, for instance, will behave very differently from a strong urban feeder with the same nominal voltage. Converter stability, flicker response, and fault current all shift when the Thevenin equivalent is wrong. If you haven’t verified source impedance and X/R ratio, you haven’t verified the study.
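As a rough illustration of how sensitive the source equivalent is to the assumed fault level, the sketch below derives a Thevenin R and X from a three-phase short-circuit level and an X/R ratio, using |Z| = V_LL² / S_sc. The function name, the 69 kV bus, and both fault levels are hypothetical values chosen only to show the spread.

```python
import math


def thevenin_from_fault_level(v_ll_kv, s_sc_mva, x_over_r):
    """Return (R, X) in ohms of the source equivalent seen at the bus."""
    z_ohm = v_ll_kv**2 / s_sc_mva                  # kV^2 / MVA gives ohms
    r_ohm = z_ohm / math.sqrt(1.0 + x_over_r**2)   # split |Z| using X/R
    return r_ohm, r_ohm * x_over_r


# Hypothetical comparison: the same 69 kV bus with two assumed fault levels.
for s_sc in (500.0, 1500.0):
    r, x = thevenin_from_fault_level(69.0, s_sc, 10.0)
    print(f"S_sc = {s_sc:.0f} MVA -> R = {r:.3f} ohm, X = {x:.3f} ohm")
```

Tripling the assumed fault level cuts the source impedance to a third, which is why a value borrowed from a nearby substation deserves a check against utility data.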

5. Picking a solver step that misses fast events

Numerical settings matter as much as network data when the study includes fast transients. A solver step that works for a slow voltage profile won’t capture capacitor energization, converter commutation, or a breaker restrike. If the time step smooths away the spike or oscillation you set out to inspect, you will miss it. Warning signs are current peaks that look modest and switching waveforms that appear unusually clean. The system is not calm in that case. The solver is simply not resolving behaviour that occurs between time steps, and your protection or insulation assessment will be wrong.
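One simple sanity check is to estimate the fastest oscillation you expect and compare the solver step against it. The sketch below does this for a capacitor energization, using the LC resonant frequency f = 1 / (2π√(LC)). The function names, the 1 mH and 100 µF values, and the 20 samples per cycle criterion are all assumptions for illustration; the right margin depends on the solver and the study.

```python
import math


def lc_resonant_frequency_hz(l_henry, c_farad):
    """Natural frequency of a series LC loop."""
    return 1.0 / (2.0 * math.pi * math.sqrt(l_henry * c_farad))


def max_time_step_s(fastest_hz, samples_per_cycle=20):
    """Largest step that still gives the assumed number of samples per cycle."""
    return 1.0 / (samples_per_cycle * fastest_hz)


def step_resolves(dt_s, fastest_hz, samples_per_cycle=20):
    """True if dt_s meets the assumed samples-per-cycle criterion."""
    return dt_s <= max_time_step_s(fastest_hz, samples_per_cycle)


# Hypothetical capacitor bank: 100 uF energized through 1 mH of source inductance.
f_ring = lc_resonant_frequency_hz(1e-3, 100e-6)
for dt in (1e-3, 50e-6):
    verdict = "ok" if step_resolves(dt, f_ring) else "too coarse"
    print(f"dt = {dt * 1e6:.0f} us against {f_ring:.0f} Hz ringing: {verdict}")
```

Under these assumptions a 1 ms step gives about two samples per ringing cycle, so the inrush peak would be badly under-resolved, while a 50 µs step comfortably meets the criterion.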

6. Starting dynamic studies from an invalid operating point

Dynamic results are only credible when the starting point is physically consistent. A common error is entering generator dispatch, tap positions, or control references manually so that the model begins from a state that could never exist in normal operation. A synchronous machine might start with an exciter output beyond its limit or with terminal voltage that doesn’t match the solved network condition. Once the disturbance is applied, you can’t tell which oscillation came from the event and which came from the bad initialization. The waveform looks busy, but it reflects startup correction rather than system response.
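The two machine checks described above can be written as a short pre-run test. This is a minimal sketch under stated assumptions: the function name, the per-unit quantities, and the 0.001 pu tolerance are hypothetical, and a real study would cover every machine and controller state rather than two values.

```python
def initialization_issues(efd0_pu, efd_min_pu, efd_max_pu,
                          vt_init_pu, vt_load_flow_pu, tol_pu=1e-3):
    """Return a list of consistency problems found before the dynamic run."""
    issues = []
    # The exciter must start inside its own output limits.
    if not efd_min_pu <= efd0_pu <= efd_max_pu:
        issues.append("exciter output starts outside its limits")
    # The machine terminal voltage must agree with the solved load flow.
    if abs(vt_init_pu - vt_load_flow_pu) > tol_pu:
        issues.append("terminal voltage does not match the solved network")
    return issues


# Hypothetical machine with manually entered initial values.
for problem in initialization_issues(6.2, 0.0, 5.0, 1.00, 1.02):
    print("Initialization problem:", problem)
```

Another useful habit is to run the model with no disturbance at all: if the traces are not flat, the starting point is not a steady state.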

7. Leaving control limits outside the simulation model

Control systems need their limits inside the model or the results will overstate stability and recovery. Engineers sometimes model the main controller and skip current clamps, saturation, deadbands, rate limits, or protection interlocks because the core loop seems more important. A grid-forming inverter, for example, will appear to ride through a voltage dip with ease if its current ceiling is missing. The same happens with exciters and governors when minimum and maximum outputs are left out. The controller then produces elegant responses that no physical device can sustain. If a control action looks perfect, check the limits first, because a missing limit is often the reason.
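The effect of a missing current ceiling can be shown with simple arithmetic. The sketch below compares the current an inverter would need to hold its power reference during a dip with what it can deliver once a clamp is applied. The function name, the 0.5 pu dip, and the 1.2 pu ceiling are illustrative assumptions, and the model ignores reactive current and control dynamics.

```python
def inverter_response(p_ref_pu, v_pu, i_max_pu=None):
    """Return (current, delivered power) in pu, with an optional current clamp."""
    i_demand = p_ref_pu / v_pu          # current needed to hold the power reference
    i_out = i_demand if i_max_pu is None else min(i_demand, i_max_pu)
    return i_out, v_pu * i_out


# Hypothetical dip to 0.5 pu with the inverter asked to hold 1.0 pu power.
i_free, p_free = inverter_response(1.0, 0.5)
i_lim, p_lim = inverter_response(1.0, 0.5, i_max_pu=1.2)
print(f"no limit:   I = {i_free:.2f} pu, P = {p_free:.2f} pu")  # I = 2.00 pu, P = 1.00 pu
print(f"with limit: I = {i_lim:.2f} pu, P = {p_lim:.2f} pu")    # I = 1.20 pu, P = 0.60 pu
```

Without the clamp the model happily supplies twice rated current, which is the kind of elegant response no physical device can sustain.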

8. Trusting results before any independent model check

A model should earn trust through simple checks before it is used for deeper studies. Engineers skip this step when the one-line diagram is complete and the waveforms look tidy, but appearance is a poor test. A feeder model should reproduce known voltages, losses, and fault levels before you use it for contingency work. A transparent workflow matters here, and SPS SOFTWARE is useful in that context because you can inspect assumptions, parameters, and equations instead of treating the power system simulator as a sealed box. If the base case fails a basic check, every later scenario will carry the same error.
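A base-case check can be as simple as comparing a few simulated quantities against known values with a tolerance for each. The sketch below shows one way to do that. The quantity names, reference values, and tolerances are hypothetical placeholders; in practice they would come from measurements, utility data, or an accepted prior study.

```python
def base_case_failures(simulated, reference, tolerance_pct):
    """Return (name, error %) for every quantity outside its tolerance."""
    failures = []
    for name, ref in reference.items():
        error_pct = 100.0 * abs(simulated[name] - ref) / abs(ref)
        if error_pct > tolerance_pct[name]:
            failures.append((name, round(error_pct, 2)))
    return failures


# Hypothetical feeder: known values against what the model produced.
reference = {"bus_voltage_pu": 1.02, "feeder_loss_kw": 85.0, "fault_level_mva": 500.0}
simulated = {"bus_voltage_pu": 1.018, "feeder_loss_kw": 86.0, "fault_level_mva": 560.0}
tolerance_pct = {"bus_voltage_pu": 1.0, "feeder_loss_kw": 5.0, "fault_level_mva": 5.0}

failures = base_case_failures(simulated, reference, tolerance_pct)
print(failures)  # [('fault_level_mva', 12.0)]
```

Here the voltages and losses pass while the fault level is 12 % off, which points at the source equivalent before any contingency case has been run.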

| Model issue | What the result is really telling you |
|---|---|
| 1. Using a study model that does not match the question | The output reflects the wrong time scale or device detail, so the answer does not fit the study goal. |
| 2. Mixing per unit bases across the network model | Reasonable-looking values can still be wrong when base conversions are inconsistent across voltage levels. |
| 3. Reusing default load models without checking behaviour | Static defaults can hide how actual site loads react during sags, recovery, and frequency shifts. |
| 4. Estimating source strength without verified grid data | Guessed grid impedance shifts fault current and voltage stiffness enough to distort the whole study. |
| 5. Picking a solver step that misses fast events | Clean plots can come from numerical smoothing rather than from a physically quiet system response. |
| 6. Starting dynamic studies from an invalid operating point | Early oscillations often come from bad initialization rather than from the event you intended to test. |
| 7. Leaving control limits outside the simulation model | Controllers look stronger than they are when current, voltage, and rate limits are missing. |
| 8. Trusting results before any independent model check | Base-case checks catch bad assumptions long before scenario studies make them harder to spot. |

How to check model credibility before you trust results

A credible model reproduces known operating conditions, respects device limits, and gives stable answers under simple cross-checks. You should be able to explain every major assumption in plain language. If you can’t trace a result back to verified data and model structure, more detail won’t rescue it.

- Match the model type to the study time scale.

- Recheck every base quantity across transformers.

- Compare load response against site knowledge.

- Validate source impedance with utility data.

- Confirm the base case before any disturbance study.

That review habit is what separates a useful engineering model from a polished diagram. Teams that keep assumptions visible, test simple cases first, and question clean-looking waveforms will catch more errors before they become report material. SPS SOFTWARE fits that practice when you need open, physics-based models that you can inspect and revise with care. Good modelling isn’t about making the power system simulator look busy. It’s about making every result stand up to scrutiny.