Key Takeaways

- Research validity improves when model claims stay tied to measurable physics, so results remain stable across operating points and test conditions.

- Model credibility grows when equations, parameters, units, and assumptions are transparent enough for peers to audit and reproduce without guesswork.

- Academic confidence comes from disciplined verification, calibration, and validation, plus a deliberate choice of fidelity that matches the study’s claim.

Research validity lives or dies on one simple question: can someone else follow your assumptions and get the same system behaviour when they test it? A 2016 survey found that more than 70% of researchers had tried and failed to reproduce another scientist’s experiments. That gap is rarely about effort alone. It often comes from models that hide assumptions, blur units, or rely on tuning that cannot be justified outside one dataset.

Physical modelling fixes that failure mode because it forces every claim to pass through conservation laws, component limits, and measurement definitions. You still need calibration and good data, but the model starts from constraints you can explain and audit. When you can point to the equation, the parameter source, and the test that anchors each behaviour, confidence stops being a feeling and becomes a traceable argument.

“Physical modelling improves research validity because your model’s claims stay tied to measurable physics.”

Physical modelling ties assumptions to measurable system physics

Physical modelling improves research validity when your assumptions are expressed as quantities you can measure, check, and reason about. Equations connect inputs to outputs through conservation of energy, charge, and momentum, plus component laws. Units must balance. Boundary conditions must be declared. Those constraints make silent guesswork harder to hide.
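As a rough illustration of "units must balance", the sketch below tracks SI base-unit exponents and checks that every term of a series RL branch equation, v = R*i + L*di/dt, carries the dimension of volts. The `Dim` class and the `terms_balance` helper are hypothetical names introduced for this example; a real project would more likely rely on an established units library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dim:
    """Exponents of the SI base units kilogram, metre, second and ampere."""
    kg: int = 0
    m: int = 0
    s: int = 0
    A: int = 0

    def __mul__(self, other):
        return Dim(self.kg + other.kg, self.m + other.m,
                   self.s + other.s, self.A + other.A)

    def __truediv__(self, other):
        return Dim(self.kg - other.kg, self.m - other.m,
                   self.s - other.s, self.A - other.A)


SECOND = Dim(s=1)
AMPERE = Dim(A=1)
VOLT = Dim(kg=1, m=2, s=-3, A=-1)   # V = kg*m^2 / (s^3 * A)
OHM = VOLT / AMPERE                 # ohm = V / A
HENRY = VOLT * SECOND / AMPERE      # H = V*s / A


def terms_balance(*terms):
    """True when every term carries the same dimension."""
    return all(term == terms[0] for term in terms)


# Series RL branch: v = R*i + L*di/dt
balanced = terms_balance(VOLT, OHM * AMPERE, HENRY * (AMPERE / SECOND))

# A common slip: writing L*i where L*di/dt was intended
unbalanced = terms_balance(VOLT, OHM * AMPERE, HENRY * AMPERE)

print(balanced, unbalanced)  # True False
```

The second check fails because L*i has the dimension of volt-seconds, which is the kind of error that can still produce output that "looks reasonable" on a plot.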

That constraint matters because it limits the number of ways a model can be “right for the wrong reason.” A curve-fit can match a plot while misunderstanding what causes the response. A physics-based model must represent the mechanism that produces the response, so later changes in operating point, topology, or control logic still follow the same rules. You get clearer limits on where the model is valid, not just a nicer match on one case.

Physical modelling also improves communication across roles. You can hand a model to a lab team, a reviewer, or a new student and talk in the shared language of parameters, tolerances, and test conditions. That lowers friction during peer review because the model becomes inspectable, not mysterious. It also makes gaps obvious, which is exactly what research credibility needs.

Research validity improves when model behaviour matches test evidence

Model credibility rises when simulated behaviour matches test evidence under clearly stated conditions. The match must cover the behaviours that matter to your claim, not only steady-state averages. Transients, saturation, switching effects, and control limits need attention when they affect outcomes. Validity improves when you can show how the same assumptions predict multiple measurements.

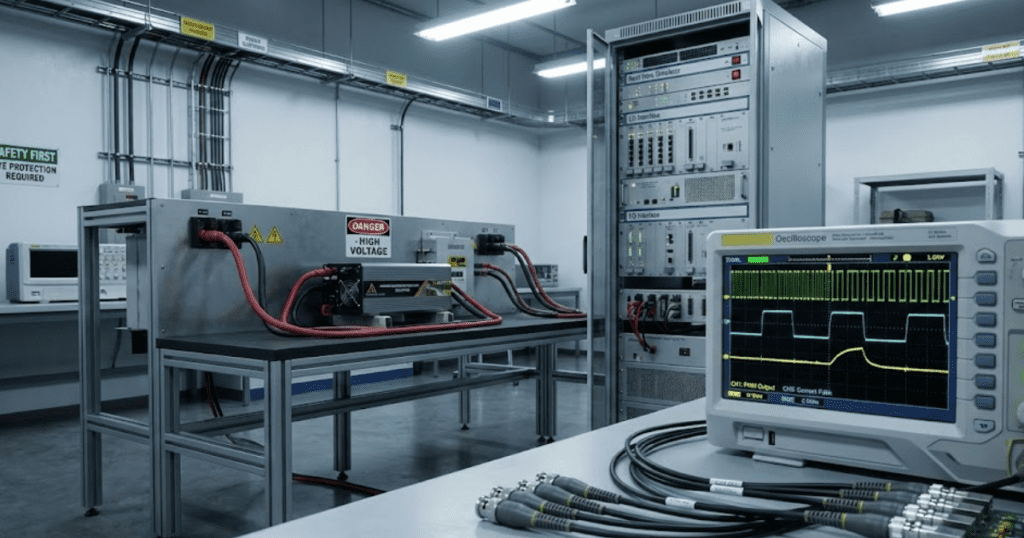

A concrete workflow looks like this: you build a physics-based model of a grid-tied inverter and its filter, then run the same load-step and setpoint-change sequences you run on a bench setup. Measured waveforms and simulated waveforms get compared using agreed metrics such as rise time, overshoot, and harmonic content, with the measurement bandwidth and sampling made explicit. When discrepancies appear, you adjust only parameters that have a physical meaning and a traceable basis.
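The comparison step in that workflow might look like the following sketch. It computes two of the metrics named above, overshoot and 10 to 90% rise time, for a simulated trace and for a stand-in "bench" trace, then checks agreement within a stated tolerance. Both traces here are synthetic second-order step responses, not measurements, and the function names, damping ratios, and the 15% tolerance are assumptions chosen only for illustration.

```python
import math


def second_order_step(t, zeta, wn):
    """Unit-step response of an underdamped second-order system (0 < zeta < 1)."""
    root = math.sqrt(1.0 - zeta ** 2)
    wd = wn * root
    phi = math.acos(zeta)
    return 1.0 - math.exp(-zeta * wn * t) * math.sin(wd * t + phi) / root


def overshoot_percent(samples, final_value):
    """Peak excursion above the final value, as a percentage of it."""
    return max(0.0, (max(samples) - final_value) / final_value * 100.0)


def rise_time(times, samples, final_value, lo=0.1, hi=0.9):
    """Time between the first crossings of lo and hi times the final value."""
    t_lo = next(t for t, y in zip(times, samples) if y >= lo * final_value)
    t_hi = next(t for t, y in zip(times, samples) if y >= hi * final_value)
    return t_hi - t_lo


def metrics(times, samples, final_value=1.0):
    return {
        "overshoot_pct": overshoot_percent(samples, final_value),
        "rise_time_s": rise_time(times, samples, final_value),
    }


def agree(sim, meas, rel_tol):
    """True when every metric is within rel_tol of the measured value."""
    return all(abs(sim[k] - meas[k]) <= rel_tol * abs(meas[k]) for k in meas)


DT = 1e-4  # sampling interval in seconds, stated explicitly
times = [k * DT for k in range(2000)]
simulated = [second_order_step(t, zeta=0.50, wn=100.0) for t in times]
bench_stand_in = [second_order_step(t, zeta=0.52, wn=100.0) for t in times]  # synthetic

sim_metrics = metrics(times, simulated)
meas_metrics = metrics(times, bench_stand_in)
print(sim_metrics, meas_metrics, agree(sim_metrics, meas_metrics, rel_tol=0.15))
```

Fixing the metric definitions and the tolerance before looking at the plots is the point of the design: the comparison becomes a pass or fail statement rather than a visual impression.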

This approach protects you from accidental confirmation. If a tweak improves one plot but breaks another, that failure is useful information about missing physics or wrong assumptions. The payoff is practical: reviewers see that the model is not merely tuned to pass one test but structured to explain why the behaviour occurs. That is the link between system behaviour accuracy and research validity.

Model clarity builds academic confidence through transparent equations and parameters

Model clarity supports research credibility when every equation, parameter, and default is visible and easy to trace. Clarity means you can explain where each number comes from, what it represents physically, and how sensitive results are to it.

“Academic confidence follows because peers can audit your reasoning instead of trusting a black box.”

Clarity usually fails in small ways that add up. Hidden initial conditions, unnamed gains, and mixed units create “ghost tuning” that cannot be defended. A clear model uses consistent units, explicit reference frames, and readable blocks or code. Parameter sets stay separate from equations so a reviewer can see what is fundamental and what is specific to one setup.
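One possible way to keep parameter sets separate from equations is sketched below. Each number carries its unit and its source, and the equation is a plain function that knows nothing about a particular setup. The `Param` class, the `BENCH_SETUP` values, and their sources are hypothetical and exist only to show the structure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Param:
    value: float
    unit: str
    source: str  # where the number came from


# Setup-specific numbers live here, apart from the equations.
BENCH_SETUP = {
    "L": Param(2.0e-3, "H", "hypothetical: LCR meter reading at 1 kHz"),
    "R": Param(0.5, "ohm", "hypothetical: four-wire measurement at 25 C"),
}


def time_constant_s(params):
    """Fundamental relation for a series RL branch: tau = L / R."""
    return params["L"].value / params["R"].value


def undocumented(params):
    """Names of parameters that lack a unit or a source."""
    return [name for name, p in params.items()
            if not p.unit.strip() or not p.source.strip()]


tau = time_constant_s(BENCH_SETUP)
missing = undocumented(BENCH_SETUP)
print(tau, missing)
```

A reviewer can read `time_constant_s` to see what is fundamental and read `BENCH_SETUP` to see what belongs to one bench, and a check like `undocumented` can run automatically before results are reported.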

Execution also matters. Platforms that keep component equations open and editable make it easier to document what you changed and why, which helps reproducibility when projects move across teams. SPS SOFTWARE supports this style of work through transparent component models you can inspect and adjust, which pushes modelling conversations back toward physics and away from unexplained magic numbers.

| What reviewers can check quickly | What it does for research validity |
| --- | --- |
| Units and reference frames stay consistent end to end | Reduces hidden scaling errors that can mimic “good” results |
| Each parameter has a source and physical meaning | Makes tuning defensible and transferable across test setups |
| Assumptions and boundary conditions are written explicitly | Shows where results apply and where claims stop applying |
| Defaults and initial conditions are visible and justified | Prevents accidental bias from undocumented starting states |
| Sensitivity checks identify which parameters matter most | Focuses validation effort on the levers that change outcomes |
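The sensitivity check in the last row can be as simple as a one-at-a-time perturbation. The sketch below perturbs each parameter of an ideal LC filter by 1% and reports the normalised sensitivity of the resonant frequency. The parameter values and function names are hypothetical, and one-at-a-time perturbation is only one of several sensitivity methods; it ignores interactions between parameters.

```python
import math


def lc_resonance_hz(p):
    """Resonant frequency of an ideal LC filter: f = 1 / (2*pi*sqrt(L*C))."""
    return 1.0 / (2.0 * math.pi * math.sqrt(p["L"] * p["C"]))


def normalised_sensitivity(model, params, rel_step=0.01):
    """Percent change in output per percent change in each parameter, one at a time."""
    base = model(params)
    result = {}
    for name, value in params.items():
        perturbed = dict(params, **{name: value * (1.0 + rel_step)})
        result[name] = ((model(perturbed) - base) / base) / rel_step
    return result


nominal = {"L": 2.0e-3, "C": 10.0e-6}  # hypothetical values in H and F
sensitivity = normalised_sensitivity(lc_resonance_hz, nominal)
print(sensitivity)
```

Both values come out close to -0.5, as expected from the square root in the formula: a 1% error in either L or C shifts the resonance by roughly half a percent.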

Calibration and verification methods that raise model credibility

Model credibility improves when you separate verification from calibration and treat both as disciplined steps. Verification checks that equations are implemented correctly and numerics behave. Calibration adjusts physically meaningful parameters to match measurements. Validation then tests predictions on cases not used for calibration, which is where research validity becomes defensible.
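The split between calibration and validation can be sketched with a deliberately small case: fit one physically meaningful parameter, a resistance in v = R*i, on one set of points, then report the error on points that were held out. The (current, voltage) pairs below are synthetic stand-ins for bench data, and the function names are hypothetical.

```python
def fit_resistance(points):
    """Least-squares fit of R in v = R*i, constrained through the origin."""
    numerator = sum(v * i for i, v in points)
    denominator = sum(i * i for i, _ in points)
    return numerator / denominator


def rms_error(points, resistance):
    """Root-mean-square voltage error of the fitted model on a set of points."""
    squared = [(v - resistance * i) ** 2 for i, v in points]
    return (sum(squared) / len(squared)) ** 0.5


# Synthetic (current in A, voltage in V) pairs, for illustration only
calibration_set = [(1.0, 2.05), (2.0, 3.96), (3.0, 6.03)]
validation_set = [(1.5, 3.02), (2.5, 4.97)]  # never used for fitting

r_fit = fit_resistance(calibration_set)
validation_rms = rms_error(validation_set, r_fit)
print(r_fit, validation_rms)
```

The number that supports a claim is `validation_rms`, not the fit quality on the calibration set, because only the held-out points test a prediction.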

Replication work shows why this discipline matters. A large replication effort reported that only 36% of replication attempts produced statistically significant results consistent with the originals. Physical modelling does not remove that risk on its own, but it reduces the surface area for untracked tuning because calibration can be constrained to parameters you can justify and measure.

- Run verification tests that target conservation laws and limiting cases

- Lock solver settings and document step sizes and tolerances

- Calibrate only parameters with a physical interpretation and a traceable basis

- Validate against measurements not used during calibration

- Report uncertainty from sensors, sampling, and parameter tolerances
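The first two steps in the list above can be sketched for a series RL circuit. The solver settings are recorded in one place, the simulation is checked against a limiting case (the current settles at v / r), and an energy balance tests conservation. The component values, the forward Euler method, and the tolerances in the closing note are assumptions for illustration, not recommended settings.

```python
SOLVER = {"method": "forward Euler", "dt_s": 1e-6, "t_end_s": 0.05}  # documented settings
V_SRC, RESISTANCE, INDUCTANCE = 10.0, 2.0, 0.01  # hypothetical values in V, ohm, H


def simulate_rl(v, r, ind, dt, t_end):
    """Integrate ind*di/dt = v - r*i from i = 0 and track the energy terms."""
    i = 0.0
    e_source = 0.0    # energy delivered by the source, J
    e_resistor = 0.0  # energy dissipated in the resistor, J
    for _ in range(round(t_end / dt)):
        e_source += v * i * dt
        e_resistor += r * i * i * dt
        i += dt * (v - r * i) / ind
    e_inductor = 0.5 * ind * i * i  # energy stored at the end, J
    return i, e_source, e_resistor, e_inductor


i_end, e_src, e_res, e_ind = simulate_rl(
    V_SRC, RESISTANCE, INDUCTANCE, SOLVER["dt_s"], SOLVER["t_end_s"]
)

# Limiting case: after ten time constants the current approaches v / r.
steady_state_error = abs(i_end - V_SRC / RESISTANCE) / (V_SRC / RESISTANCE)

# Conservation: source energy should equal dissipated plus stored energy.
energy_residual = abs(e_src - e_res - e_ind) / e_src

print(steady_state_error, energy_residual)
```

With these settings both residuals are small but not zero, which is expected from a fixed-step method. Recording thresholds such as 1e-3 and 1e-4 alongside the solver settings turns the checks into a regression test that can be rerun whenever the model changes.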

These steps also make your work easier to defend during review. Questions shift from “why should we trust your model” to “which assumptions control the result,” which is a better scientific conversation. It also helps your team maintain the model over time because changes can be tested against a known set of checks.

Common failure modes that reduce system behaviour accuracy

System behaviour accuracy drops when modelling shortcuts hide the true mechanism or when numerics distort the response. The most common failure is mixing physical modelling with unconstrained tuning until the model matches one plot but loses meaning. Another failure is leaving solver and initialization choices undocumented, which makes results fragile and hard to reproduce.

Parameter misuse is another quiet issue. A resistance or inductance pulled from a datasheet can be valid only for a specific frequency or temperature, and a controller gain can depend on sampling and delays that are not represented. Unit errors also persist longer than teams expect because the output still “looks reasonable.” Physical modelling helps, but only if you treat unit checks and boundary conditions as non-negotiable.
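One way to make a parameter's validity range hard to ignore is to store the range with the number and refuse lookups outside it. The sketch below does this for frequency only; the `RatedValue` class and the inductance figures are hypothetical, and a fuller version would cover temperature and other conditions in the same way.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RatedValue:
    """A datasheet-style number with the frequency range it was specified for."""
    value: float
    unit: str
    f_min_hz: float
    f_max_hz: float

    def at(self, freq_hz):
        """Return the value, or refuse if freq_hz lies outside the rated range."""
        if not self.f_min_hz <= freq_hz <= self.f_max_hz:
            raise ValueError(
                f"{self.value} {self.unit} is rated for "
                f"{self.f_min_hz}-{self.f_max_hz} Hz, not {freq_hz} Hz"
            )
        return self.value


# Hypothetical inductance, specified between 100 Hz and 10 kHz
filter_inductance = RatedValue(2.0e-3, "H", f_min_hz=100.0, f_max_hz=10_000.0)

in_range = filter_inductance.at(1_000.0)

try:
    filter_inductance.at(20_000.0)
    out_of_range_rejected = False
except ValueError:
    out_of_range_rejected = True

print(in_range, out_of_range_rejected)
```

Raising an error is a deliberately strict choice. A softer variant could log a warning instead, but a hard failure keeps an out-of-range value from slipping into a result unnoticed.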

Measurement mismatch can also look like a modelling error. If the sensor bandwidth, filtering, or timestamp alignment differs between test and simulation, you will chase the wrong parameter. Credible research work treats the measurement chain as part of the comparison, not a footnote. That mindset keeps your calibration honest and your conclusions tighter.
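Treating the measurement chain as part of the comparison can mean passing the simulated signal through a model of the sensor before comparing it with the recorded one. The sketch below uses a discrete first-order low-pass filter as a stand-in for a band-limited sensor. The 1 kHz cutoff, the step size, and the first-order shape are assumptions; a real sensor may need a different model.

```python
import math


def low_pass(samples, dt, cutoff_hz, initial=0.0):
    """Discrete first-order low-pass filter standing in for a sensor's bandwidth."""
    tau = 1.0 / (2.0 * math.pi * cutoff_hz)
    alpha = dt / (tau + dt)
    y = initial
    out = []
    for x in samples:
        y += alpha * (x - y)
        out.append(y)
    return out


DT = 1e-6                # simulation step in s (assumed)
SENSOR_CUTOFF_HZ = 1e3   # hypothetical sensor bandwidth

raw_step = [1.0] * 1000  # ideal simulated step
as_sensed = low_pass(raw_step, DT, SENSOR_CUTOFF_HZ)

tau = 1.0 / (2.0 * math.pi * SENSOR_CUTOFF_HZ)
index_at_tau = round(tau / DT)
print(raw_step[index_at_tau], as_sensed[index_at_tau])
```

One time constant after the step, the ideal signal is already at 1.0 while the sensed signal has reached only about 63% of it. Comparing the raw simulation against a bench trace would show that gap as a model error and invite tuning of the wrong parameter.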

How to choose fidelity and scope for credible studies

Credible studies pick a model fidelity that matches the claim you want to support, then prove that fidelity is sufficient with targeted checks. Fidelity is not a virtue on its own. A model that is too simple will miss limiting effects, but a model that is too detailed will hide assumptions, inflate tuning effort, and make verification harder.

Start with the output you need to trust, then work backward to the physics that controls it. If the claim depends on a transient limit, represent the dynamics that set that limit and keep other parts as simple as possible. If the claim depends on losses or thermal margins, focus detail where dissipation is computed and measured. This scope discipline also protects timelines, because you spend effort where it affects validity rather than spreading it across every component.

Academic confidence grows when you can say, plainly, “this model is detailed here because it changes the answer, and simplified here because it does not.” Tools that keep models transparent and editable support that discipline, and SPS SOFTWARE fits best when you want physics-based clarity without hiding equations behind closed blocks. The strongest research credibility comes from that habit of disciplined modelling, careful testing, and honest limits.