Key Takeaways

- Coupled electrical loss and thermal path modelling will expose peak junction temperature and device stress that average efficiency numbers hide.

- Switch loss modelling becomes reliable when it uses operating-condition inputs and feeds a calibrated RC thermal network with explicit cooling boundaries and derating limits.

- Validation against measurable temperatures and careful handling of temperature-dependent parameters will prevent optimistic results and support defensible thermal margins.

Loss estimates that ignore temperature rise will understate device stress, hide thermal derating limits, and push designs into avoidable failure modes. A simple reliability heuristic shows why engineers can’t treat temperature as a secondary detail: a Q10 value of 2 means a process rate doubles for every 10°C rise. Loss and junction temperature compound in a similar way, because higher temperature raises loss and higher loss raises temperature.

“Accurate power electronics models must treat heat and switching as coupled effects.”

Good modelling does not mean maximum complexity. It means choosing loss and thermal detail that matches the decisions you need to make, then keeping the model consistent from electrical waveforms through to junction temperature. When you connect those layers cleanly, you can size cooling, set safe operating limits, and justify stress margins with numbers you can defend.

Start with loss and thermal paths you must model

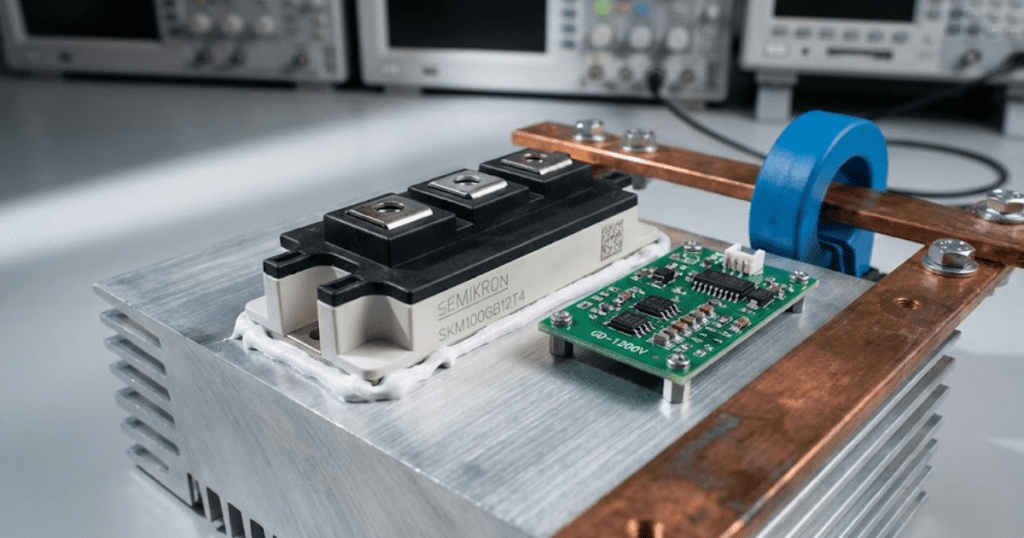

Start by mapping where power turns into heat and how that heat leaves the device. You need a loss model that produces watts under the same conditions your converter will see, plus a thermal path model that turns watts into junction temperature. If either side is missing, the model will look stable while the hardware runs hot. The best starting point is a power balance you can check at every operating point.

Most teams get better results faster when they define a small set of “must-model” paths before tuning any parameters.

- Switch conduction loss based on current and on-state voltage behaviour

- Switching loss based on switching energy and switching frequency

- Diode reverse recovery loss or channel conduction during commutation

- Junction-to-case thermal impedance and its transient shape

- Case-to-heatsink and heatsink-to-ambient thermal resistance

Thermal paths are only as accurate as their boundary conditions. Ambient temperature, airflow assumptions, mounting torque, and interface material choice will move case temperatures enough to invalidate a careful switching model. Keep the first pass simple, then tighten the pieces that change a decision, such as heatsink sizing or current limit strategy.
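The series thermal path listed above can be checked with a few lines of arithmetic. This is a minimal sketch that assumes steady state and a single heat source on the heatsink; the function name and every numeric value are hypothetical placeholders, not datasheet figures.

```python
def steady_state_temperatures(p_loss_w, t_ambient_c, rth_jc, rth_cs, rth_sa):
    """Walk a series thermal path from ambient up to the junction.

    Resistances are in K/W, power in W, temperatures in degrees C.
    Assumes steady state and one heat source on the heatsink.
    """
    t_sink = t_ambient_c + p_loss_w * rth_sa   # heatsink-to-ambient
    t_case = t_sink + p_loss_w * rth_cs        # case-to-heatsink interface
    t_junction = t_case + p_loss_w * rth_jc    # junction-to-case
    return {"heatsink": t_sink, "case": t_case, "junction": t_junction}


# Hypothetical values chosen only to illustrate the calculation.
temps = steady_state_temperatures(
    p_loss_w=50.0, t_ambient_c=40.0, rth_jc=0.5, rth_cs=0.2, rth_sa=0.8
)
for node, value in temps.items():
    print(f"{node}: {value:.1f} C")
```

Reporting every node, not only the junction, makes it easier to compare the model with a thermocouple on the case or heatsink.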

Model conduction and switching losses across operating conditions

Conduction and switching losses should be modelled as functions of current, voltage, switching speed, and temperature, not as fixed constants. Conduction loss is usually modelled from an on-state voltage or on-resistance curve, while switching loss is best represented through switching energy values that scale with current and bus voltage. You’ll get the most useful results when your loss model responds to the same waveforms your control produces. That alignment turns a simulation from “average watts” into stress you can manage.

Switch loss modelling usually starts with datasheet energy curves, then adds the conditions your design changes: gate resistance, deadtime, and commutation path inductance. Those details matter because switching losses often rise when you make switching edges slower for EMI reasons, while conduction losses rise when you accept higher current ripple for smaller magnetics. A good model keeps those tradeoffs visible instead of hiding them inside a single efficiency number.
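A minimal sketch of an operating-point loss model might look like the following. It assumes a piecewise-linear on-state model (threshold voltage plus resistance) and a power-law scaling of datasheet switching energy from its reference current and voltage. The exponents `k_i` and `k_v`, the function names, and all numbers are illustrative assumptions that would need fitting to the device's own curves, and gate resistance, dead time, and stray inductance are not represented here.

```python
def conduction_loss_w(i_avg_a, i_rms_a, v_on0_v, r_on_ohm):
    """Average conduction loss from a threshold-plus-resistance on-state model.

    Set v_on0_v to 0 for a purely resistive channel.
    """
    return v_on0_v * i_avg_a + r_on_ohm * i_rms_a ** 2


def switching_loss_w(f_sw_hz, e_sw_ref_j, i_a, v_bus_v,
                     i_ref_a, v_ref_v, k_i=1.0, k_v=1.0):
    """Switching loss from reference energy (Eon + Eoff) scaled to the operating point.

    k_i and k_v are assumed scaling exponents; 1.0 means linear scaling,
    which is only a starting guess and should be checked against curves.
    """
    scale = (i_a / i_ref_a) ** k_i * (v_bus_v / v_ref_v) ** k_v
    return f_sw_hz * e_sw_ref_j * scale


# Hypothetical operating point and device data.
p_cond = conduction_loss_w(i_avg_a=20.0, i_rms_a=30.0, v_on0_v=1.0, r_on_ohm=0.02)
p_sw = switching_loss_w(f_sw_hz=10e3, e_sw_ref_j=5e-3, i_a=25.0, v_bus_v=300.0,
                        i_ref_a=50.0, v_ref_v=600.0)
print(f"conduction: {p_cond:.1f} W, switching: {p_sw:.1f} W")
```

Keeping the two terms separate preserves the tradeoff described above: a change that lowers one term can be seen raising the other.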

Granularity is a choice. Average-loss models work well for heatsink sizing and steady operating points, while cycle-resolved loss accumulation is better for pulsed loads and short thermal time constants. Pick the simplest approach that still shows the peak junction temperature and the margin to your derating limits.

Link loss models to RC thermal networks and heatsinks

Connect electrical losses to a thermal RC network so your model produces junction temperature, not just power dissipation. A multi-pole thermal impedance captures both fast junction heating and slow case and heatsink warming, which is essential for pulsed operation. Use a structure that matches your available data, then keep node definitions consistent across the model. Once watts flow into the network, temperature behaviour becomes predictable and testable.

Foster networks are convenient when you’re fitting published transient thermal impedance curves, while Cauer networks are easier to interpret physically when you need temperatures at internal layers. Both can work if you preserve energy and you don’t mix parameter sources. Mutual heating matters for multi-switch modules, so shared baseplate and heatsink nodes should be explicit when devices are physically close.
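For reference, a Foster network's transient thermal impedance is a sum of first-order terms, Zth(t) = Σ Rᵢ·(1 − exp(−t/τᵢ)). The sketch below evaluates that sum; the three `(R, tau)` pairs are hypothetical values, not fitted to any published curve.

```python
import math


def foster_zth(t_s, stages):
    """Transient thermal impedance (K/W) of a Foster network at time t_s.

    stages is a list of (R_k_per_w, tau_s) pairs, one per pole.
    """
    return sum(r * (1.0 - math.exp(-t_s / tau)) for r, tau in stages)


# Hypothetical three-pole junction-to-case fit: (R in K/W, tau in s).
FOSTER_STAGES = [(0.05, 0.002), (0.15, 0.05), (0.30, 1.0)]

for t in (0.001, 0.01, 0.1, 1.0, 10.0):
    print(f"t = {t:>6} s  Zth = {foster_zth(t, FOSTER_STAGES):.4f} K/W")
```

Multiplying Zth(t) by a power step gives the junction temperature rise above the reference node for that step. Foster stage values are fitting parameters, so individual stages should not be read as physical layers.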

SPS SOFTWARE users often treat the thermal network as a first-class part of the converter model, because transparent, editable RC blocks make it easier to trace which assumption set a temperature limit. That workflow also fits cleanly into MATLAB/Simulink pipelines where electrical and thermal subsystems need to stay synchronized.

| Model choice | What you can trust from results | Common failure mode when simplified too far |
| --- | --- | --- |
| Fixed loss constants at one operating point | Rough steady heatsink sizing near that point | Peak junction temperature is missed during transients |
| Lookup tables for loss versus current and voltage | Efficiency and heating across a speed-torque map | Wrong values appear when temperature changes strongly |
| Switching energy-based loss with waveform inputs | Loss sensitivity to control timing and commutation | Gate resistance and stray inductance effects are ignored |
| Single Rth and Cth thermal model | Slow thermal trends over many seconds or minutes | Short overload limits look safer than they are |
| Multi-pole thermal impedance with heatsink node | Peak and average junction temperatures under pulsed load | Bad boundary assumptions shift every temperature result |

Represent temperature-dependent parameters and thermal derating limits

Temperature behaviour becomes believable when electrical parameters change with temperature inside the same model. On-state voltage, on-resistance, diode drops, and reverse recovery behaviour all shift with junction temperature, which feeds back into losses and can create thermal runaway when the added loss outpaces what the thermal path can remove. Thermal derating should be represented as an explicit limit, not as a vague “safety factor.” Clear derating logic turns temperature outputs into actionable operating constraints.
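The feedback between loss and temperature can be illustrated with a fixed-point iteration. This sketch assumes a linear temperature coefficient on on-resistance referenced to 25°C, a single lumped junction-to-ambient resistance, and temperature-independent switching loss. Those simplifications, the function name, and the numbers are assumptions for illustration only.

```python
def solve_junction_temperature(i_rms_a, r_on_25_ohm, alpha_per_k, p_sw_w,
                               rth_ja_k_per_w, t_ambient_c,
                               t_abort_c=300.0, tol_k=1e-6, max_iter=500):
    """Iterate loss -> temperature -> loss until the junction temperature settles.

    Returns (t_junction_c, converged). converged is False when the estimate
    exceeds t_abort_c or fails to settle, which covers both genuine runaway
    and operating points that are simply far too hot.
    """
    t_j = t_ambient_c
    for _ in range(max_iter):
        r_on = r_on_25_ohm * (1.0 + alpha_per_k * (t_j - 25.0))
        p_total = i_rms_a ** 2 * r_on + p_sw_w
        t_next = t_ambient_c + rth_ja_k_per_w * p_total
        if t_next > t_abort_c:
            return t_next, False
        if abs(t_next - t_j) < tol_k:
            return t_next, True
        t_j = t_next
    return t_j, False


# Hypothetical device on an adequate and on an undersized thermal path.
t_ok, ok = solve_junction_temperature(30.0, 0.02, 0.005, 10.0, 1.0, 40.0)
t_bad, bad_ok = solve_junction_temperature(30.0, 0.02, 0.005, 10.0, 15.0, 40.0)
print(f"adequate cooling: {t_ok:.1f} C, converged={ok}")
print(f"undersized cooling: converged={bad_ok}")
```

In this linear model the loop gain is `rth_ja * i_rms**2 * r_on_25 * alpha`; the iteration settles only while that product stays below 1.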

Temperature dependence does not stop at semiconductors. Copper’s temperature coefficient of resistivity is about 0.0039 per °C, so busbars, windings, and shunts dissipate more as they warm, and that heat often sits close to the power module. A model that keeps copper losses fixed will understate enclosure heating and distort case temperature predictions.
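The copper coefficient quoted above translates directly into extra loss at temperature. A small sketch, assuming the resistance is referenced to 20°C (a common convention, but an assumption here) and using a hypothetical busbar resistance and current:

```python
ALPHA_CU_PER_K = 0.0039  # temperature coefficient quoted in the text


def copper_resistance_ohm(r_ref_ohm, t_c, t_ref_c=20.0):
    """Linear temperature scaling of a copper resistance from its reference value."""
    return r_ref_ohm * (1.0 + ALPHA_CU_PER_K * (t_c - t_ref_c))


# Hypothetical busbar: 100 micro-ohm at 20 C carrying 200 A RMS.
R_BUSBAR_20C = 100e-6
I_RMS = 200.0

p_cold = I_RMS ** 2 * copper_resistance_ohm(R_BUSBAR_20C, 20.0)
p_hot = I_RMS ** 2 * copper_resistance_ohm(R_BUSBAR_20C, 100.0)
print(f"loss at 20 C: {p_cold:.2f} W, at 100 C: {p_hot:.2f} W")
```

With these placeholder values the busbar loss grows by roughly 31% between 20°C and 100°C, which a fixed-resistance model would miss.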

Derating should reflect the device’s published limits and your packaging limits. Junction temperature caps, maximum case temperature, and maximum allowable current at a given heatsink temperature can all be represented as conditional clamps that your control or protection logic respects. That approach also makes it easier to discuss risk with non-specialists, because a limit is easier to interpret than a hidden margin inside a parameter.
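One way to express such a conditional clamp is a current limit that depends on heatsink temperature. The linear derating shape, the temperature thresholds, and the function names below are illustrative assumptions; a real limit should follow the device's published derating curve.

```python
def derated_current_limit_a(t_sink_c, i_rated_a, t_derate_start_c, t_trip_c):
    """Allowed current magnitude versus heatsink temperature.

    Full rating below t_derate_start_c, zero at or above t_trip_c,
    and a straight line in between (assumed shape).
    """
    if t_sink_c <= t_derate_start_c:
        return i_rated_a
    if t_sink_c >= t_trip_c:
        return 0.0
    return i_rated_a * (t_trip_c - t_sink_c) / (t_trip_c - t_derate_start_c)


def clamp_current_command(i_cmd_a, t_sink_c, i_rated_a, t_derate_start_c, t_trip_c):
    """Clamp a signed current command to the temperature-dependent limit."""
    limit = derated_current_limit_a(t_sink_c, i_rated_a, t_derate_start_c, t_trip_c)
    return max(-limit, min(limit, i_cmd_a))


# Hypothetical limits: 100 A rating, derating from 80 C, trip at 100 C.
for t_sink in (60.0, 90.0, 110.0):
    allowed = clamp_current_command(120.0, t_sink, 100.0, 80.0, 100.0)
    print(f"heatsink {t_sink:.0f} C -> allowed command {allowed:.1f} A")
```

Writing the limit as its own function keeps it visible and reviewable, which is the point made above about explicit limits versus hidden margins.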

Predict transient junction temperature and manage device stress margins

“Transient junction temperature is the number that ties switching loss modelling to device stress.”

Peak junction temperature, temperature swing, and the rate of temperature change all contribute to wear mechanisms in bonds, solder, and packaging interfaces. A model that only reports average temperature cannot tell you if a short overload is safe. Treat thermal time constants as part of the design, not as a detail for later validation.

A concrete way to apply this is a motor drive that sees short torque bursts: a step from moderate load to near-rated current for a few seconds, repeated many times per hour, will create temperature swings that look small at the heatsink but large at the junction. The electrical model provides current ripple and switching frequency, the loss model converts those into watts per device, and the RC thermal network shows peak junction temperature during each burst. That output lets you set an overload timer and current limit that protects the device without giving up normal performance. It also shows when a “safe” average loss still causes damaging thermal cycling.
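The burst scenario above can be sketched by driving a Foster network with a repeating power profile. This example assumes the loss per device is already known as two power levels, holds the reference node (case or heatsink) at a fixed temperature, and uses hypothetical stage values and timing. Each stage is updated with the exact first-order step response, so the step size does not have to resolve the fastest time constant.

```python
import math


def simulate_foster(power_w, dt_s, stages, t_ref_c):
    """Junction temperature per sample for a piecewise-constant power trace.

    stages: list of (R_k_per_w, tau_s). t_ref_c: fixed reference node
    temperature (an assumption; a real heatsink would also warm up).
    """
    decay = [math.exp(-dt_s / tau) for _, tau in stages]
    state = [0.0] * len(stages)
    t_junction = []
    for p in power_w:
        for k, (r, _) in enumerate(stages):
            state[k] = state[k] * decay[k] + p * r * (1.0 - decay[k])
        t_junction.append(t_ref_c + sum(state))
    return t_junction


def burst_profile(p_base_w, p_burst_w, burst_s, period_s, n_periods, dt_s):
    """Repeating profile: a burst at the start of each period, base load after."""
    n_period = round(period_s / dt_s)
    n_burst = round(burst_s / dt_s)
    one_period = [p_burst_w] * n_burst + [p_base_w] * (n_period - n_burst)
    return one_period * n_periods


# Hypothetical values: 3 s bursts of 80 W every 30 s on a 20 W base load.
STAGES = [(0.05, 0.002), (0.15, 0.05), (0.30, 1.0)]
DT = 0.01
T_REF = 80.0
profile = burst_profile(20.0, 80.0, 3.0, 30.0, 10, DT)
tj = simulate_foster(profile, DT, STAGES, T_REF)

last_period = tj[-round(30.0 / DT):]
peak, trough = max(last_period), min(last_period)
avg_power = sum(profile) / len(profile)
tj_from_average = T_REF + avg_power * sum(r for r, _ in STAGES)
print(f"peak {peak:.1f} C, swing {peak - trough:.1f} K, "
      f"average-loss estimate {tj_from_average:.1f} C")
```

Comparing `peak` with `tj_from_average` shows how far an average-loss estimate can sit below the temperature the junction actually reaches during a burst.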

Stress margin should be expressed in terms you can track. Keep a clear distance to maximum junction temperature, but also watch repetitive temperature swing and voltage and current overshoot during commutation. Small changes to deadtime, gate resistance, or snubber design can cut switching losses while increasing voltage stress, so the margin you manage needs to include both thermal and electrical limits.

Validate models and avoid common thermal switching modelling errors

Validation should focus on removing the most common mismatches between simulated and measured temperature behaviour. Loss models must use the same reference conditions as the curves they came from, and thermal models must match how the device is mounted and cooled. Treat every parameter as “guilty until checked” when results look too optimistic. The goal is not a perfect model, but a model that fails in the same direction as the hardware.
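A simple way to check the direction of model error is to compare simulated and measured temperatures at the same operating points and flag where the model is optimistic. The function name, the tolerance, and the temperature lists below are placeholders for illustration, not measurements.

```python
def validation_report(simulated_c, measured_c, tolerance_k=3.0):
    """Summarise simulated-minus-measured temperature error.

    A negative error means the model predicts cooler than the hardware,
    which is the optimistic (unsafe) direction.
    """
    if len(simulated_c) != len(measured_c) or not simulated_c:
        raise ValueError("need equal-length, non-empty temperature lists")
    errors = [s - m for s, m in zip(simulated_c, measured_c)]
    return {
        "mean_error_k": sum(errors) / len(errors),
        "worst_underprediction_k": min(errors),
        "optimistic_points": [i for i, e in enumerate(errors) if e < -tolerance_k],
    }


# Placeholder numbers only: three operating points, light to heavy load.
report = validation_report(simulated_c=[70.0, 85.0, 98.0],
                           measured_c=[72.0, 84.0, 104.0])
print(report)
```

Operating points listed under `optimistic_points` are the ones to investigate first, since that is where the model understates what the hardware experiences.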

Several errors show up again and again. Switching energy data is often applied outside its test voltage or gate drive, then scaled linearly when the physics is not linear. Thermal impedance curves are sometimes converted incorrectly between junction-to-case and junction-to-ambient, which bakes in the wrong boundary assumption. Temperature-dependent loss feedback is frequently omitted, which makes thermal derating look less necessary than it is.

Disciplined modelling means choosing a consistent loss basis, wiring it into a thermal network that matches packaging, and validating the full chain against temperatures you can measure. SPS SOFTWARE fits that discipline well when you need transparent, editable models that you can inspect, tune, and teach from, because clarity keeps teams aligned on what the numbers mean. Results that hold up over time come from tight assumptions and careful validation, not from extra complexity.