Key Takeaways

- Pick switching detail based on the decision you need to make, since ripple, peaks, and harmonics only become trustworthy when the model actually represents switching behaviour.

- Choose timestep from the fastest behaviour you will interpret, then prove it through convergence checks so peak stress, ripple, and losses do not depend on step size.

- Control runtime through targeted detail and careful output sampling, since coarse storage or misaligned switching events can hide aliasing and create false low-frequency effects.

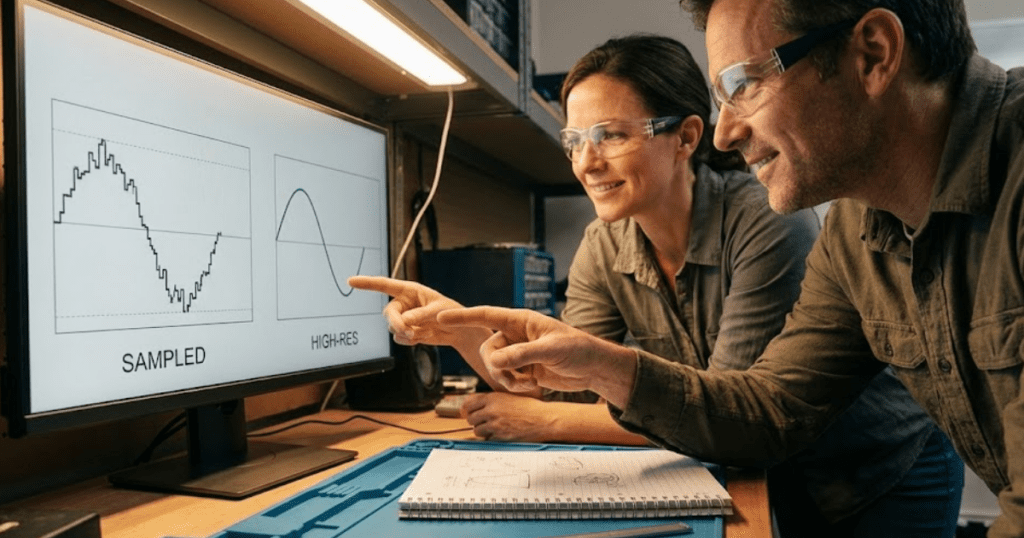

Switching models create the waveforms you see on a bench, but they also create the hardest numerical problem you’ll give a simulator: sharp edges, wideband harmonics, and stiff energy storage. Sampling theory sets the tone here, since representing a signal without aliasing requires a sample rate above 2x the highest frequency of interest. A timestep choice is simply that sampling choice expressed in seconds.

Averaged models and switching models are not competing “accuracy levels.” They are different instruments. The most reliable results come from pairing the model detail to your study question, then selecting a timestep that resolves the fastest behaviour you care about, not the fastest behaviour that exists anywhere in the schematic.

“Your converter simulation will only be as trustworthy as your switching detail and timestep.”

Choose switching or averaged converter models based on study goals

Use a switching model when you need ripple, peaks, harmonic content, device stress, or detailed interaction with protection and parasitics. Use an averaged model when you need control behaviour, steady-state operating points, slow transients, or system studies where switching ripple will only obscure the answer. The right choice is the one that matches the decision you need to make.

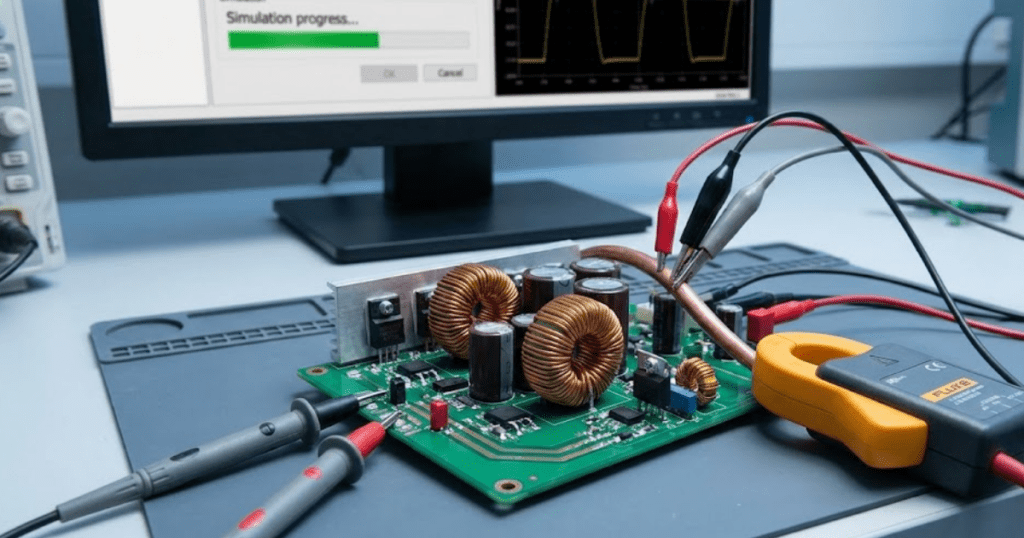

Switching models represent the discrete on-off states of semiconductor devices, so they naturally produce PWM ripple, diode recovery effects, and high dv dt and di dt edges. That fidelity matters for capacitor ripple current, transformer flux swing, filter damping, and controller sampling effects, because these depend on instantaneous waveforms and not just their averages. It also matters any time you need peak values rather than rms, since peaks often set thermal and reliability limits.

Averaged models replace the switch network with a controlled source or equivalent duty-cycle dependent relation. That removes the carrier frequency content, which usually makes the simulation stable at much larger timesteps and lets you study longer windows. If your goal is grid level interaction, droop response, start-up sequencing, or tuning a loop bandwidth, an averaged model will answer faster with fewer numerical traps.

Identify what switching detail changes in key waveforms and losses

Switching detail changes what your model treats as “real” in the electrical sense: ripple, harmonics, and peak stresses become explicit signals instead of being implied. That directly affects predicted conduction loss, switching loss, ripple heating in magnetics and capacitors, and any control logic that depends on sampled currents and voltages. Averaging removes the carrier and reshapes these outcomes.

Ripple is not cosmetic. A small change in ripple current can move a capacitor from acceptable temperature rise to chronic overheating, and the same ripple can excite resonances in filters and cables that never show up in an averaged model. Harmonics also matter outside of power quality reporting, since compliance work often spans far above the fundamental and even above the switching frequency through its harmonics.

One useful reference point is conducted emissions practice, since disturbance limits are assessed from 150 kHz to 30 MHz in CISPR 11. A switching model will generate content that reaches into that range if your edges are fast enough or your parasitics are represented, and your timestep choice will decide which part of that spectrum is credible. If you smooth the switching detail too aggressively, you will still get a “clean” waveform, but it will be clean for the wrong reason.

Set simulation timestep from switching frequency and control bandwidth

A practical timestep starts from the fastest behaviour you need to resolve, then adds margin so numerical integration does not smear edges or shift phases. For switching models, that behaviour is usually the PWM carrier period, dead time, and any resonant ringing you intend to keep. For averaged models, the fastest behaviour is usually the control bandwidth and the dominant plant poles.

Consider a 20 kHz PWM converter where you care about inductor ripple current and switch peak current during transients. The switching period is 50 µs, so a timestep around 0.5 µs gives 100 points per period and usually captures ripple shape without turning every edge into a stair-step. If your model includes 200 ns dead time or a few MHz ringing that you want to see, that timestep is no longer adequate, and the timestep must shrink until those features stop shifting as you refine it.

Control adds a second constraint. A digital controller with a kHz scale bandwidth can look stable with a coarse timestep and still be wrong in phase margin once sampling and modulation delays are represented. The safest workflow is to tie timestep to the highest frequency you will interpret in plots or metrics, then verify convergence by halving the timestep and checking if key outcomes, such as ripple amplitude and peak device current, settle to a consistent value.

| What you need from the simulation | Model detail that supports that need | Timestep checkpoint that keeps results believable |

| Loop tuning and slow transients over seconds | Averaged converter with explicit control and limits | Timestep resolves control bandwidth and dominant plant dynamics, not the PWM carrier |

| Ripple current, peak stress, and harmonic structure | Switching model with PWM and device states | Timestep provides many points per switching period so ripple and peaks stop shifting when refined |

| Protection timing and threshold crossings | Switching model if thresholds depend on instantaneous ripple | Timestep is small enough that threshold events happen at consistent times across refinements |

| Filter resonance and cable interaction | Switching or averaged depending on resonance frequency of interest | Timestep resolves the resonance frequency with comfortable phase accuracy, not just amplitude |

| Energy and loss accounting you will use for thermal checks | Switching model if losses depend on ripple and edge timing | Timestep is tight enough that integrated loss per cycle converges and does not drift with step size |

Use numerical stability checks to confirm timestep is small enough

A timestep is “small enough” when your outcomes converge and the solver stays stable without artificial damping. Convergence means the values you care about change negligibly when you halve the timestep, not that the waveforms look smooth. Stability means energy does not grow without a physical reason and oscillations match circuit physics rather than numerical artefacts.

Start with two quick checks: run the same case with a smaller timestep and compare a small set of metrics, then inspect for non-physical behaviour such as negative losses, oscillations that appear only at one step size, or ringing that shifts frequency as you refine. Peaks are often the first thing to move when the timestep is too large, since they can be clipped or time-shifted without obvious warning. When you see instability, treat it as a modelling signal, since parasitic inductance, ideal switches, and stiff control actions can make the system numerically harsh even if the topology is fine.

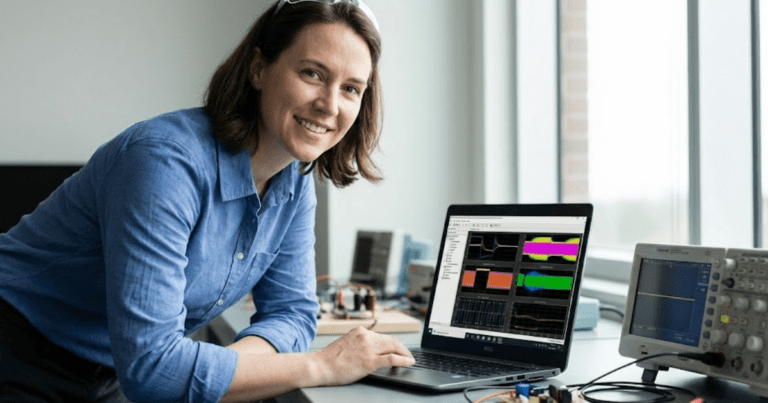

Tooling helps when it stays transparent. SPS SOFTWARE supports open, editable component models, so you can inspect the equations, identify stiff elements, and decide if you should add practical damping, refine parasitics, or reduce timestep around the parts of the network that create the fastest dynamics. That workflow tends to beat trial-and-error because you learn which physics created the numerical problem.

Balance runtime and accuracy with local refinement and event handling

Runtime control comes from putting resolution where it matters and relaxing it where it does not. Switching transitions and high-frequency resonances need small timesteps, but many parts of a power system model evolve slowly. A balanced setup focuses fine resolution around converters and sensitive nodes, then uses coarser resolution elsewhere when the simulator supports it.

Local refinement is most effective when it aligns with a measurement need. If you only care about grid voltage distortion at the point of common coupling, you can keep detailed switching inside the converter and use reduced detail or aggregation on distant feeders. If you care about device stress, you keep the detail near the devices and avoid spending compute time on far-field dynamics that will not influence peaks within a switching period.

Event handling matters because switching is discontinuous. If your simulator models gating events explicitly, you want those events to land on consistent times, or your duty cycle becomes timestep dependent. If your simulator uses adaptive stepping, you still need guardrails so the step does not grow large through an interval where ripple is being interpreted. The goal is not a “fast run,” it is a run where each second of compute produces information you can defend.

“The most defensible practice is to write down what you need to measure, then prove your timestep can measure it.”

Avoid common timestep mistakes that hide ripple and aliasing

Most bad converter results come from a few repeatable missteps that make plots look reasonable while key metrics drift. Aliasing is the most dangerous, since it turns high-frequency content into low-frequency artefacts that look like control issues or resonance. A disciplined setup treats timestep, output sampling, and switching logic as one system.

- Choosing a timestep that gives too few points per switching period, then trusting ripple amplitude and peak current

- Saving waveforms at a coarse output interval that aliases switching ripple into fake low-frequency oscillations

- Using ideal switches with no parasitics, then compensating with an overly large timestep that acts like hidden damping

- Allowing switching events to fall between timesteps so effective duty cycle shifts with step size

- Validating only average values, then missing that peaks and losses have not converged

That proof can be simple, such as halving the timestep until peak values, ripple, and integrated loss stop moving in a meaningful way. Once you do that a few times, you’ll start spotting when a model is too detailed for the study goal, or too averaged to support a hardware-facing decision.

SPS SOFTWARE fits best when you treat modelling as an engineering discipline and not a black box run. Transparent models make it easier to explain why you picked a switching model, why you selected a timestep, and why the results will hold up when someone asks what changed when the step size changed. That habit is what turns converter simulation from “looks right” into “is right enough to act on.”