Key Takeaways

- Digital testing confidence comes from validated models that set expected ranges, limits, and pass criteria before any hardware stress.

- Pre-test insights are most useful when they prioritise operating corners and the minimum measurements needed to prove or disprove key assumptions.

- Reliable hardware testing improves when teams treat model mismatches as structured feedback, then update parameters, limits, and test sequences with discipline.

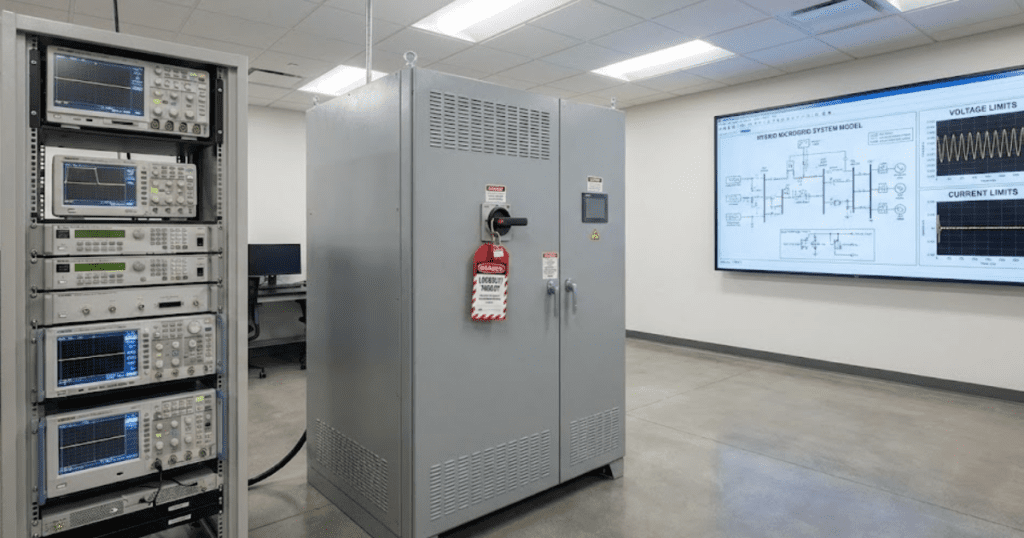

Hardware testing in power systems and power electronics fails when you treat first power-up as a discovery exercise. A model that matches your system’s physics turns testing into confirmation, because you arrive with expected waveforms, limits, and pass criteria instead of guesses. That matters because a single bad test can damage equipment, delay schedules, and put people at risk. Power interruptions alone cost the U.S. economy about $44 billion per year, and poor validation upstream is one way those costs show up downstream.

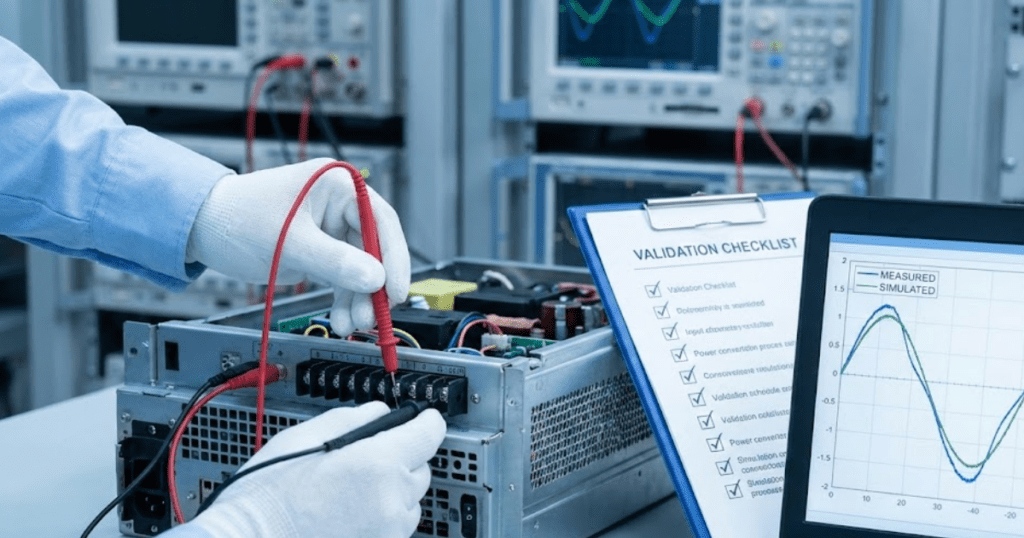

Digital testing confidence comes from disciplined model validation, not from running more simulations. Accurate models help predict behaviour because they capture the right structure, parameters, and control logic, then prove those assumptions against what you can measure. When you use modelling to get pre-test insights, you decide what to measure, what to limit, and what to try first, before any risky switching or fault work starts. The result is fewer surprises, cleaner test data, and faster root-cause work when results differ from expectations.

“Validated digital models make hardware tests more predictable and safer.”

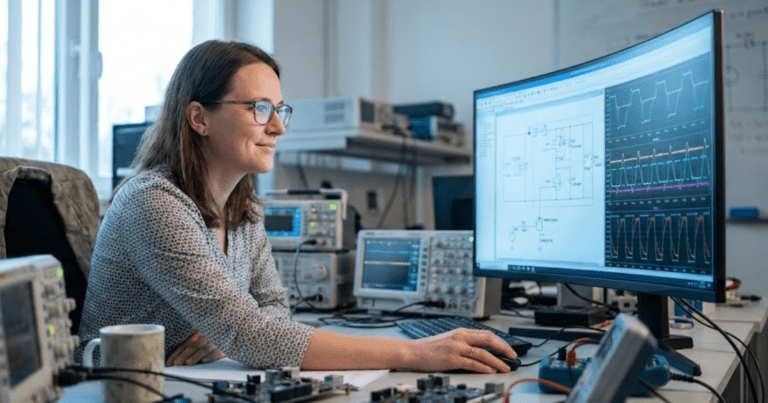

Digital models set test expectations before hardware power-up

A digital model supports hardware testing when it defines expected signals and limits before you apply power. You use it to predict steady-state values, transient ranges, and protection thresholds. That gives you a baseline for judging anomalies during commissioning. It also reduces risk because you can pre-plan current, voltage, and thermal margins.

A practical case is a lab team preparing to commission a 250 kW grid-forming inverter feeding a small microgrid bus. The first simulation run uses the intended filter values, controller gains, and a range of grid impedances that could exist at the point of connection. You walk into the lab knowing the expected inrush, the settling time after a load step, and the waveform quality at the terminals. If the measured current spikes exceed the model’s upper bound, you stop and investigate the setup rather than pushing ahead.

Test expectations work best when they’re written down as checkable statements, not as plots you glance at once. You’ll also get more value if you treat the model as a contract between design, controls, and test teams, with a clear list of assumptions that can be challenged. That mindset keeps the model from becoming a “nice to have” file that nobody trusts under pressure. It also forces a system behaviour study to stay tied to measurements you can actually take in the lab.

| Model output you should have | Checkpoint you set before first power-up | Why it makes testing more reliable |
| --- | --- | --- |
| Expected steady-state voltages and currents at key nodes | Instrument ranges and alarm limits match predicted operating bands | You avoid saturating sensors and you spot abnormal conditions early |
| Step response to load changes and setpoint changes | Pass criteria include settling time and overshoot limits | You separate tuning issues from wiring and measurement errors |
| Protection pickup levels and trip timing assumptions | Trip thresholds are reviewed with the model as a reference | You reduce nuisance trips and avoid unsafe test escalation |
| Loss and thermal estimates under test profiles | Cooling checks and run durations align to predicted heating | You prevent damage during long sweeps or repeated transients |
| Sensitivity to uncertain parameters such as impedance and delay | Worst-case corners are prioritised in the test plan | You find weak points early instead of through late, expensive retests |
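One way to write expectations down as checkable statements is to store each predicted band as data and evaluate measurements against it. The sketch below is a minimal illustration only: the `Expectation` class, the signal names, and every numeric band are hypothetical placeholders, and real limits would come from the validated model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Expectation:
    """One checkable statement: a signal must stay inside [low, high]."""
    signal: str
    low: float
    high: float
    unit: str

    def check(self, measured: float) -> bool:
        return self.low <= measured <= self.high


def evaluate(expectations, measurements):
    """Return (signal, measured, passed) for every expectation.

    A signal missing from the measurements counts as a failure, because an
    unmeasured expectation cannot be confirmed.
    """
    results = []
    for exp in expectations:
        value = measurements.get(exp.signal)
        passed = value is not None and exp.check(value)
        results.append((exp.signal, value, passed))
    return results


# Hypothetical bands for illustration; not taken from any real inverter.
EXPECTATIONS = [
    Expectation("bus_voltage_rms", 470.0, 490.0, "V"),
    Expectation("inrush_current_peak", 0.0, 450.0, "A"),
    Expectation("load_step_settling_time", 0.0, 0.050, "s"),
]

measured = {
    "bus_voltage_rms": 481.2,
    "inrush_current_peak": 512.0,  # above the model's upper bound
    "load_step_settling_time": 0.032,
}

for signal, value, passed in evaluate(EXPECTATIONS, measured):
    print(f"{signal}: {value} -> {'PASS' if passed else 'STOP AND INVESTIGATE'}")
```

Keeping the bands in one list makes the assumptions easy for design, controls, and test teams to review and challenge before power-up.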

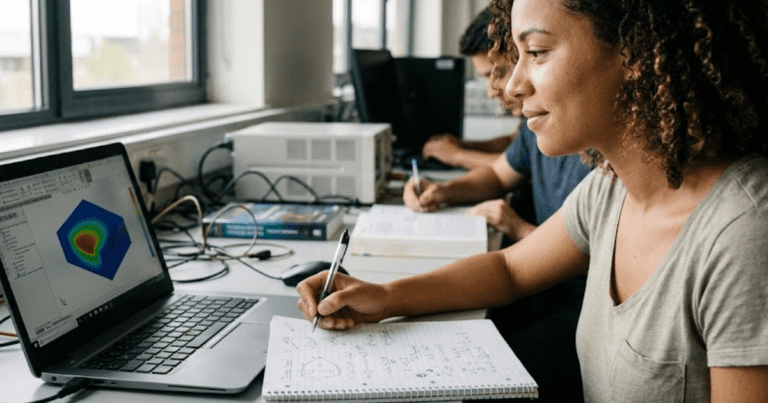

Pre-test studies find operating corners, limits, and needed measurements

Pre-test studies give you pre-test insights that shape what you test first and what you postpone. They identify operating corners where stability, protection, or thermal limits tighten. They also tell you which measurements will settle the biggest uncertainties. You gain confidence because your first hardware runs target the highest information value with the lowest risk.

That inverter commissioning case becomes manageable once the model sweeps the parameter ranges that you can’t know exactly on day one. You’ll see which combinations of grid impedance and controller gains create oscillations, and which ones stay well damped. You also learn where measurement quality matters, such as current sensor bandwidth during switching transients or voltage probe placement during fault tests. When the model flags a narrow stability margin, you plan smaller steps and shorter run times until the behaviour matches expectations.

- Grid or load impedance corners that push damping and stability limits

- Worst-case DC-link voltage and ripple under expected transients

- Peak phase current and di/dt that set safe ramp rates

- Protection coordination limits that affect trip timing and thresholds

- Signals that must be logged at high resolution for root-cause work

These studies will only help if you treat the results as test inputs, not as design trivia. If a sweep shows that a 10% change in delay noticeably erodes the stability margin, you will prioritise validating timing paths and sampling assumptions. If a sweep shows that impedance uncertainty dominates, you will plan a quick impedance characterisation step before aggressive testing. The point is simple: pre-test work earns its keep when it reduces the number of “unknown unknowns” you carry into the lab.
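A parameter sweep of this kind can be sketched with a deliberately simplified stand-in for the inverter model. The assumption here, which is not from the article, is a proportional current controller with gain `kp` acting on a single total inductance `l_total` with a lumped loop delay `t_delay`, so the loop gain is `kp * exp(-s * t_delay) / (s * l_total)`. All values and the 45 degree threshold are illustrative.

```python
import math
from itertools import product


def phase_margin_deg(kp, l_total, t_delay):
    """Phase margin of the loop gain kp * exp(-s*Td) / (s*L).

    The magnitude crosses unity at wc = kp / L, where the integrator
    contributes -90 degrees and the delay contributes -wc * Td radians.
    """
    wc = kp / l_total
    return 90.0 - math.degrees(wc * t_delay)


def sweep_corners(kp_values, l_values, td_values, min_margin_deg=45.0):
    """Return every (kp, L, Td, margin) corner below the required margin,
    sorted so the tightest corner comes first."""
    flagged = []
    for kp, l_total, td in product(kp_values, l_values, td_values):
        pm = phase_margin_deg(kp, l_total, td)
        if pm < min_margin_deg:
            flagged.append((kp, l_total, td, pm))
    return sorted(flagged, key=lambda row: row[3])


# Hypothetical ranges: gain in ohms, inductance in henries, delay in seconds.
corners = sweep_corners(
    kp_values=[2.0, 4.0],
    l_values=[0.5e-3, 1.0e-3, 2.0e-3],
    td_values=[100e-6, 150e-6],
)
for kp, l_total, td, pm in corners:
    print(f"kp={kp}, L={l_total}, Td={td}: phase margin {pm:.1f} deg")
```

In this toy model the flagged corners combine the highest gain with the lowest inductance, which is the kind of result that would move delay and impedance checks to the front of the test plan.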

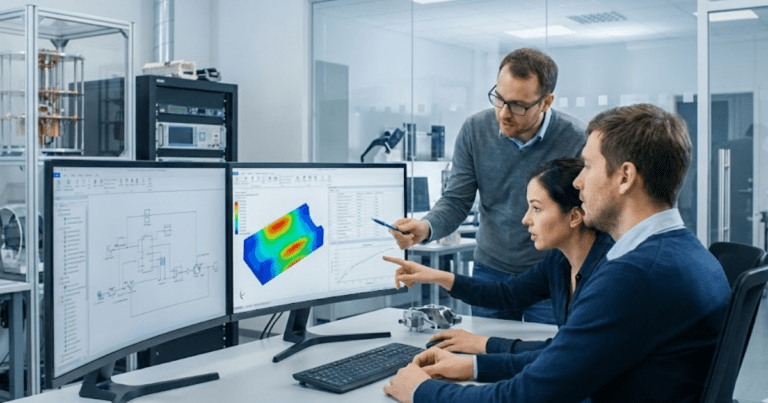

Model validation methods that build confidence in digital test results

Model validation builds digital testing confidence when you prove structure and parameters against measurements you can trust. You validate in layers, starting with component checks and moving to subsystem behaviour. Each check tightens uncertainty and reduces the chance of matching data for the wrong reason. The goal is a model that fails loudly when assumptions are wrong.

Inadequate software testing has been estimated to cost the U.S. economy $59.5 billion per year, and control-heavy power hardware suffers from the same pattern of late, expensive discovery. Your validation plan should include basic conservation checks, timing checks, and sensitivity checks before you compare complex waveforms. If the model predicts energy creation or loss that violates physics, it’s telling you something is structurally wrong. If small parameter changes cause large output swings, you learn where measurement effort will pay back.
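A basic conservation check can be sketched as a power-balance residual over a simulated run: input power minus output power minus losses should equal the rate of change of stored energy. The function name, the sample data, and the way the residual is normalised are assumptions for illustration.

```python
def energy_balance_residual(p_in, p_out, p_loss, stored_energy, dt):
    """Worst-case relative power-balance residual over a simulated run.

    At each step, p_in - p_out - p_loss should equal d(stored_energy)/dt.
    All arguments except dt are equal-length lists sampled every dt seconds
    (powers in W, stored energy in J).
    """
    worst = 0.0
    for k in range(1, len(p_in)):
        d_energy = (stored_energy[k] - stored_energy[k - 1]) / dt
        # Average the net power over the step to match the finite difference.
        net = 0.5 * ((p_in[k] - p_out[k] - p_loss[k])
                     + (p_in[k - 1] - p_out[k - 1] - p_loss[k - 1]))
        scale = max(abs(p_in[k]), 1.0)  # avoid dividing by zero at no load
        worst = max(worst, abs(net - d_energy) / scale)
    return worst


# Hypothetical run: 1 kW in, 950 W out, 40 W of losses, 10 W charging storage.
dt = 1e-3
p_in = [1000.0, 1000.0, 1000.0]
p_out = [950.0, 950.0, 950.0]
stored = [0.0, 0.010, 0.020]

good = energy_balance_residual(p_in, p_out, [40.0] * 3, stored, dt)
bad = energy_balance_residual(p_in, p_out, [0.0] * 3, stored, dt)  # losses omitted
print(f"consistent model: {good:.2e}, losses missing: {bad:.2e}")
```

A residual well above the solver tolerance points to a structural problem, such as a missing loss term, before any waveform comparison starts.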

Transparent models help here because you can inspect equations and assumptions instead of treating blocks as opaque. SPS SOFTWARE supports physics-based modelling with editable component detail, which matters during validation because you can trace results to parameters you can measure and defend. You’ll still need to manage fidelity choices, since switching detail, numerical step size, and controller timing can all shift outcomes. Validation is not about making plots line up once; it’s about showing the model stays honest across the operating band you plan to test.

Accurate models predict system behaviour under faults and control changes

Accurate models predict behaviour under faults and control changes because they capture interactions, not just steady-state points. Faults expose coupling among control loops, protection logic, and network impedance. Control changes expose timing, saturation, and limit handling. When those mechanisms are represented correctly, the model becomes a reliable way to anticipate failure modes before hardware sees them.

The inverter commissioning scenario is a good stress test for model fidelity because the “interesting” behaviour often happens during abnormal events. A voltage sag can push current limits and trigger control mode changes within a few cycles. A close-in fault can drive protection trips, then create a restart sequence with inrush and synchronization steps. If the model includes realistic limits, delays, and trip logic, you can predict which event sequences are safe to attempt and which ones require additional interlocks.

Prediction does not mean perfect matching of every oscillation. It means the model gets the dominant mechanism right and predicts the direction and magnitude of change when you vary a condition. You’ll also learn which parts of the design are robust and which rely on tuned settings that drift with hardware tolerances. That clarity supports better test sequencing, because you can keep early runs inside well-understood regions and expand outward with control over risk.

Turn model outputs into test sequences, safety checks, and criteria

Model outputs become useful in the lab when they translate into a test sequence with clear stop rules. You map predicted ranges to instrument settings, interlocks, and pass criteria. You also use the model to order tests from low-risk, high-information runs to higher-stress cases. This turns testing into a controlled comparison between predicted and measured behaviour.

In the inverter case, the sequence typically starts with low-voltage functional checks, then low-power synchronization, then incremental load steps, and only then controlled disturbance tests. The model tells you what “normal” looks like at each stage, so you can gate progress on clear criteria such as waveform distortion limits, current peaks, or temperature rise over a fixed duration. If the measured response differs, you pause at the smallest test that still reproduces the mismatch, because that isolates causes faster than jumping to a harsher run.
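The gating logic described above can be sketched as an ordered list of stages, each with limits that must hold before the next stage runs. Stage names, signal names, and limits below are hypothetical, and `measure` stands in for whatever data acquisition step the lab actually uses.

```python
def run_sequence(stages, measure):
    """Run stages in order and stop at the first one that violates a limit.

    stages:  list of (name, {signal: (low, high)}), ordered from low to high stress
    measure: callable taking a stage name and returning {signal: value}
    Returns (completed_stage_names, failure), where failure is None or
    (stage_name, signal, value, (low, high)).
    """
    completed = []
    for name, limits in stages:
        data = measure(name)
        for signal, (low, high) in limits.items():
            value = data.get(signal)
            # A missing signal is treated as a failed gate.
            if value is None or not (low <= value <= high):
                return completed, (name, signal, value, (low, high))
        completed.append(name)
    return completed, None


# Hypothetical limits that would be derived from model predictions.
STAGES = [
    ("low_voltage_functional", {"thd_percent": (0.0, 5.0)}),
    ("low_power_sync", {"thd_percent": (0.0, 5.0), "peak_current_a": (0.0, 60.0)}),
    ("load_step", {"peak_current_a": (0.0, 150.0), "temp_rise_k": (0.0, 15.0)}),
    ("controlled_disturbance", {"peak_current_a": (0.0, 400.0)}),
]

# Stand-in for lab measurements; the load step exceeds its current limit.
FAKE_DATA = {
    "low_voltage_functional": {"thd_percent": 2.1},
    "low_power_sync": {"thd_percent": 3.0, "peak_current_a": 42.0},
    "load_step": {"peak_current_a": 171.0, "temp_rise_k": 9.0},
    "controlled_disturbance": {"peak_current_a": 380.0},
}

completed, failure = run_sequence(STAGES, lambda name: FAKE_DATA[name])
print("completed:", completed)
print("stopped at:", failure)
```

Because the sequence returns at the first violated limit, the harsher disturbance stage never runs while a mismatch at a smaller test is still unexplained.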

This is also where you decide what to log and at what resolution. A model that predicts the key state variables helps you avoid collecting a pile of signals that won’t answer the hard questions later. You’ll also decide which parameters you will identify from early data, then push back into the model to tighten later predictions. That loop is the practical bridge between modelling and safe hardware execution.
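Feeding early data back into the model can be as simple as a least-squares fit of one parameter, followed by a check against the tolerance the model originally assumed. The example below fits a series resistance from low-power voltage and current samples; the parameter name, the data, and the 10% tolerance are hypothetical.

```python
def fit_through_origin(x, y):
    """Least-squares slope for y = slope * x (no intercept)."""
    sxx = sum(xi * xi for xi in x)
    if sxx == 0.0:
        raise ValueError("x must contain at least one non-zero sample")
    return sum(xi * yi for xi, yi in zip(x, y)) / sxx


def update_parameter(params, name, identified, tolerance):
    """Return a copy of params with `name` replaced by the identified value,
    plus a flag telling whether it stayed within the assumed tolerance."""
    nominal = params[name]
    within = abs(identified - nominal) <= tolerance * abs(nominal)
    updated = dict(params)
    updated[name] = identified
    return updated, within


# Hypothetical low-power data: voltage drop (V) across a filter branch vs current (A).
currents = [10.0, 20.0, 30.0, 40.0]
voltages = [0.52, 1.05, 1.55, 2.09]

model_params = {"filter_resistance_ohm": 0.045}
r_fit = fit_through_origin(currents, voltages)
model_params_v2, within = update_parameter(
    model_params, "filter_resistance_ohm", r_fit, tolerance=0.10
)
print(f"identified R = {r_fit:.4f} ohm, within assumed tolerance: {within}")
```

When the identified value falls outside the assumed tolerance, as it does with this made-up data, that is a prompt to rerun the affected predictions before moving to higher-stress tests.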

Common modelling mistakes that reduce trust during hardware testing

“Hardware testing becomes more reliable once the model earns its role as the reference, and once teams agree that mismatches are learning opportunities, not reasons to abandon the process.”

Trust breaks when a model hides assumptions, skips limits, or treats unknown parameters as fixed facts. It also breaks when the model is too detailed to validate, so nobody can explain why it matches. A reliable workflow keeps the model simple enough to defend and detailed enough to predict the test outcomes you care about. That balance is a management choice as much as a technical one.

The most common failure mode is validating against a single “good looking” waveform while ignoring sensitivity and uncertainty. Another is leaving out saturations, dead time, sampling delay, or protection latch behaviour, then acting surprised when hardware reacts sharply. Poor alignment between measurement points and model variables is also a quiet problem, because you end up comparing signals that are not truly equivalent. When those issues stack up, engineers stop using the model for pre-test insights and revert to guesswork under schedule pressure.

Disciplined execution fixes this, and it’s more important than any one tool. You’ll get better outcomes when you treat validation as a checklist of falsifiable claims, keep assumptions visible, and update parameters based on early measurements. SPS SOFTWARE fits well into that style because transparent, physics-based models are easier to challenge and refine when the lab data disagrees.