Key Takeaways

- Reproducible electromagnetic transient (EMT) research starts when you treat the simulation run as a complete, rerunnable record that includes the model, numerics, inputs, and tool versions.

- Physics-based model transparency matters as much as results, because readers need to inspect equations, assumptions, and control logic to trust that the same study is being rerun.

- Most repeatability failures come from small, undocumented choices such as time step, event timing, initialization, and post-processing, so disciplined run manifests and portable study packaging should be standard practice.

Reproducible simulation research fails most often when authors treat a simulator run as a screenshot instead of a record you can rerun. A large survey found 70% of researchers had tried and failed to reproduce another scientist’s experiments. EMT research carries extra risk because small numerical and modelling choices can shift waveforms, trip logic, and protection outcomes.

“You can make EMT power system results repeatable when you publish the model, the numerics, and the run conditions as a single package.”

The practical stance is simple: reproducibility is a design requirement for your study, not a clean-up task after you’ve written results. Physics-based modelling makes that achievable because equations, parameters, and assumptions can be inspected and challenged. Your job is to keep every hidden decision visible, from solver tolerances to initial conditions, so a reviewer or lab partner can rerun the study and reach the same technical conclusions.

Define reproducible simulation research in EMT power system studies

Reproducible EMT research means an independent reader can run your simulation model and obtain the same key plots and metrics within a stated tolerance. That requires the full model, all inputs, the numerical settings used to generate results, the tool versions, and any external scripts. It is a stricter standard than showing that a rerun behaves similarly.

For EMT work, “same result” should be defined in engineering terms, not aesthetics. If your claim depends on peak current, DC link ripple, PLL stability, or protection pickup time, you need a numeric acceptance band for those outputs. That band should reflect numerical noise you expect from different machines, not the spread you get from undocumented parameter choices.
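One way to make the acceptance band concrete is to store it next to the reported value and check a rerun against it. The sketch below is only an illustration: the metric names, reference values, and tolerances are placeholders invented for this example, not recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Band:
    """Acceptance band for one reported metric (illustrative names)."""
    reference: float       # value reported in the paper
    rel_tol: float         # allowed relative deviation, e.g. 0.01 for 1 %
    abs_tol: float = 0.0   # allowed absolute deviation, in the metric's unit

    def accepts(self, value: float) -> bool:
        # The wider of the two limits applies.
        limit = max(self.abs_tol, self.rel_tol * abs(self.reference))
        return abs(value - self.reference) <= limit


# Hypothetical bands; every number here is a placeholder.
BANDS = {
    "peak_current_A": Band(reference=1520.0, rel_tol=0.01),
    "dc_link_ripple_V": Band(reference=12.4, rel_tol=0.05),
    "pickup_time_s": Band(reference=0.0183, rel_tol=0.0, abs_tol=50e-6),
}


def check_rerun(metrics: dict[str, float]) -> dict[str, bool]:
    """Return pass/fail per metric; a missing metric counts as a failure."""
    return {
        name: name in metrics and band.accepts(metrics[name])
        for name, band in BANDS.items()
    }
```

Timing metrics usually suit an absolute tolerance in seconds, while amplitudes suit a relative one, which is why the sketch carries both.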

It also helps to separate three levels of repeatability so your readers know what to expect. Rerunning on the same computer tests basic run control. Reproducing on a different computer tests tool versioning, floating point differences, and hidden dependencies. Reproducing in another simulator tests modelling assumptions, and that requires even clearer documentation of physics-based equations and control logic.

Specify model transparency requirements for physics-based power system modelling

Transparent physics-based models expose equations, parameters, and component limits so others can inspect what your study actually simulates. You should be able to trace any plotted waveform back to a component model and a parameter value. Control blocks must be readable, not compiled into opaque artefacts. If a value is tuned, the tuning target must be stated.

Start with a tight “model contract” that defines what is in scope and what is not. If you use an averaged converter model, state the switching details you removed and why that is acceptable for your claim. If you include detailed switching, state how you represent device losses, dead time, and saturation. Readers do not need every intermediate note, but they do need every assumption that changes physics.

Transparency also includes naming and structure. Consistent signal names, clear subsystem boundaries, and readable units reduce the risk that another researcher wires something incorrectly and blames the tool. When a model is clear enough for a graduate student to audit, it is usually clear enough for a reviewer to trust.

Control numerical settings that most often break reproducibility

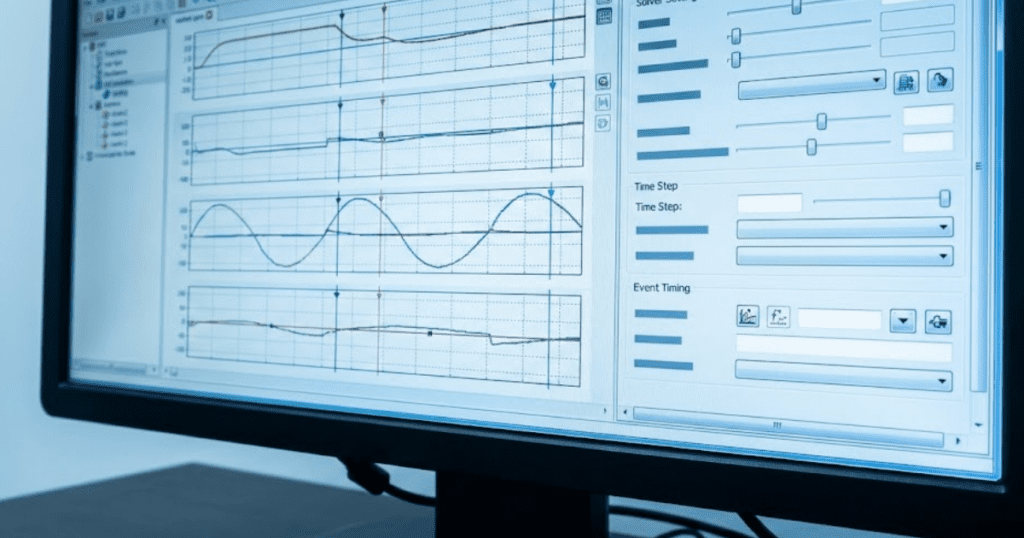

EMT reproducibility breaks when solver choices, time step, interpolation, and event handling are treated as defaults. Time step and tolerances directly affect switching ripple, control stability margins, and protection timing. Event timing rules, such as breaker operation and fault insertion, must be specified precisely. You should publish these settings as part of the study definition, not as simulator trivia.

Consider a grid fault study on a 2 MW inverter model where your claim depends on the first 10 ms of current limiting. A fixed time step of 5 µs can show a different peak and a different limiter activation instant than 20 µs, even with identical controller gains, because sampling, discretization, and switch event alignment shift. If the paper reports only the controller diagram and omits the numerical settings, another lab can “replicate” the model and still miss your headline result.
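The effect is easy to see even on a toy circuit. The sketch below is not the inverter study described above: it assumes a series RL branch with placeholder values, a source applied at 1.013 ms, and the trapezoidal rule as the integration method, with `peak_current` as a hypothetical helper name. Because the source can only appear on a grid point, the effective closing instant depends on the time step.

```python
import math


def peak_current(dt: float, t_close: float = 1.013e-3, t_end: float = 10e-3) -> float:
    """Peak |i| of a series RL branch energised at t_close (trapezoidal rule)."""
    R, L = 0.1, 1e-3                       # ohms, henries (placeholder values)
    V, f = 690.0 * math.sqrt(2), 50.0      # peak volts, hertz (placeholders)
    omega = 2 * math.pi * f

    def v_src(t: float) -> float:
        # The source is zero until the first grid point at or after t_close,
        # so the effective closing instant is quantised to the time step.
        return V * math.sin(omega * t) if t >= t_close else 0.0

    a = R * dt / (2 * L)
    i, peak = 0.0, 0.0
    steps = round(t_end / dt)
    for n in range(steps):
        t0, t1 = n * dt, (n + 1) * dt
        # Trapezoidal update of L di/dt = v - R i over one step.
        i = ((1 - a) * i + dt / (2 * L) * (v_src(t0) + v_src(t1))) / (1 + a)
        peak = max(peak, abs(i))
    return peak
```

Calling `peak_current(5e-6)` and `peak_current(20e-6)` returns two slightly different peaks, because the source is applied at 1.015 ms on the finer grid and at 1.020 ms on the coarser one. A reader who knows only the circuit values cannot tell which of the two you reported.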

Set explicit rules for how you choose numerics. Start with a time step justified by the fastest dynamics you keep, then confirm key outputs are stable under a smaller step. State any filters or decimation used for plots so readers do not confuse display smoothing with physical damping. When your results depend on threshold crossings, record the detection method and the comparison tolerance.
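For threshold crossings, recording the detection method can be as simple as publishing the function you used. The sketch below assumes a rising crossing found by linear interpolation between samples, and a comparison tolerance expressed in seconds; both function names are hypothetical and your own detection rule may differ.

```python
from typing import Optional, Sequence


def first_crossing(t: Sequence[float], y: Sequence[float], threshold: float) -> Optional[float]:
    """Time at which y first rises to or above threshold, or None.

    Uses linear interpolation between the two samples that bracket the
    crossing, so the reported instant is not quantised to the time step.
    """
    if len(t) != len(y):
        raise ValueError("t and y must have the same length")
    if len(y) > 0 and y[0] >= threshold:
        return t[0]
    for k in range(1, len(y)):
        if y[k - 1] < threshold <= y[k]:
            frac = (threshold - y[k - 1]) / (y[k] - y[k - 1])
            return t[k - 1] + frac * (t[k] - t[k - 1])
    return None


def same_instant(t_a: Optional[float], t_b: Optional[float], tol: float) -> bool:
    """Compare two detected instants using an explicit tolerance in seconds."""
    if t_a is None or t_b is None:
        return t_a is t_b   # both runs must agree that nothing crossed
    return abs(t_a - t_b) <= tol
```

If you instead report the first sample at or above the threshold, say so, because that choice alone can move a pickup time by up to one time step.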

Record inputs, initial conditions, and solver versions consistently

Repeatable EMT studies require a complete run record that captures every input, initial state, and tool version used. Initial conditions matter because controls, machine states, and network voltages can settle into different trajectories. Versioning matters because solvers, libraries, and numerical fixes change behaviour. If you can’t recreate your own figures six months later, nobody else will.

Use a run manifest that travels with the model and gets updated every time you regenerate results. Treat it like a lab notebook entry with strict fields, not free text. When you work with teams, a manifest becomes the shared reference that prevents quiet drift between “the model” and “the results.”

- Simulation tool name, exact version, and operating system details

- Solver type, fixed or variable step, time step, and error tolerances

- All input files with checksums and a single source of parameter values

- Initial condition method, including any power flow or steady-state pre-run

- Event schedule with timestamps for faults, switching, and controller mode changes
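The fields above map naturally onto a small structured record. The following is a minimal sketch, assuming a JSON file and SHA-256 checksums; the class name `RunManifest`, its field names, and the file layout are illustrative choices, not a format defined by any particular simulator.

```python
import hashlib
import json
import platform
from dataclasses import asdict, dataclass, field
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Checksum of one input file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


@dataclass
class RunManifest:
    tool_name: str
    tool_version: str
    solver: str                  # e.g. "trapezoidal"
    fixed_step: bool
    time_step_s: float
    tolerances: dict             # may be empty for a fixed-step run
    initial_conditions: str      # method, e.g. "power-flow pre-run"
    events: list                 # [{"t_s": ..., "action": ...}, ...]
    inputs: dict = field(default_factory=dict)   # relative path -> sha256
    os_details: str = field(default_factory=platform.platform)

    def add_inputs(self, root: Path, relative_paths: list[str]) -> None:
        """Record a checksum for each input, keyed by its relative path."""
        for rel in relative_paths:
            self.inputs[rel] = sha256_of(root / rel)

    def write(self, path: Path) -> None:
        """Serialise with sorted keys so diffs between runs stay readable."""
        path.write_text(json.dumps(asdict(self), indent=2, sort_keys=True))
```

Writing the manifest from the same script that launches the run, rather than by hand, is what keeps it from drifting away from the results.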

The same discipline applies to scripts used for plotting and post-processing. If a plot uses windowing, resampling, or filtering, record the settings and the code version. A clean run record turns review comments into quick reruns instead of weeks of reconstruction.

Package and share EMT studies so others can rerun

“Sharing for reproducibility means shipping a runnable bundle, not a diagram and a parameter table.”

A complete package includes model files, the run manifest, input datasets, and the plotting scripts that generate published figures. File paths must be relative and portable so the project opens on a new machine without manual repair. Your goal is a single command or click that reproduces the outputs you cite.
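Path portability can be checked mechanically before you ship. The sketch below assumes every file reference in the bundle is stored as a path relative to the bundle root; `resolve_in_bundle` is a hypothetical helper that rejects absolute paths and anything that points outside the bundle.

```python
from pathlib import Path, PureWindowsPath


def resolve_in_bundle(bundle_root: Path, relative: str) -> Path:
    """Resolve a stored path against the bundle root, rejecting escapes."""
    # Check both POSIX and Windows notions of "absolute" so that a path
    # written on one platform is still caught on the other.
    if Path(relative).is_absolute() or PureWindowsPath(relative).is_absolute():
        raise ValueError(f"absolute path is not portable: {relative}")
    root = bundle_root.resolve()
    target = (root / relative).resolve()
    if target != root and root not in target.parents:
        raise ValueError(f"path leaves the bundle: {relative}")
    return target
```

Running every path in the study through a check like this catches the common case where a model silently depends on a file in someone's home directory.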

Packaging works best when you separate editable source from generated artefacts. Keep source models, parameter sets, and scripts under version control, and store generated plots in a results folder tied to a specific commit. Archive the exact run bundle associated with a submission so later edits do not overwrite the provenance of published figures.

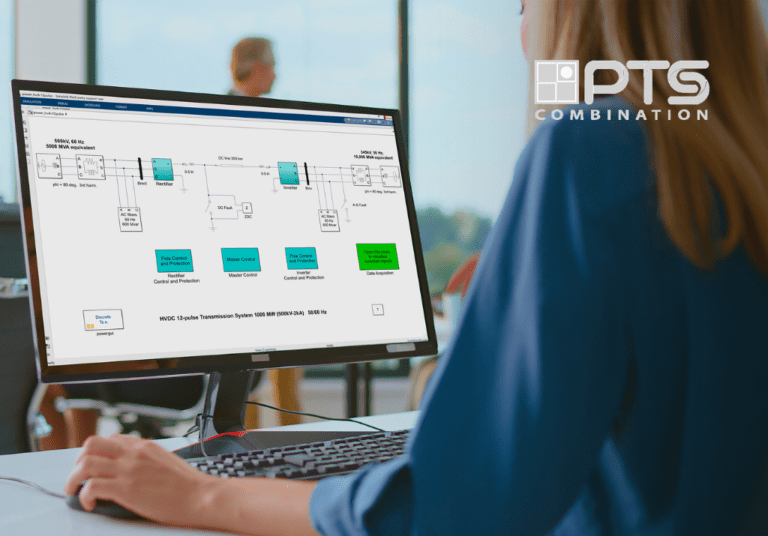

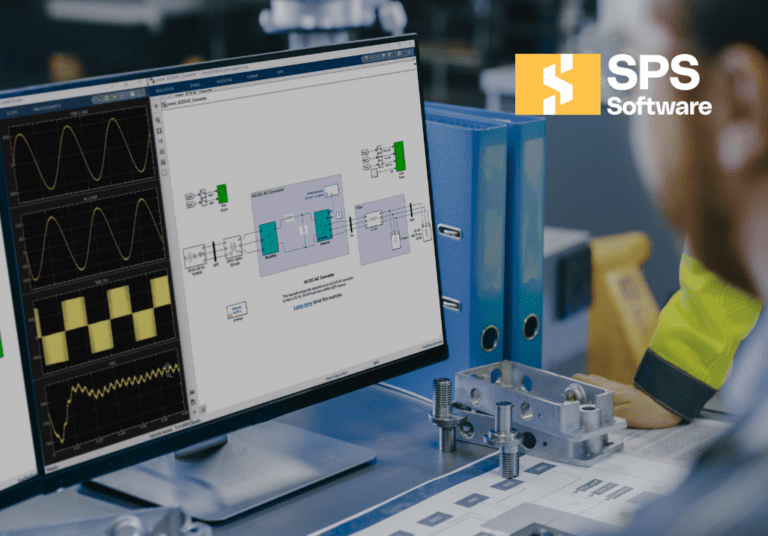

Some teams standardize this workflow inside SPS SOFTWARE because open, editable component models and clear parameterization make it easier to bundle what matters for reruns. The tool choice matters less than the habit: if the recipient cannot inspect and execute what you used, the study cannot be reproduced.

Detect common reporting gaps that block repeatable results

The fastest way to improve reproducibility is to look for the gaps reviewers repeatedly hit: missing numerics, missing initial conditions, and missing event definitions. These omissions are not minor, because EMT outputs can shift with tiny differences. In one survey, 52% of researchers agreed that there is a significant reproducibility crisis. That pattern matches what power system reviewers see when simulation results can’t be rerun.

A simple self-test catches most issues before submission. Another person on your team should be able to clone the study bundle, run it on a clean machine, and regenerate every figure without asking you questions. If they need an email thread to find solver settings, a parameter file, or the exact event timing, the paper is not ready for scrutiny.
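Part of that self-test can be automated. The sketch below assumes the bundle contains a hypothetical `manifest.json` whose `inputs` entry maps relative file paths to SHA-256 checksums, and that fields named `tool_version`, `time_step_s`, and `events` are expected; all of these names are illustrative rather than a standard.

```python
import hashlib
import json
from pathlib import Path


def verify_bundle(bundle_root: Path, manifest_name: str = "manifest.json") -> list[str]:
    """Return a list of problems; an empty list means the checks passed."""
    manifest = json.loads((bundle_root / manifest_name).read_text())
    problems = []
    # Every recorded input must exist and match its recorded checksum.
    for rel, expected in sorted(manifest.get("inputs", {}).items()):
        path = bundle_root / rel
        if not path.is_file():
            problems.append(f"missing: {rel}")
            continue
        actual = hashlib.sha256(path.read_bytes()).hexdigest()
        if actual != expected:
            problems.append(f"checksum mismatch: {rel}")
    # Fields a rerunner would otherwise have to ask for by email.
    for key in ("tool_version", "time_step_s", "events"):
        if key not in manifest:
            problems.append(f"manifest field not recorded: {key}")
    return problems
```

A check like this does not prove the figures regenerate, but it removes the most common reasons a colleague gets stuck before the first run.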

| Reproducibility checkpoint | What you must record | What a rerunner can verify quickly |
|---|---|---|
| Model transparency | Editable equations, readable control logic, and parameter sources | Every plotted signal traces to a model element and value |
| Numerical configuration | Solver type, step size, tolerances, and event timing rules | Key peaks and timing match within your stated tolerance band |
| Initial conditions | Pre-run method, power flow assumptions, and state initialization files | Startup transients and steady-state values align with reported baselines |
| Inputs and disturbances | Parameter sets, external data, and a timestamped event schedule | Faults, switching, and mode changes occur at identical times |
| Provenance and packaging | Tool versions, run manifest, and portable file structure | The study runs on a clean machine without path fixes |

Good reproducibility feels strict, but it pays off in calmer review cycles and cleaner internal handoffs. Teams that treat the model as a publishable artefact, not a personal workspace, build credibility that accumulates over time. SPS SOFTWARE fits best when you want that discipline supported by transparent, inspectable physics-based models, yet the outcome still depends on your run records and packaging habits.